---

license: apache-2.0

pipeline_tag: visual-question-answering

---

## About

This model was trained with the [BakLLaVA](https://github.com/SkunkworksAI/BakLLaVA/) repo, using [TinyLlama](https://huggingface.co/PY007/TinyLlama-1.1B-Chat-v0.3) as the base model.

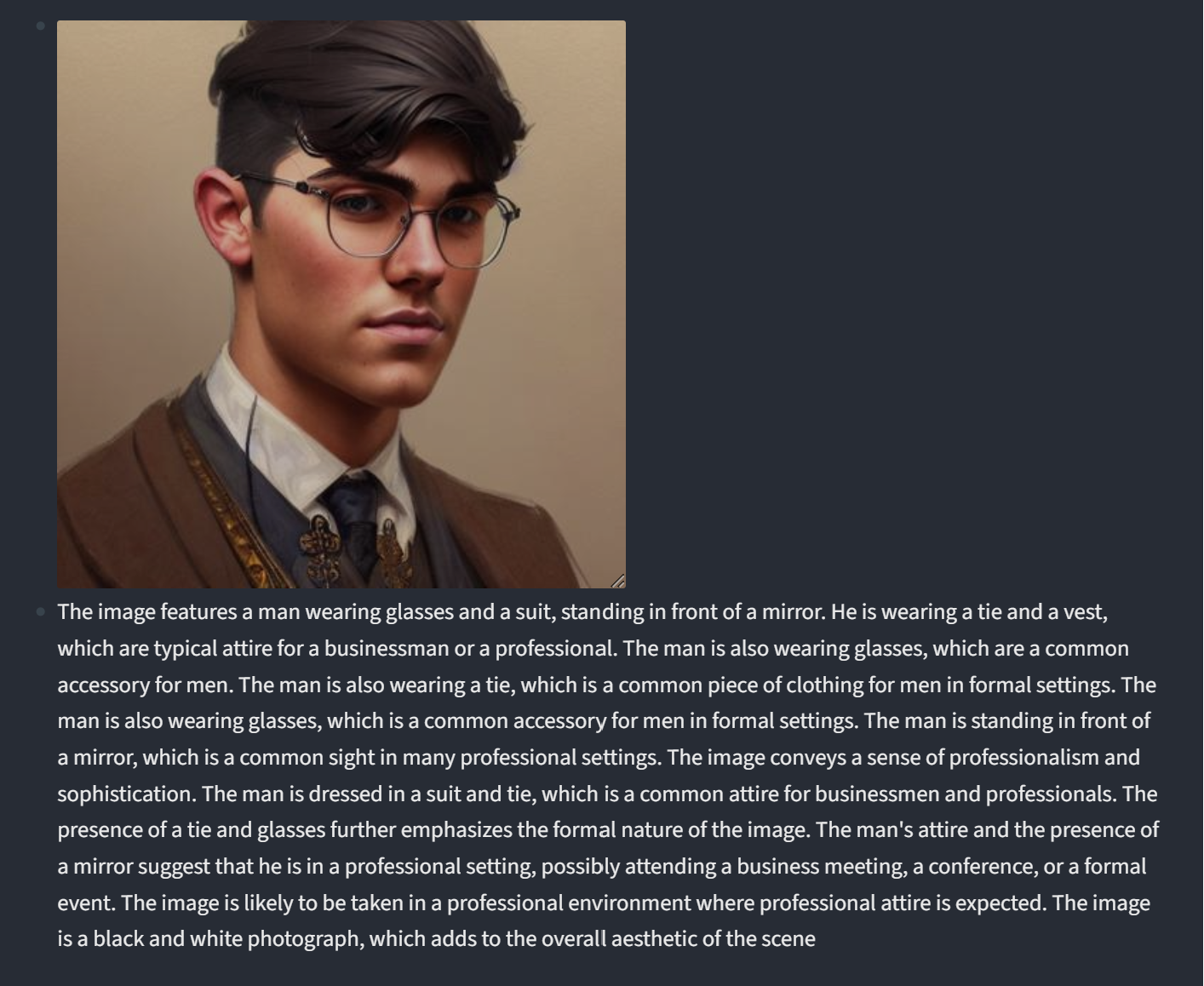

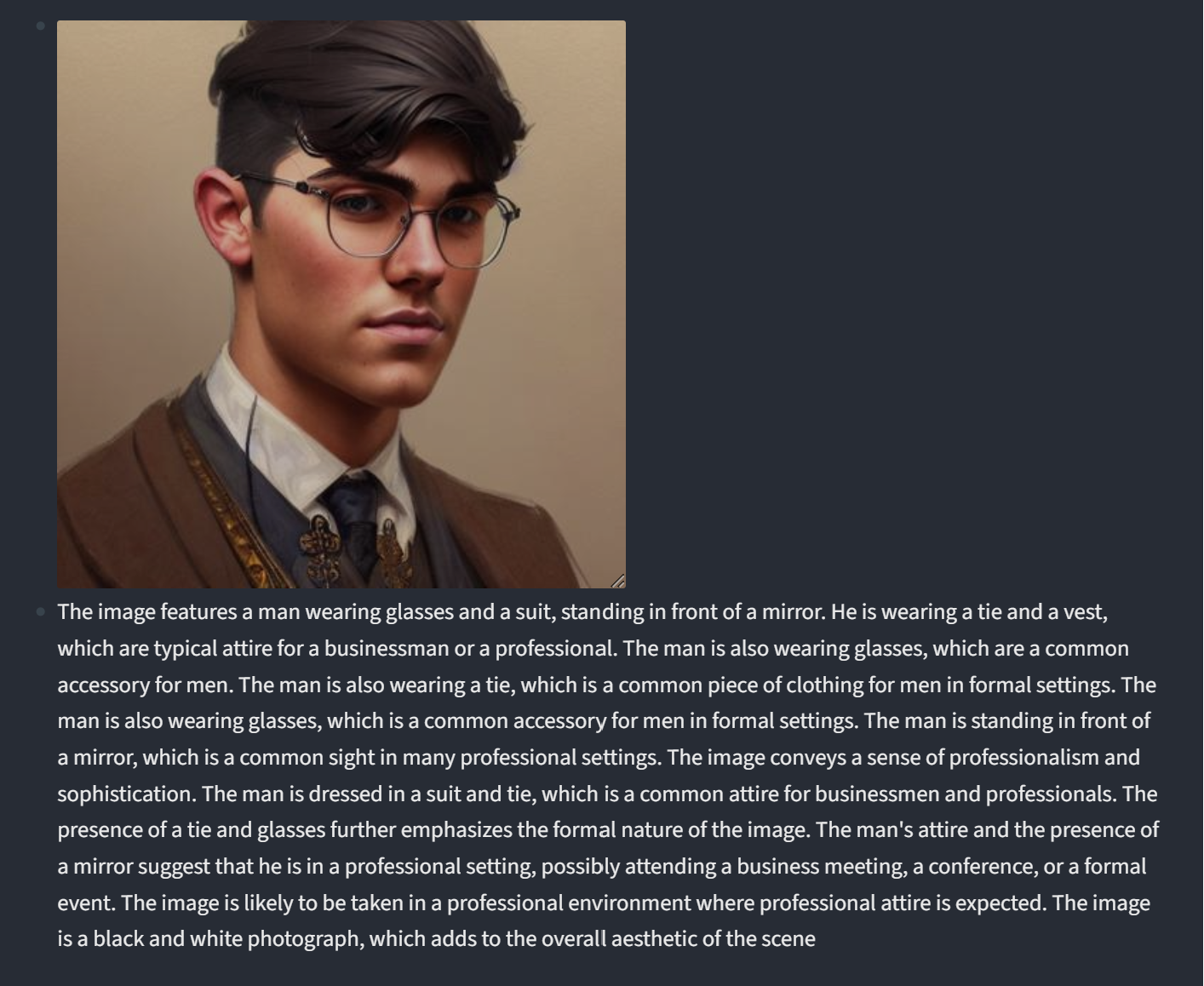

## Examples

Prompt for both was, "What is shown in the given image?"

## Install

If you are not using Linux, do *NOT* proceed. See the instructions for [macOS](https://github.com/haotian-liu/LLaVA/blob/main/docs/macOS.md) and [Windows](https://github.com/haotian-liu/LLaVA/blob/main/docs/Windows.md) instead.

1. Clone the LLaVA repository and navigate to the LLaVA folder

```bash
git clone https://github.com/haotian-liu/LLaVA.git
cd LLaVA
```

2. Install the package

```Shell
conda create -n llava python=3.10 -y
conda activate llava
pip install --upgrade pip # enable PEP 660 support
pip install -e .
```

3. Install additional packages for training

```Shell
pip install -e ".[train]"
pip install flash-attn --no-build-isolation
```

### Upgrade to the latest code base

```Shell
git pull
pip install -e .
```

#### Launch a controller

```Shell
python -m llava.serve.controller --host 0.0.0.0 --port 10000
```

#### Launch a Gradio web server

```Shell
python -m llava.serve.gradio_web_server --controller http://localhost:10000 --model-list-mode reload
```

You have just launched the Gradio web interface. Open it with the URL printed on the screen. The model list will be empty at this point because no model worker has been launched yet; it updates automatically once you launch one.

#### Launch a model worker

This is the actual *worker* that performs the inference on the GPU. Each worker is responsible for a single model specified in `--model-path`.

```Shell
python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40000 --worker http://localhost:40000 --model-path ameywtf/tinyllava-1.1b-v0.1
```

Wait until the process finishes loading the model and you see "Uvicorn running on ...". Now, refresh your Gradio web UI, and you will see the model you just launched in the model list.
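If you start the worker from a script rather than watching the terminal, the wait can be automated. The sketch below is one possible approach, not part of LLaVA: it assumes you redirect the worker's output to a log file (the name `worker.log` and the helper `wait_for_log` are hypothetical) and polls that file for the "Uvicorn running on" text.

```shell
# Hypothetical helper: poll a log file until it contains the given text,
# or give up after a timeout in seconds (default 120).
wait_for_log() {
  log_file="$1"
  needle="$2"
  timeout="${3:-120}"
  elapsed=0
  while [ "$elapsed" -lt "$timeout" ]; do
    # grep -F treats the needle as a fixed string, -q suppresses output
    if [ -f "$log_file" ] && grep -qF "$needle" "$log_file"; then
      return 0
    fi
    sleep 1
    elapsed=$((elapsed + 1))
  done
  return 1
}

# Example usage (commented out so the sketch runs without LLaVA installed):
# python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 \
#   --port 40000 --worker http://localhost:40000 \
#   --model-path ameywtf/tinyllava-1.1b-v0.1 > worker.log 2>&1 &
# wait_for_log worker.log "Uvicorn running on" 300 && echo "worker is ready"
```

The `2>&1` redirect in the usage example is there so that messages written to standard error also end up in the log file.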

You can launch as many workers as you want and compare different model checkpoints in the same Gradio interface. Keep `--controller` the same for every worker, and give each worker its own port number in both `--port` and `--worker`.

```Shell
python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port <port> --worker http://localhost:<port> --model-path <model-path>
```
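To keep the port in `--port` and `--worker` consistent across several workers, the command can be generated by a small helper. This is a minimal sketch: `worker_cmd` is a hypothetical function, and `path/to/second-checkpoint` is a placeholder rather than a real model. It only prints the commands so you can inspect them before running anything.

```shell
# Hypothetical helper: print the model_worker command for one worker.
# The same port is used for --port and for the --worker URL.
worker_cmd() {
  port="$1"
  model="$2"
  printf 'python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port %s --worker http://localhost:%s --model-path %s\n' \
    "$port" "$port" "$model"
}

# One worker per checkpoint, each on its own port.
worker_cmd 40000 ameywtf/tinyllava-1.1b-v0.1
worker_cmd 40001 path/to/second-checkpoint   # placeholder path
```

Once the printed commands look right, run each one in its own terminal or background it with `&`.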

If you are using an Apple device with an M1 or M2 chip, you can specify the mps device by using the `--device` flag: `--device mps`.
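In a launch script shared between machines, the flag can be added conditionally. The sketch below assumes that Apple-silicon Macs report `Darwin` from `uname -s` and `arm64` from `uname -m`, which is not the case for a shell running under Rosetta; `device_flag` is a hypothetical helper name.

```shell
# Hypothetical helper: print "--device mps" on Apple-silicon macOS and
# nothing otherwise. Arguments default to this machine's uname output.
device_flag() {
  os="${1:-$(uname -s)}"
  arch="${2:-$(uname -m)}"
  if [ "$os" = "Darwin" ] && [ "$arch" = "arm64" ]; then
    printf '%s\n' '--device mps'
  fi
}

# Example usage (commented out so the sketch runs without LLaVA installed):
# python -m llava.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 \
#   --port 40000 --worker http://localhost:40000 \
#   --model-path ameywtf/tinyllava-1.1b-v0.1 $(device_flag)
```

The command substitution is left unquoted on purpose so that `--device` and `mps` are passed as two separate arguments, and so that nothing is passed at all on other machines.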
