Upload 9 files

- .gitattributes +1 -0

- README.md +180 -0

- added_tokens.json +24 -0

- config.json +39 -0

- generation_config.json +14 -0

- merges.txt +0 -0

- special_tokens_map.json +31 -0

- tokenizer.json +3 -0

- tokenizer_config.json +208 -0

- vocab.json +0 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

README.md

ADDED

|

@@ -0,0 +1,180 @@

|

|

|

|

|

|

|

|

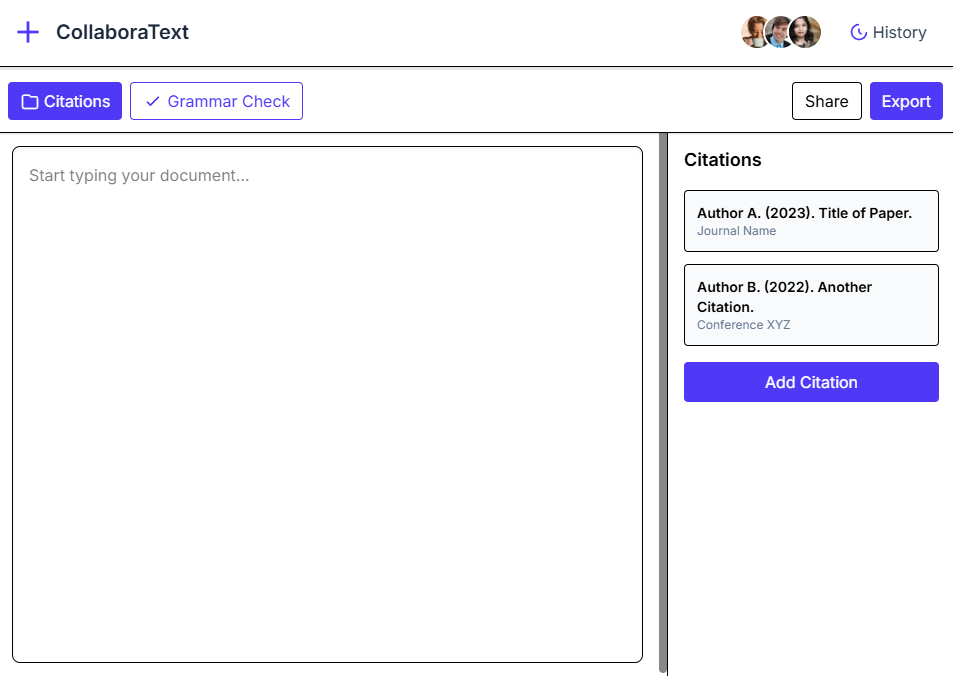

|

| 1 |

+

---

|

| 2 |

+

base_model: Qwen/Qwen2.5-Coder-7B-Instruct

|

| 3 |

+

tags:

|

| 4 |

+

- text-generation-inference

|

| 5 |

+

- transformers

|

| 6 |

+

- qwen2

|

| 7 |

+

- ui-generation

|

| 8 |

+

- peft

|

| 9 |

+

- lora

|

| 10 |

+

- tailwind-css

|

| 11 |

+

- html

|

| 12 |

+

license: apache-2.0

|

| 13 |

+

language:

|

| 14 |

+

- en

|

| 15 |

+

---

|

| 16 |

+

|

| 17 |

+

# Model Card for UIGEN-T2-7B

|

| 18 |

+

|

| 19 |

+

<!-- Provide a quick summary of what the model is/does. -->

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

[Our Training Article](https://cypress-dichondra-4b5.notion.site/UIGEN-T2-Training-1e393ce17c258024abfcff24dae7bedd)

|

| 25 |

+

|

| 26 |

+

[Testing Github for Artifacts](https://github.com/TesslateAI/UIGEN-T2-Artifacts)

|

| 27 |

+

|

| 28 |

+

## **Model Overview**

|

| 29 |

+

|

| 30 |

+

We're excited to introduce **UIGEN-T2**, the next evolution in our UI generation model series. Fine-tuned from the **Qwen2.5-Coder-7B-Instruct** base model using PEFT/LoRA, UIGEN-T2 is designed to generate **HTML and Tailwind CSS** code for web interfaces. Two things set UIGEN-T2 apart: it was trained on a **50,000-sample dataset** (up from 400), and it has a **UI-based reasoning capability**, so the code it generates is informed by explicit design reasoning rather than produced directly from the prompt.

|

| 31 |

+

|

| 32 |

+

---

|

| 33 |

+

|

| 34 |

+

## **Model Highlights**

|

| 35 |

+

|

| 36 |

+

- **High-Quality UI Code Generation**: Produces functional and semantic HTML combined with utility-first Tailwind CSS.

|

| 37 |

+

- **Massive Training Dataset**: Trained on 50,000 diverse UI examples, enabling broader component understanding and stylistic range.

|

| 38 |

+

- **Innovative UI-Based Reasoning**: Incorporates detailed reasoning traces generated by a specialized "teacher" model, ensuring outputs consider usability, layout, and aesthetics. (*See example reasoning in description below*)

|

| 39 |

+

- **PEFT/LoRA Trained (Rank 128)**: Efficiently fine-tuned for UI generation. We've published LoRA checkpoints at each training step for transparency and community use!

|

| 40 |

+

- **Improved Chat Interaction**: Streamlined prompt flow – no more need for the awkward double `think` prompt! Interaction feels more natural.

|

| 41 |

+

|

| 42 |

+

---

|

| 43 |

+

|

| 44 |

+

## **Example Reasoning (Internal Guide for Generation)**

|

| 45 |

+

|

| 46 |

+

Here's a glimpse into the kind of reasoning that guides UIGEN-T2 internally, generated by our specialized teacher model:

|

| 47 |

+

|

| 48 |

+

```plaintext

|

| 49 |

+

<|begin_of_thought|>

|

| 50 |

+

When approaching the challenge of crafting an elegant stopwatch UI, my first instinct is to dissect what truly makes such an interface delightful yet functional—hence, I consider both aesthetic appeal and usability grounded in established heuristics like Nielsen’s “aesthetic and minimalist design” alongside Gestalt principles... placing the large digital clock prominently aligns with Fitts’ Law... The glassmorphism effect here enhances visual separation... typography choices—the use of a monospace font family ("Fira Code" via Google Fonts) supports readability... iconography paired with labels inside buttons provides dual coding... Tailwind CSS v4 enables utility-driven consistency... critical reflection concerns responsiveness: flexbox layouts combined with relative sizing guarantee graceful adaptation...

|

| 51 |

+

<|end_of_thought|>

|

| 52 |

+

```
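If the reasoning trace and the generated markup need to be handled separately, the two can be split on the thought markers. The following is a minimal sketch, assuming the model's output wraps its reasoning in the same `<|begin_of_thought|>` and `<|end_of_thought|>` markers shown in the example above; the function name `split_reasoning` is hypothetical.

```python
import re

# Assumption: reasoning is delimited by the markers shown in the example above.
THOUGHT_PATTERN = re.compile(
    r"<\|begin_of_thought\|>(.*?)<\|end_of_thought\|>", re.DOTALL
)


def split_reasoning(text: str) -> tuple[str, str]:
    """Return (reasoning, remainder); reasoning is empty if no markers are found."""
    match = THOUGHT_PATTERN.search(text)
    if match is None:
        return "", text.strip()
    reasoning = match.group(1).strip()
    # Everything outside the marked span is treated as the generated code.
    remainder = (text[: match.start()] + text[match.end():]).strip()
    return reasoning, remainder
```

Text without markers is returned unchanged as the remainder, so the helper is safe to call on any completion.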

|

| 53 |

+

|

| 54 |

+

---

|

| 55 |

+

|

| 56 |

+

## **Example Outputs**

|

| 57 |

+

|

| 58 |

+

|

| 59 |

+

|

| 60 |

+

|

| 61 |

+

|

| 62 |

+

|

| 63 |

+

|

| 64 |

+

|

| 65 |

+

|

| 66 |

+

|

| 67 |

+

---

|

| 68 |

+

|

| 69 |

+

## **Use Cases**

|

| 70 |

+

|

| 71 |

+

### **Recommended Uses**

|

| 72 |

+

- **Rapid UI Prototyping**: Quickly generate HTML/Tailwind code snippets from descriptions or wireframes.

|

| 73 |

+

- **Component Generation**: Create standard and custom UI components (buttons, cards, forms, layouts).

|

| 74 |

+

- **Frontend Development Assistance**: Accelerate development by generating baseline component structures.

|

| 75 |

+

- **Design-to-Code Exploration**: Bridge the gap between design concepts and initial code implementation.

|

| 76 |

+

|

| 77 |

+

### **Limitations**

|

| 78 |

+

- **Current Framework Focus**: Primarily generates HTML and Tailwind CSS. (Bootstrap support is planned.)

|

| 79 |

+

- **Complex JavaScript Logic**: Focuses on structure and styling; dynamic behavior and complex state management typically require manual implementation.

|

| 80 |

+

- **Highly Specific Design Systems**: May need further fine-tuning for strict adherence to unique, complex corporate design systems.

|

| 81 |

+

|

| 82 |

+

---

|

| 83 |

+

|

| 84 |

+

## **How to Use**

|

| 85 |

+

|

| 86 |

+

Use the following system prompt:

|

| 87 |

+

```

|

| 88 |

+

You are Tesslate, a helpful assistant specialized in UI generation.

|

| 89 |

+

```

|

| 90 |

+

Recommended sampling parameters: temperature 0.7, top-p 0.9.

|

| 91 |

+

|

| 92 |

+

### **Inference Example**

|

| 93 |

+

```python

|

| 94 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 95 |

+

import torch

|

| 96 |

+

|

| 97 |

+

# Make sure you have PEFT installed: pip install peft

|

| 98 |

+

from peft import PeftModel

|

| 99 |

+

|

| 100 |

+

# Use your specific model name/path once uploaded

|

| 101 |

+

model_name_or_path = "tesslate/UIGEN-T2" # Placeholder - replace with actual HF repo name

|

| 102 |

+

base_model_name = "Qwen/Qwen2.5-Coder-7B-Instruct"

|

| 103 |

+

|

| 104 |

+

# Load the base model

|

| 105 |

+

base_model = AutoModelForCausalLM.from_pretrained(

|

| 106 |

+

base_model_name,

|

| 107 |

+

torch_dtype=torch.bfloat16, # or float16 if bf16 not supported

|

| 108 |

+

device_map="auto"

|

| 109 |

+

)

|

| 110 |

+

|

| 111 |

+

# Load the PEFT model (LoRA weights)

|

| 112 |

+

model = PeftModel.from_pretrained(base_model, model_name_or_path)

|

| 113 |

+

tokenizer = AutoTokenizer.from_pretrained(base_model_name) # Use base tokenizer

|

| 114 |

+

|

| 115 |

+

# Note the simplified prompt structure (no double 'think')

|

| 116 |

+

prompt = """<|im_start|>user

|

| 117 |

+

Create a simple card component using Tailwind CSS with an image, title, and description.<|im_end|>

|

| 118 |

+

<|im_start|>assistant

|

| 119 |

+

""" # Model will generate reasoning and code following this

|

| 120 |

+

|

| 121 |

+

inputs = tokenizer(prompt, return_tensors="pt").to(model.device)

|

| 122 |

+

|

| 123 |

+

# Adjust generation parameters as needed

|

| 124 |

+

outputs = model.generate(**inputs, max_new_tokens=1024, do_sample=True, temperature=0.7, top_p=0.9)

|

| 125 |

+

|

| 126 |

+

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

|

| 127 |

+

```
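The inference example above builds its prompt by hand and leaves out the system prompt that this section asks for. The sketch below shows one way to assemble a ChatML prompt that includes it. The helper name `build_chatml_prompt` is hypothetical, and the layout is assumed to follow the `<|im_start|>` / `<|im_end|>` convention used in the example.

```python
SYSTEM_PROMPT = "You are Tesslate, a helpful assistant specialized in UI generation."


def build_chatml_prompt(user_message: str, system_prompt: str = SYSTEM_PROMPT) -> str:
    """Assemble a single-turn ChatML prompt that ends at the assistant turn."""
    return (
        f"<|im_start|>system\n{system_prompt}<|im_end|>\n"
        f"<|im_start|>user\n{user_message}<|im_end|>\n"
        "<|im_start|>assistant\n"
    )


prompt = build_chatml_prompt(
    "Create a simple card component using Tailwind CSS with an image, title, and description."
)
```

When a tokenizer is already loaded, `tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)` with a system and a user message should give an equivalent string, provided the tokenizer ships a ChatML chat template.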

|

| 128 |

+

---

|

| 129 |

+

|

| 130 |

+

## **Performance and Evaluation**

|

| 131 |

+

|

| 132 |

+

- **Strengths**:

|

| 133 |

+

- Generates semantically correct and well-structured HTML/Tailwind CSS.

|

| 134 |

+

- Leverages a large dataset (50k samples) for improved robustness and diversity.

|

| 135 |

+

- Incorporates design reasoning for more thoughtful UI outputs.

|

| 136 |

+

- Improved usability via streamlined chat template.

|

| 137 |

+

- Openly published LoRA checkpoints for community use.

|

| 138 |

+

- **Weaknesses**:

|

| 139 |

+

- Currently limited to HTML/Tailwind CSS (Bootstrap planned).

|

| 140 |

+

- Complex JavaScript interactivity requires manual implementation.

|

| 141 |

+

- Reinforcement Learning refinement (for stricter adherence to principles/rewards) is a future step.

|

| 142 |

+

|

| 143 |

+

---

|

| 144 |

+

|

| 145 |

+

## **Technical Specifications**

|

| 146 |

+

|

| 147 |

+

- **Architecture**: Transformer-based LLM adapted with PEFT/LoRA

|

| 148 |

+

- **Base Model**: Qwen/Qwen2.5-Coder-7B-Instruct

|

| 149 |

+

- **Adapter Rank (LoRA)**: 128

|

| 150 |

+

- **Training Data Size**: 50,000 samples

|

| 151 |

+

- **Precision**: Trained using bf16/fp16. Base model requires appropriate precision handling.

|

| 152 |

+

- **Hardware Requirements**: A GPU with at least 16 GB of VRAM is recommended for efficient inference; the actual requirement depends on quantization and precision.

|

| 153 |

+

- **Software Dependencies**:

|

| 154 |

+

- Hugging Face Transformers (`transformers`)

|

| 155 |

+

- PyTorch (`torch`)

|

| 156 |

+

- Parameter-Efficient Fine-Tuning (`peft`)

|

| 157 |

+

|

| 158 |

+

---

|

| 159 |

+

|

| 160 |

+

## **Citation**

|

| 161 |

+

|

| 162 |

+

If you use UIGEN-T2 or the LoRA checkpoints in your work, please cite us:

|

| 163 |

+

|

| 164 |

+

```bibtex

|

| 165 |

+

@misc{tesslate_UIGEN-T2,

|

| 166 |

+

title={UIGEN-T2: Scaling UI Generation with Reasoning on Qwen2.5-Coder-7B},

|

| 167 |

+

author={tesslate},

|

| 168 |

+

year={2024},

|

| 169 |

+

publisher={Hugging Face},

|

| 170 |

+

url={https://huggingface.co/tesslate/UIGEN-T2}

|

| 171 |

+

}

|

| 172 |

+

```

|

| 173 |

+

|

| 174 |

+

---

|

| 175 |

+

|

| 176 |

+

## **Contact & Community**

|

| 177 |

+

- **Creator:** [tesslate](https://huggingface.co/tesslate)

|

| 178 |

+

- **LoRA Checkpoints**: [tesslate](https://huggingface.co/tesslate)

|

| 179 |

+

- **Repository & Demo**: [smirki](https://huggingface.co/smirki)

|

| 180 |

+

|

added_tokens.json

ADDED

|

@@ -0,0 +1,24 @@

|

|

| 1 |

+

{

|

| 2 |

+

"</tool_call>": 151658,

|

| 3 |

+

"<tool_call>": 151657,

|

| 4 |

+

"<|box_end|>": 151649,

|

| 5 |

+

"<|box_start|>": 151648,

|

| 6 |

+

"<|endoftext|>": 151643,

|

| 7 |

+

"<|file_sep|>": 151664,

|

| 8 |

+

"<|fim_middle|>": 151660,

|

| 9 |

+

"<|fim_pad|>": 151662,

|

| 10 |

+

"<|fim_prefix|>": 151659,

|

| 11 |

+

"<|fim_suffix|>": 151661,

|

| 12 |

+

"<|im_end|>": 151645,

|

| 13 |

+

"<|im_start|>": 151644,

|

| 14 |

+

"<|image_pad|>": 151655,

|

| 15 |

+

"<|object_ref_end|>": 151647,

|

| 16 |

+

"<|object_ref_start|>": 151646,

|

| 17 |

+

"<|quad_end|>": 151651,

|

| 18 |

+

"<|quad_start|>": 151650,

|

| 19 |

+

"<|repo_name|>": 151663,

|

| 20 |

+

"<|video_pad|>": 151656,

|

| 21 |

+

"<|vision_end|>": 151653,

|

| 22 |

+

"<|vision_pad|>": 151654,

|

| 23 |

+

"<|vision_start|>": 151652

|

| 24 |

+

}

|

config.json

ADDED

|

@@ -0,0 +1,39 @@

|

|

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"Qwen2ForCausalLM"

|

| 4 |

+

],

|

| 5 |

+

"attention_dropout": 0.0,

|

| 6 |

+

"bos_token_id": 151643,

|

| 7 |

+

"eos_token_id": 151645,

|

| 8 |

+

"hidden_act": "silu",

|

| 9 |

+

"hidden_size": 3584,

|

| 10 |

+

"initializer_range": 0.02,

|

| 11 |

+

"intermediate_size": 18944,

|

| 12 |

+

"max_position_embeddings": 32768,

|

| 13 |

+

"max_window_layers": 28,

|

| 14 |

+

"model_type": "qwen2",

|

| 15 |

+

"num_attention_heads": 28,

|

| 16 |

+

"num_hidden_layers": 28,

|

| 17 |

+

"num_key_value_heads": 4,

|

| 18 |

+

"rms_norm_eps": 1e-06,

|

| 19 |

+

"rope_scaling": null,

|

| 20 |

+

"rope_theta": 1000000.0,

|

| 21 |

+

"sliding_window": 131072,

|

| 22 |

+

"tie_word_embeddings": false,

|

| 23 |

+

"torch_dtype": "bfloat16",

|

| 24 |

+

"transformers_version": "4.50.0.dev0",

|

| 25 |

+

"use_cache": true,

|

| 26 |

+

"use_sliding_window": false,

|

| 27 |

+

"vocab_size": 152064,

|

| 28 |

+

"quantization_config": {

|

| 29 |

+

"quant_method": "exl2",

|

| 30 |

+

"version": "0.2.9",

|

| 31 |

+

"bits": 4.0,

|

| 32 |

+

"head_bits": 6,

|

| 33 |

+

"calibration": {

|

| 34 |

+

"rows": 115,

|

| 35 |

+

"length": 2048,

|

| 36 |

+

"dataset": "(default)"

|

| 37 |

+

}

|

| 38 |

+

}

|

| 39 |

+

}
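As a rough sanity check on memory needs, the parameter count can be estimated from the values in this config. This is a sketch under stated assumptions: it assumes the usual Qwen2 layer layout (biases on the q/k/v projections only, a gated MLP with three weight matrices, two RMSNorm weights per layer), and the function names are hypothetical. It counts weights only; KV cache and activations are not included, and EXL2 assigns bits per layer, so `bits: 4.0` is treated here as an average.

```python
def qwen2_param_count(hidden, intermediate, layers, heads, kv_heads, vocab, tied_embeddings):
    """Estimate parameters for a Qwen2-style decoder (assumed layout, see lead-in)."""
    head_dim = hidden // heads
    kv_dim = kv_heads * head_dim
    attention = hidden * hidden + hidden          # q_proj weight + bias
    attention += 2 * (hidden * kv_dim + kv_dim)   # k_proj and v_proj, weight + bias
    attention += hidden * hidden                  # o_proj, no bias
    mlp = 3 * hidden * intermediate               # gate, up, and down projections
    norms = 2 * hidden                            # two RMSNorm weights per layer
    embeddings = vocab * hidden
    lm_head = 0 if tied_embeddings else vocab * hidden
    final_norm = hidden
    return layers * (attention + mlp + norms) + embeddings + lm_head + final_norm


def weight_gib(param_count, bits_per_weight):
    """Size of the weights alone, in GiB, at a given average bit width."""
    return param_count * bits_per_weight / 8 / 2**30


# Values copied from config.json above.
params = qwen2_param_count(
    hidden=3584, intermediate=18944, layers=28, heads=28,
    kv_heads=4, vocab=152064, tied_embeddings=False,
)
print(f"{params / 1e9:.2f}B parameters")
print(f"bf16 weights: {weight_gib(params, 16):.1f} GiB")
print(f"4.0-bit weights: {weight_gib(params, 4.0):.1f} GiB")
```

The estimate comes out at about 7.6 billion parameters, which puts bf16 weights a little over 14 GiB and is consistent with the 16 GB VRAM recommendation in the README.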

|

generation_config.json

ADDED

|

@@ -0,0 +1,14 @@

|

|

| 1 |

+

{

|

| 2 |

+

"bos_token_id": 151643,

|

| 3 |

+

"do_sample": true,

|

| 4 |

+

"eos_token_id": [

|

| 5 |

+

151645,

|

| 6 |

+

151643

|

| 7 |

+

],

|

| 8 |

+

"pad_token_id": 151643,

|

| 9 |

+

"repetition_penalty": 1.1,

|

| 10 |

+

"temperature": 0.7,

|

| 11 |

+

"top_k": 20,

|

| 12 |

+

"top_p": 0.8,

|

| 13 |

+

"transformers_version": "4.50.0.dev0"

|

| 14 |

+

}
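To make the sampling fields above concrete, the sketch below applies temperature scaling, top-k truncation, and top-p (nucleus) truncation to a small list of logits and returns the resulting distribution. It is a simplified illustration rather than the Transformers implementation, the function name is hypothetical, and `repetition_penalty` is not modelled.

```python
import math


def sampling_distribution(logits, temperature=0.7, top_k=20, top_p=0.8):
    """Return per-token probabilities after temperature, top-k, then top-p filtering."""
    scaled = [value / temperature for value in logits]
    # Keep the top_k highest-scoring token indices, best first.
    ranked = sorted(range(len(scaled)), key=lambda i: scaled[i], reverse=True)
    candidates = ranked[:top_k]
    # Softmax over the surviving candidates (shifted by the max for stability).
    peak = scaled[candidates[0]]
    weights = {i: math.exp(scaled[i] - peak) for i in candidates}
    total = sum(weights.values())
    # Nucleus step: keep the smallest prefix whose cumulative mass reaches top_p.
    kept, cumulative = [], 0.0
    for i in candidates:
        kept.append(i)
        cumulative += weights[i] / total
        if cumulative >= top_p:
            break
    kept_mass = sum(weights[i] for i in kept)
    probs = [0.0] * len(logits)
    for i in kept:
        probs[i] = weights[i] / kept_mass
    return probs
```

Lower `temperature` sharpens the distribution before filtering, so fewer tokens are needed to reach the `top_p` threshold.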

|

merges.txt

ADDED

|

The diff for this file is too large to render.

|

|

|

special_tokens_map.json

ADDED

|

@@ -0,0 +1,31 @@

|

|

| 1 |

+

{

|

| 2 |

+

"additional_special_tokens": [

|

| 3 |

+

"<|im_start|>",

|

| 4 |

+

"<|im_end|>",

|

| 5 |

+

"<|object_ref_start|>",

|

| 6 |

+

"<|object_ref_end|>",

|

| 7 |

+

"<|box_start|>",

|

| 8 |

+

"<|box_end|>",

|

| 9 |

+

"<|quad_start|>",

|

| 10 |

+

"<|quad_end|>",

|

| 11 |

+

"<|vision_start|>",

|

| 12 |

+

"<|vision_end|>",

|

| 13 |

+

"<|vision_pad|>",

|

| 14 |

+

"<|image_pad|>",

|

| 15 |

+

"<|video_pad|>"

|

| 16 |

+

],

|

| 17 |

+

"eos_token": {

|

| 18 |

+

"content": "<|im_end|>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": false,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

},

|

| 24 |

+

"pad_token": {

|

| 25 |

+

"content": "<|endoftext|>",

|

| 26 |

+

"lstrip": false,

|

| 27 |

+

"normalized": false,

|

| 28 |

+

"rstrip": false,

|

| 29 |

+

"single_word": false

|

| 30 |

+

}

|

| 31 |

+

}
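The special-token settings are spread across several files in this commit, so a quick consistency check can be useful. The sketch below copies the relevant values by hand from `added_tokens.json`, `special_tokens_map.json`, and `generation_config.json` and confirms they agree; the function name and the dictionary layout are illustrative assumptions, not part of any library.

```python
# Values copied by hand from the files in this commit.
ADDED_TOKENS = {"<|endoftext|>": 151643, "<|im_start|>": 151644, "<|im_end|>": 151645}
SPECIAL_TOKENS = {"eos_token": "<|im_end|>", "pad_token": "<|endoftext|>"}
GENERATION = {"eos_token_id": [151645, 151643], "pad_token_id": 151643}


def check_special_token_ids(added, special, generation):
    """Return a list of human-readable mismatches (empty when everything agrees)."""
    problems = []
    eos_id = added.get(special["eos_token"])
    pad_id = added.get(special["pad_token"])
    if eos_id not in generation["eos_token_id"]:
        problems.append(f"eos token id {eos_id} not in {generation['eos_token_id']}")
    if pad_id != generation["pad_token_id"]:
        problems.append(f"pad token id {pad_id} != {generation['pad_token_id']}")
    return problems


print(check_special_token_ids(ADDED_TOKENS, SPECIAL_TOKENS, GENERATION) or "consistent")
```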

|

tokenizer.json

ADDED

|

@@ -0,0 +1,3 @@

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9c5ae00e602b8860cbd784ba82a8aa14e8feecec692e7076590d014d7b7fdafa

|

| 3 |

+

size 11421896
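The three lines above are a Git LFS pointer, not the tokenizer itself: the real `tokenizer.json` is stored in LFS and identified by its SHA-256 digest and byte size. A minimal sketch of reading such a pointer follows; the function name and the returned field names are hypothetical.

```python
def parse_lfs_pointer(text):
    """Parse a Git LFS pointer file into its version, hash, and size fields."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


pointer = parse_lfs_pointer(
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:9c5ae00e602b8860cbd784ba82a8aa14e8feecec692e7076590d014d7b7fdafa\n"
    "size 11421896\n"
)
print(f"{pointer['size_bytes'] / 2**20:.1f} MiB, {pointer['hash_algorithm']}")
```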

|

tokenizer_config.json

ADDED

|

@@ -0,0 +1,208 @@

|

|

| 1 |

+

{

|

| 2 |

+

"add_bos_token": false,

|

| 3 |

+

"add_prefix_space": false,

|

| 4 |

+

"added_tokens_decoder": {

|

| 5 |

+

"151643": {

|

| 6 |

+

"content": "<|endoftext|>",

|

| 7 |

+

"lstrip": false,

|

| 8 |

+

"normalized": false,

|

| 9 |

+

"rstrip": false,

|

| 10 |

+

"single_word": false,

|

| 11 |

+

"special": true

|

| 12 |

+

},

|

| 13 |

+

"151644": {

|

| 14 |

+

"content": "<|im_start|>",

|

| 15 |

+

"lstrip": false,

|

| 16 |

+

"normalized": false,

|

| 17 |

+

"rstrip": false,

|

| 18 |

+

"single_word": false,

|

| 19 |

+

"special": true

|

| 20 |

+

},

|

| 21 |

+

"151645": {

|

| 22 |

+

"content": "<|im_end|>",

|

| 23 |

+

"lstrip": false,

|

| 24 |

+

"normalized": false,

|

| 25 |

+

"rstrip": false,

|

| 26 |

+

"single_word": false,

|

| 27 |

+

"special": true

|

| 28 |

+

},

|

| 29 |

+

"151646": {

|

| 30 |

+

"content": "<|object_ref_start|>",

|

| 31 |

+

"lstrip": false,

|

| 32 |

+

"normalized": false,

|

| 33 |

+

"rstrip": false,

|

| 34 |

+

"single_word": false,

|

| 35 |

+

"special": true

|

| 36 |

+

},

|

| 37 |

+

"151647": {

|

| 38 |

+

"content": "<|object_ref_end|>",

|

| 39 |

+

"lstrip": false,

|

| 40 |

+

"normalized": false,

|

| 41 |

+

"rstrip": false,

|

| 42 |

+

"single_word": false,

|

| 43 |

+

"special": true

|

| 44 |

+

},

|

| 45 |

+

"151648": {

|

| 46 |

+

"content": "<|box_start|>",

|

| 47 |

+

"lstrip": false,

|

| 48 |

+

"normalized": false,

|

| 49 |

+

"rstrip": false,

|

| 50 |

+

"single_word": false,

|

| 51 |

+

"special": true

|

| 52 |

+

},

|

| 53 |

+

"151649": {

|

| 54 |

+

"content": "<|box_end|>",

|

| 55 |

+

"lstrip": false,

|

| 56 |

+

"normalized": false,

|

| 57 |

+

"rstrip": false,

|

| 58 |

+

"single_word": false,

|

| 59 |

+

"special": true

|

| 60 |

+

},

|

| 61 |

+

"151650": {

|

| 62 |

+

"content": "<|quad_start|>",

|

| 63 |

+

"lstrip": false,

|

| 64 |

+

"normalized": false,

|

| 65 |

+

"rstrip": false,

|

| 66 |

+

"single_word": false,

|

| 67 |

+

"special": true

|

| 68 |

+

},

|

| 69 |

+

"151651": {

|

| 70 |

+

"content": "<|quad_end|>",

|

| 71 |

+

"lstrip": false,

|

| 72 |

+

"normalized": false,

|

| 73 |

+

"rstrip": false,

|

| 74 |

+

"single_word": false,

|

| 75 |

+

"special": true

|

| 76 |

+

},

|

| 77 |

+

"151652": {

|

| 78 |

+

"content": "<|vision_start|>",

|

| 79 |

+

"lstrip": false,

|

| 80 |

+

"normalized": false,

|

| 81 |

+

"rstrip": false,

|

| 82 |

+

"single_word": false,

|

| 83 |

+

"special": true

|

| 84 |

+

},

|

| 85 |

+

"151653": {

|

| 86 |

+

"content": "<|vision_end|>",

|

| 87 |

+

"lstrip": false,

|

| 88 |

+

"normalized": false,

|

| 89 |

+

"rstrip": false,

|

| 90 |

+

"single_word": false,

|

| 91 |

+

"special": true

|

| 92 |

+

},

|

| 93 |

+

"151654": {

|

| 94 |

+

"content": "<|vision_pad|>",

|

| 95 |

+

"lstrip": false,

|

| 96 |

+

"normalized": false,

|

| 97 |

+

"rstrip": false,

|

| 98 |

+

"single_word": false,

|

| 99 |

+

"special": true

|

| 100 |

+

},

|

| 101 |

+

"151655": {

|

| 102 |

+

"content": "<|image_pad|>",

|

| 103 |

+

"lstrip": false,

|

| 104 |

+

"normalized": false,

|

| 105 |

+

"rstrip": false,

|

| 106 |

+

"single_word": false,

|

| 107 |

+

"special": true

|

| 108 |

+

},

|

| 109 |

+

"151656": {

|

| 110 |

+

"content": "<|video_pad|>",

|

| 111 |

+

"lstrip": false,

|

| 112 |

+

"normalized": false,

|

| 113 |

+

"rstrip": false,

|

| 114 |

+

"single_word": false,

|

| 115 |

+

"special": true

|

| 116 |

+

},

|

| 117 |

+

"151657": {

|

| 118 |

+

"content": "<tool_call>",

|

| 119 |

+

"lstrip": false,

|

| 120 |

+

"normalized": false,

|

| 121 |

+

"rstrip": false,

|

| 122 |

+

"single_word": false,

|

| 123 |

+

"special": false

|

| 124 |

+

},

|

| 125 |

+

"151658": {

|

| 126 |

+

"content": "</tool_call>",

|

| 127 |

+

"lstrip": false,

|

| 128 |

+

"normalized": false,

|

| 129 |

+

"rstrip": false,

|

| 130 |

+

"single_word": false,

|

| 131 |

+

"special": false

|

| 132 |

+

},

|

| 133 |

+

"151659": {

|

| 134 |

+

"content": "<|fim_prefix|>",

|

| 135 |

+

"lstrip": false,

|

| 136 |

+

"normalized": false,

|

| 137 |

+

"rstrip": false,

|

| 138 |

+

"single_word": false,

|

| 139 |

+

"special": false

|

| 140 |

+

},

|

| 141 |

+

"151660": {

|

| 142 |

+

"content": "<|fim_middle|>",

|

| 143 |

+

"lstrip": false,

|

| 144 |

+

"normalized": false,

|

| 145 |

+

"rstrip": false,

|

| 146 |

+

"single_word": false,

|

| 147 |

+

"special": false

|

| 148 |

+

},

|

| 149 |

+

"151661": {

|

| 150 |

+

"content": "<|fim_suffix|>",

|

| 151 |

+

"lstrip": false,

|

| 152 |

+

"normalized": false,

|

| 153 |

+

"rstrip": false,

|

| 154 |

+

"single_word": false,

|

| 155 |

+

"special": false

|

| 156 |

+

},

|

| 157 |

+

"151662": {

|

| 158 |

+

"content": "<|fim_pad|>",

|

| 159 |

+

"lstrip": false,

|

| 160 |

+

"normalized": false,

|

| 161 |

+

"rstrip": false,

|

| 162 |

+

"single_word": false,

|

| 163 |

+

"special": false

|

| 164 |

+

},

|

| 165 |

+

"151663": {

|

| 166 |

+

"content": "<|repo_name|>",

|

| 167 |

+

"lstrip": false,

|

| 168 |

+

"normalized": false,

|

| 169 |

+

"rstrip": false,

|

| 170 |

+

"single_word": false,

|

| 171 |

+

"special": false

|

| 172 |

+

},

|

| 173 |

+

"151664": {

|

| 174 |

+

"content": "<|file_sep|>",

|

| 175 |

+

"lstrip": false,

|

| 176 |

+

"normalized": false,

|

| 177 |

+

"rstrip": false,

|

| 178 |

+

"single_word": false,

|

| 179 |

+

"special": false

|

| 180 |

+

}

|

| 181 |

+

},

|

| 182 |

+

"additional_special_tokens": [

|

| 183 |

+

"<|im_start|>",

|

| 184 |

+

"<|im_end|>",

|

| 185 |

+

"<|object_ref_start|>",

|

| 186 |

+

"<|object_ref_end|>",

|

| 187 |

+

"<|box_start|>",

|

| 188 |

+

"<|box_end|>",

|

| 189 |

+

"<|quad_start|>",

|

| 190 |

+

"<|quad_end|>",

|

| 191 |

+

"<|vision_start|>",

|

| 192 |

+

"<|vision_end|>",

|

| 193 |

+

"<|vision_pad|>",

|

| 194 |

+

"<|image_pad|>",

|

| 195 |

+

"<|video_pad|>"

|

| 196 |

+

],

|

| 197 |

+

"bos_token": null,

|

| 198 |

+

"chat_template": "{%- if tools %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0]['role'] == 'system' %}\n {{- messages[0]['content'] }}\n {%- else %}\n {{- 'You are Qwen, created by Alibaba Cloud. You are a helpful assistant.' }}\n {%- endif %}\n {{- \"\\n\\n# Tools\\n\\nYou may call one or more functions to assist with the user query.\\n\\nYou are provided with function signatures within <tools></tools> XML tags:\\n<tools>\" }}\n {%- for tool in tools %}\n {{- \"\\n\" }}\n {{- tool | tojson }}\n {%- endfor %}\n {{- \"\\n</tools>\\n\\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\\n<tool_call>\\n{\\\"name\\\": <function-name>, \\\"arguments\\\": <args-json-object>}\\n</tool_call><|im_end|>\\n\" }}\n{%- else %}\n {%- if messages[0]['role'] == 'system' %}\n {{- '<|im_start|>system\\n' + messages[0]['content'] + '<|im_end|>\\n' }}\n {%- else %}\n {{- '<|im_start|>system\\nYou are Qwen, created by Alibaba Cloud. You are a helpful assistant.<|im_end|>\\n' }}\n {%- endif %}\n{%- endif %}\n{%- for message in messages %}\n {%- if (message.role == \"user\") or (message.role == \"system\" and not loop.first) or (message.role == \"assistant\" and not message.tool_calls) %}\n {{- '<|im_start|>' + message.role + '\\n' + message.content + '<|im_end|>' + '\\n' }}\n {%- elif message.role == \"assistant\" %}\n {{- '<|im_start|>' + message.role }}\n {%- if message.content %}\n {{- '\\n' + message.content }}\n {%- endif %}\n {%- for tool_call in message.tool_calls %}\n {%- if tool_call.function is defined %}\n {%- set tool_call = tool_call.function %}\n {%- endif %}\n {{- '\\n<tool_call>\\n{\"name\": \"' }}\n {{- tool_call.name }}\n {{- '\", \"arguments\": ' }}\n {{- tool_call.arguments | tojson }}\n {{- '}\\n</tool_call>' }}\n {%- endfor %}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"tool\" %}\n {%- if (loop.index0 == 0) or (messages[loop.index0 - 1].role != \"tool\") %}\n {{- '<|im_start|>user' }}\n {%- endif %}\n {{- '\\n<tool_response>\\n' }}\n {{- message.content }}\n {{- '\\n</tool_response>' }}\n {%- if loop.last or (messages[loop.index0 + 1].role != \"tool\") %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- if add_generation_prompt %}\n {{- '<|im_start|>assistant\\n' }}\n{%- endif %}\n",

|

| 199 |

+

"clean_up_tokenization_spaces": false,

|

| 200 |

+

"eos_token": "<|im_end|>",

|

| 201 |

+

"errors": "replace",

|

| 202 |

+

"extra_special_tokens": {},

|

| 203 |

+

"model_max_length": 32768,

|

| 204 |

+

"pad_token": "<|endoftext|>",

|

| 205 |

+

"split_special_tokens": false,

|

| 206 |

+

"tokenizer_class": "Qwen2Tokenizer",

|

| 207 |

+

"unk_token": null

|

| 208 |

+

}

|

vocab.json

ADDED

|

The diff for this file is too large to render.

|

|

|