Upload folder using huggingface_hub

- .gitattributes +1 -0
- README.md +33 -0
- gemma-3-1b-it-qat-abliterated.q2_k.gguf +3 -0

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+gemma-3-1b-it-qat-abliterated.q2_k.gguf filter=lfs diff=lfs merge=lfs -text

README.md

ADDED

@@ -0,0 +1,33 @@

---
license: gemma
library_name: transformers
pipeline_tag: image-text-to-text
base_model: google/gemma-3-1b-it-qat-q4_0-unquantized
tags:
- autoquant
- gguf
---

# 💎 Gemma 3 1B IT QAT Abliterated

<center>Gemma 3 QAT Abliterated <a href="https://huggingface.co/mlabonne/gemma-3-1b-it-qat-abliterated">1B</a> • <a href="https://huggingface.co/mlabonne/gemma-3-4b-it-qat-abliterated">4B</a> • <a href="https://huggingface.co/mlabonne/gemma-3-12b-it-qat-abliterated">12B</a> • <a href="https://huggingface.co/mlabonne/gemma-3-27b-it-qat-abliterated">27B</a></center>

This is an uncensored version of [google/gemma-3-1b-it-qat-q4_0-unquantized](https://huggingface.co/google/gemma-3-1b-it-qat-q4_0-unquantized) created with a new abliteration technique.
See [this article](https://huggingface.co/blog/mlabonne/abliteration) to learn more about abliteration.

This is a new, improved version that targets refusals with enhanced accuracy.

I recommend using these generation parameters: `temperature=1.0`, `top_k=64`, `top_p=0.95`.
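To make the three parameters concrete, here is a minimal pure-Python sketch of how temperature, top-k, and top-p filtering are commonly combined when sampling a token. The function name `sample_token` and the order of the filters are assumptions for illustration; actual inference runtimes may order or implement these steps differently.

```python
import math
import random

def sample_token(logits, temperature=1.0, top_k=64, top_p=0.95, rng=random):
    """Sample one token index from raw logits (illustrative sketch only)."""
    # Temperature rescales the logits; 1.0 leaves the distribution unchanged.
    scaled = [x / temperature for x in logits]
    # Softmax, subtracting the maximum for numerical stability.
    peak = max(scaled)
    weights = [math.exp(x - peak) for x in scaled]
    total = sum(weights)
    probs = [w / total for w in weights]
    # top_k: keep only the k most likely tokens.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    # top_p: keep the smallest prefix whose cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    # random.choices renormalizes the surviving probabilities.
    return rng.choices(kept, weights=[probs[i] for i in kept], k=1)[0]
```

With the recommended values, at most 64 candidates survive the top-k step, and the nucleus step then trims the tail until 95% of the probability mass is covered.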

|

| 22 |

+

|

| 23 |

+

## ✂️ Abliteration

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

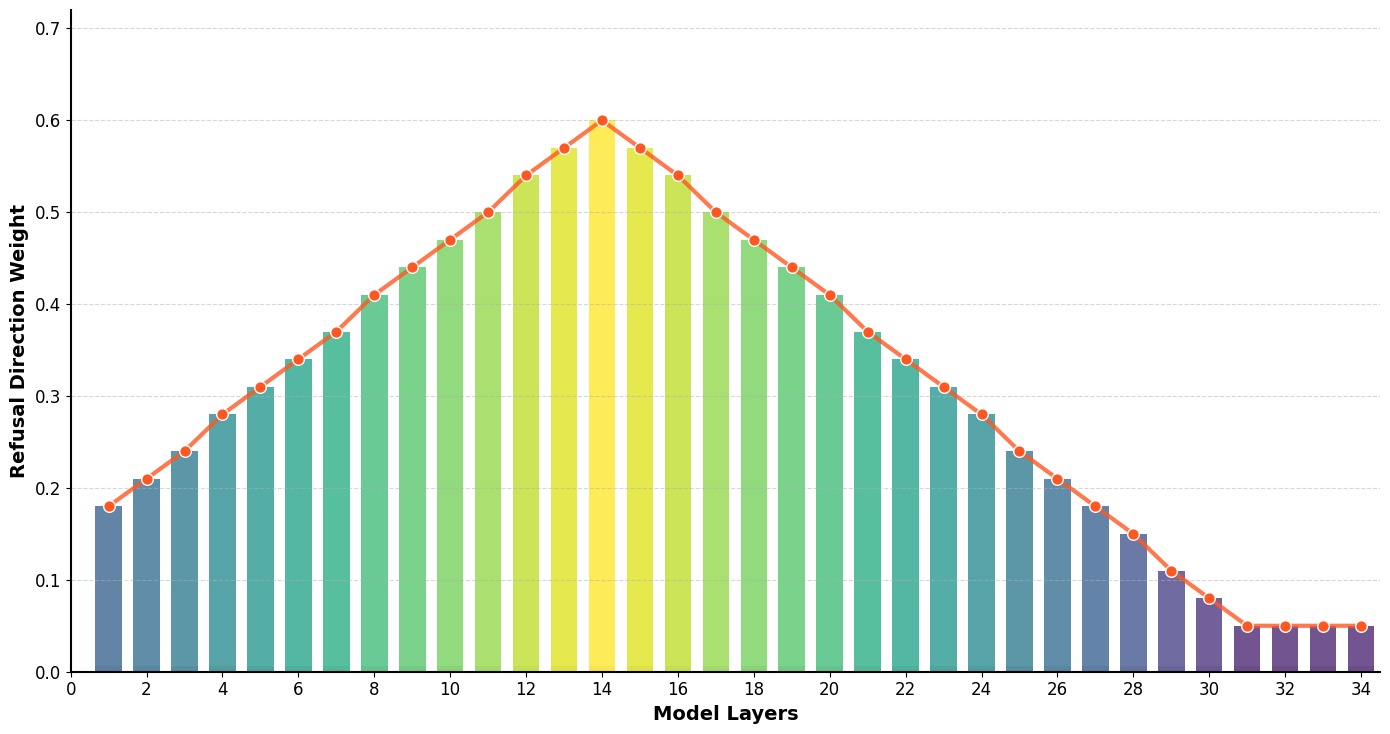

The refusal direction is computed by comparing the residual streams between target (harmful) and baseline (harmless) samples.


The hidden states of target modules (e.g., o_proj) are orthogonalized to subtract this refusal direction with a given weight factor.


These weight factors follow a normal distribution with a certain spread and peak layer.


Modules can be iteratively orthogonalized in batches, or the refusal direction can be accumulated to save memory.
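The steps above can be sketched in plain Python on small lists. This is an illustration under stated assumptions, not the code used for this model: the refusal direction is taken as the normalized difference of mean activations, the layer weights use an unnormalized Gaussian shape, and the names `refusal_direction`, `layer_weight`, and `orthogonalize` are hypothetical.

```python
import math

def mean_vector(rows):
    """Column-wise mean of a list of equal-length vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def refusal_direction(harmful_acts, harmless_acts):
    """Unit vector pointing from the mean harmless to the mean harmful activation."""
    diff = [a - b for a, b in zip(mean_vector(harmful_acts), mean_vector(harmless_acts))]
    norm = math.sqrt(sum(x * x for x in diff))
    return [x / norm for x in diff]

def layer_weight(layer, peak_layer, spread, max_weight=1.0):
    """Gaussian-shaped weight factor, largest at peak_layer (assumed shape)."""
    return max_weight * math.exp(-((layer - peak_layer) ** 2) / (2 * spread ** 2))

def orthogonalize(weight, direction, factor):
    """Return W - factor * r r^T W for W of shape (d_model, d_in).

    With factor=1.0 the module can no longer write along the direction r.
    """
    rows, cols = len(weight), len(weight[0])
    # r^T W: how strongly each input column writes along the refusal direction.
    proj = [sum(direction[i] * weight[i][j] for i in range(rows)) for j in range(cols)]
    return [[weight[i][j] - factor * direction[i] * proj[j] for j in range(cols)]
            for i in range(rows)]
```

In this sketch, each layer's target module would be passed through `orthogonalize` with `factor=layer_weight(layer, peak_layer, spread)`, so layers near the peak are modified most and distant layers are left almost unchanged.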


Finally, I used a hybrid evaluation with a dedicated test set to calculate the acceptance rate. This uses both a dictionary approach and [NousResearch/Minos-v1](https://huggingface.co/NousResearch/Minos-v1).


The goal is to obtain an acceptance rate >90% and still produce coherent outputs.
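As a rough illustration of the dictionary half of such an evaluation, the sketch below counts a response as accepted when it contains no refusal marker. The marker list, the function names, and the way an optional classifier is combined with the dictionary (a response is rejected if either flags it) are assumptions; the README does not specify them.

```python
# Hypothetical refusal markers; the actual dictionary is not given in the README.
REFUSAL_MARKERS = ("i cannot", "i can't", "i won't", "i'm sorry", "as an ai")

def is_refusal(response, markers=REFUSAL_MARKERS):
    """Case-insensitive substring check against the marker list."""
    text = response.lower()
    return any(marker in text for marker in markers)

def acceptance_rate(responses, classifier=None):
    """Fraction of responses flagged by neither the dictionary nor the classifier.

    classifier is an optional callable returning True for a refusal; it stands
    in for a learned refusal detector and is an assumption of this sketch.
    """
    accepted = 0
    for response in responses:
        flagged = is_refusal(response) or (classifier is not None and classifier(response))
        if not flagged:
            accepted += 1
    return accepted / len(responses)
```

Under this definition, the stated goal corresponds to `acceptance_rate(responses) > 0.9` on the test set, checked alongside a separate judgment of output coherence.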


gemma-3-1b-it-qat-abliterated.q2_k.gguf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1


oid sha256:7ecebfa73858edba7564d1ba0f397c3c736e4a8221b84d114fcd6cbf4c3b6c8d


size 689814592
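The three lines above form a Git LFS pointer: the repository stores only the spec version, the SHA-256 digest, and the byte size, while the GGUF file itself lives in LFS storage. A minimal sketch of checking a downloaded file against this pointer follows; the helper names `parse_lfs_pointer` and `matches_pointer` are hypothetical.

```python
import hashlib

POINTER = """version https://git-lfs.github.com/spec/v1
oid sha256:7ecebfa73858edba7564d1ba0f397c3c736e4a8221b84d114fcd6cbf4c3b6c8d
size 689814592
"""

def parse_lfs_pointer(text):
    """Split a pointer file into its version, hash algorithm, digest, and size."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {"version": fields["version"], "algo": algo,
            "digest": digest, "size": int(fields["size"])}

def matches_pointer(path, pointer, chunk_size=1 << 20):
    """True if the file at path has the size and digest recorded in the pointer."""
    hasher = hashlib.new(pointer["algo"])
    size = 0
    with open(path, "rb") as fh:
        # Read in chunks so a large model file is never fully loaded into memory.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            hasher.update(chunk)
            size += len(chunk)
    return size == pointer["size"] and hasher.hexdigest() == pointer["digest"]
```

The recorded size of 689814592 bytes is roughly 658 MiB, so a download that is much smaller than that is most likely the pointer file itself rather than the model.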
