---

license: apache-2.0

datasets:

- remyxai/SpaceThinker

base_model:

- UCSC-VLAA/VLAA-Thinker-Qwen2.5VL-3B

tags:

- remyx

- qwen2.5-vl

- spatial-reasoning

- multimodal

- vlm

- vqasynth

- thinking

- reasoning

- test-time-compute

- robotics

- embodied-ai

- quantitative-spatial-reasoning

- distance-estimation

language:

- en

pipeline_tag: image-text-to-text

library_name: transformers

model-index:

- name: SpaceThinker-Qwen2.5VL-3B

results:

- task:

type: visual-question-answering

name: Spatial Reasoning

dataset:

name: Q-Spatial-Bench

type: custom

metrics:

- type: success_rate

value: 0.3226

name: Overall Success Rate

results_by_distance_bucket:

- name: 0-10cm

count: 7

successes: 3

success_rate: 0.4286

- name: 10-30cm

count: 28

successes: 5

success_rate: 0.1786

- name: 30-60cm

count: 16

successes: 8

success_rate: 0.5

- name: 60-100cm

count: 17

successes: 9

success_rate: 0.5294

- name: 100-200cm

count: 19

successes: 4

success_rate: 0.2105

- name: 200cm+

count: 6

successes: 1

success_rate: 0.1667

---

[](https://remyx.ai/?model_id=SpaceThinker-Qwen2.5VL-3B&sha256=abc123def4567890abc123def4567890abc123def4567890abc123def4567890)

# SpaceThinker-Qwen2.5VL-3B

## 📚 Contents

- [🚀 Try It Live](#try-the-spacethinker-space)

- [🧠 Model Overview](#model-overview)

- [📏 Quantitative Spatial Reasoning](#spatial-reasoning-capabilities)

- [🔍 View Examples](#examples-of-spacethinker)

- [📊 Evaluation & Benchmarks](#model-evaluation)

- [🏃♀️ Running SpaceThinker](#running-spacethinker)

- [🏋️♂️ Training Configuration](#training-spacethinker)

- [📂 Dataset Info](#spacethinker-dataset)

- [⚠️ Limitations](#limitations)

- [📜 Citation](#citation)

## Try the SpaceThinker Space

[](https://huggingface.co/spaces/remyxai/SpaceThinker-Qwen2.5VL-3B)

# Model Overview

**SpaceThinker-Qwen2.5VL-3B** is a thinking/reasoning multimodal/vision-language model (VLM) trained to enhance spatial reasoning with test-time compute by fine-tuning

`UCSC-VLAA/VLAA-Thinker-Qwen2.5VL-3B` on synthetic reasoning traces generated by the [VQASynth](https://huggingface.co/datasets/remyxai/SpaceThinker) pipeline.

- **Model Type:** Multimodal, Vision-Language Model

- **Architecture**: `Qwen2.5-VL-3B`

- **Model Size:** 3.75B parameters (FP16)

- **Finetuned from:** `UCSC-VLAA/VLAA-Thinker-Qwen2.5VL-3B`

- **Finetune Strategy:** LoRA (Low-Rank Adaptation)

- **License:** Apache-2.0

Check out the [SpaceThinker collection](https://huggingface.co/collections/remyxai/spacethinker-68014f174cd049ca5acca4e5)

## Spatial Reasoning Capabilities

Strong quantitative spatial reasoning is critical for embodied AI applications demanding the ability to plan and navigate a 3D space, such as robotics and drones.

**SpaceThinker** improves capabilities using test-time compute, trained with samples which ground the final response on a consistent explanation of a collection of scene observations.

- Enhanced Quantitative Spatial Reasoning (e.g., distances, sizes)

- Grounded object relations (e.g., left-of, above, inside)

### Examples of SpaceThinker

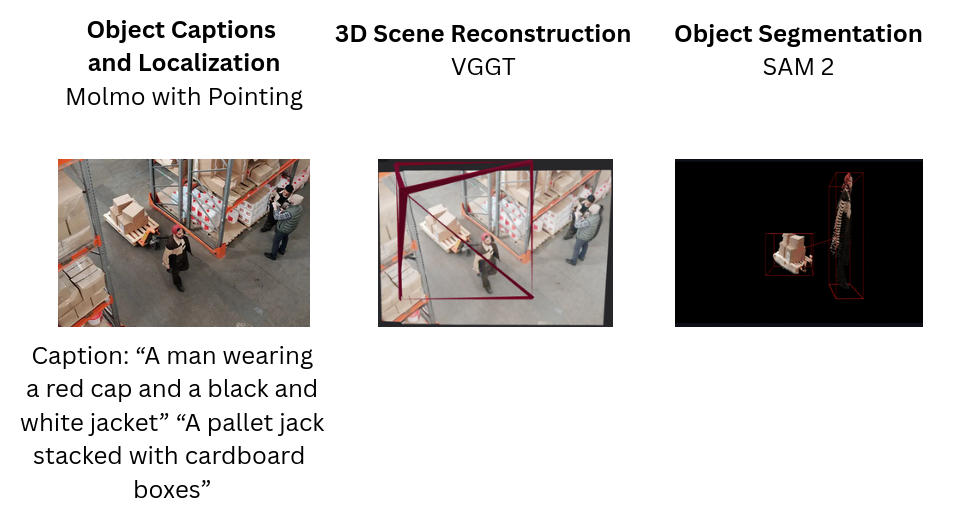

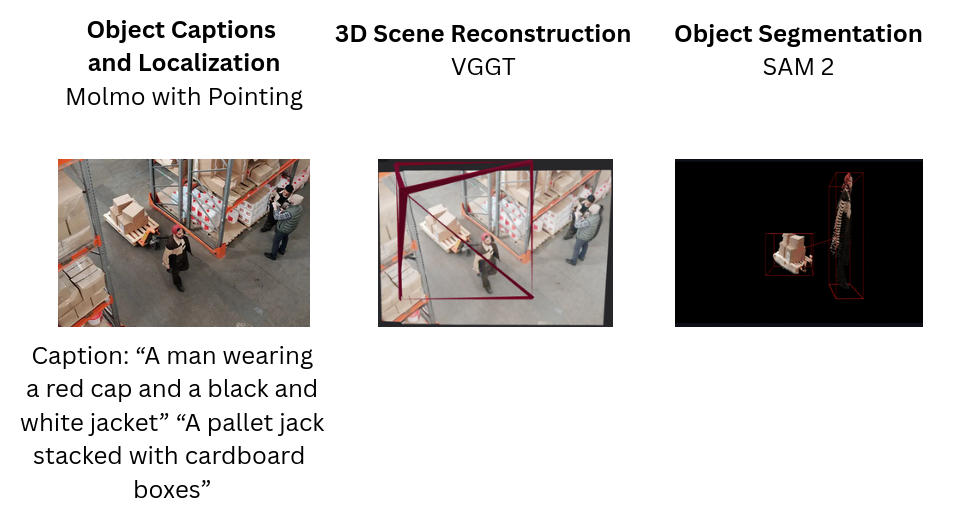

Consider the following sample images/prompts for visual QA of **SpaceThinker-Qwen2.5VL-3B**

|  |

|  |

|  |

|----------|-------------|--------|

| **Prompt:** How far is the man in the red hat from the pallet of boxes in feet? | **Prompt:** How far is the Goal from the penalty taker in this image? | **Prompt:** Approximately how many meters apart are the chair and bookshelf? |

| **Reasoning:** To determine how far the man in the red hat is from the pallet of boxes, I need to consider the spatial arrangement in the warehouse. The man is walking on the floor, and there's a visible gap between him and the pallet. First, I'll estimate the size of the pallet. A standard pallet is typically around 4 feet wide and 8 feet long. Given that the man is standing near the edge of the pallet, he might be about 2–3 feet away from the front edge of the pallet. Next, I'll consider the distance from the pallet to the man. The man appears to be walking along the aisle, which seems to be about 6–7 feet wide. If he is standing at the edge of the pallet, he would likely be about 2–3 feet from the front edge of the pallet. Combining these observations, the man in the red hat is approximately 2–3 feet from the pallet of boxes. | **Reasoning:** First, I observe the scene where a player in a red uniform stands near the goal. The goal itself is a standard soccer goalpost, which typically extends 2.5 meters beyond the line of the goalposts. The player appears to be standing close to the goal, but not directly at the goal line. To estimate the distance, I consider that the player's position relative to the goal suggests he is likely standing closer than 2.5 meters away. Given the typical setup of a penalty kick scenario, the player would be positioned further back from the goal than the goal itself. By visual estimation, the player seems to be standing approximately 10–15 meters from the goal. This range aligns with the common distance for a penalty kick, considering the player's stance and the goal's structure. Thus, the distance from the goal to the penalty taker is likely around 10–15 meters. | **Reasoning:** To determine the distance between the chair and the bookshelf, I'll consider the typical dimensions of these objects. A standard office chair is around 60–70 cm tall, and a bookshelf can be anywhere from 1.2 to 1.8 meters tall. Given that the chair is likely placed on a desk or floor level, the height difference between the chair and the top of the bookshelf would be approximately 1 meter. Assuming the bookshelf is at least 1 meter high, and the chair is at ground level, the vertical distance between them is indeed 1 meter. The horizontal distance might vary depending on the layout, but given the room's dimensions and the placement of the chair relative to the bookshelf, the total distance between the chair and the top of the bookshelf is likely around 1 meter. Therefore, the chair and bookshelf are approximately 1 meter apart. |

| **Answer:** 2–3 feet | **Answer:** 10–15 meters | **Answer:** 1 meter |

> **SpaceThinker** demonstrates grounded, quantitative spatial reasoning—inferring accurate distances, interpreting 3D scene context, and formatting open-ended answers precisely

> by integrating visual cues, real-world object priors, and human-centric spatial logic.

Read more about using test-time compute [here](https://huggingface.co/spaces/open-r1/README/discussions/10) for enhanced multimodal quantitative spatial reasoning.

## Running SpaceThinker

Try the **SpaceThinker** Space

[](https://huggingface.co/spaces/remyxai/SpaceThinker-Qwen2.5VL-3B)

To run locally with **llama.cpp**, install and build this [branch](https://github.com/HimariO/llama.cpp.qwen2.5vl/tree/qwen25-vl) and download the [.gguf weights here](https://huggingface.co/remyxai/SpaceThinker-Qwen2.5VL-3B/tree/main/gguf)

```bash

./llama-qwen2vl-cli -m spacethinker-qwen2.5VL-3B-F16.gguf

--mmproj spacethinker-qwen2.5vl-3b-vision.gguf

--image images/example_1.jpg --threads 24 -ngl 9

-p "Does the man in blue shirt working have a greater \\

height compared to the wooden pallet with boxes on floor?"

```

Run using **llama.cpp in colab**

[](https://colab.research.google.com/drive/1_ShhJAqnac8L4N9o1YNdsxCksSLJCrU7?usp=sharing)

Or run locally using **Transformers**

```python

import torch

from PIL import Image

from transformers import Qwen2_5_VLForConditionalGeneration, AutoProcessor

import requests

from io import BytesIO

# Configuration

model_id = "remyxai/SpaceThinker-Qwen2.5VL-3B"

image_path = "images/example_1.jpg" # or local path

prompt = "What can you infer from this image about the environment?"

system_message = (

"You are VL-Thinking 🤔, a helpful assistant with excellent reasoning ability. "

"You should first think about the reasoning process and then provide the answer. "

"Use ... and ... tags."

)

# Load model and processor

model = Qwen2_5_VLForConditionalGeneration.from_pretrained(

model_id, device_map="auto", torch_dtype=torch.bfloat16

)

processor = AutoProcessor.from_pretrained(model_id)

# Load and preprocess image

if image_path.startswith("http"):

image = Image.open(BytesIO(requests.get(image_path).content)).convert("RGB")

else:

image = Image.open(image_path).convert("RGB")

if image.width > 512:

ratio = image.height / image.width

image = image.resize((512, int(512 * ratio)), Image.Resampling.LANCZOS)

# Format input

chat = [

{"role": "system", "content": [{"type": "text", "text": system_message}]},

{"role": "user", "content": [{"type": "image", "image": image},

{"type": "text", "text": prompt}]}

]

text_input = processor.apply_chat_template(chat, tokenize=False,

add_generation_prompt=True)

# Tokenize

inputs = processor(text=[text_input], images=[image],

return_tensors="pt").to("cuda")

# Generate response

generated_ids = model.generate(**inputs, max_new_tokens=1024)

output = processor.batch_decode(generated_ids, skip_special_tokens=True)[0]

print("Response:\n", output)

```

## SpaceThinker Dataset

The **SpaceThinker** dataset includes over 12K samples synthesized using VQASynth on a subset of images in the localized narratives split of [the cauldron](https://huggingface.co/datasets/HuggingFaceM4/the_cauldron).

**SpaceThinker** is formatted similar to the [Llama-Nemotron-Post-Training-Dataset-v1](https://huggingface.co/datasets/nvidia/Llama-Nemotron-Post-Training-Dataset) to toggle reasoning.

The model builds upon the ideas from [SpatialVLM (Chen et al., 2024)](https://spatial-vlm.github.io/), introducing synthetic reasoning traces grounded on a 3D scene reconstruction pipeline using Molmo, VGGT, SAM2.

**Dataset Summary**

- ~12K synthetic spatial reasoning traces

- Question types: spatial relations (distances (units), above, left-of, contains, closest to)

- Format: image (RGB) + question + answer with reasoning traces

- Dataset: [remyxai/SpaceThinker](https://huggingface.co/datasets/remyxai/SpaceThinker)

- Code: [Synthetize Spatial Reasoning Traces with VQASynth](https://github.com/remyxai/VQASynth)

## Training SpaceThinker

**PEFT Configuration**

- Architecture: Qwen2.5-VL-3B

- Base model: UCSC-VLAA/VLAA-Thinker-Qwen2.5VL-3B

- Method: LoRA finetuning (PEFT)

- LoRA Alpha: 256

- LoRA Rank: 128

- Target Modules: q_proj, v_proj

- Optimizer: AdamW (lr=2e-5), batch size = 1, epochs = 3

- Max input length: 1024 tokens

Scripts for LoRA SFT available at [trl](https://github.com/huggingface/trl/blob/main/examples/scripts/sft_vlm.py), wandb logs available [here](https://wandb.ai/smellslikeml/qwen2.5-3b-instruct-trl-sft-spacethinker).

## Model Evaluation

[](https://colab.research.google.com/drive/1NH2n-PRJJOiu_md8agyYCnxEZDGO5ICJ?usp=sharing)

The [Q-Spatial-Bench dataset](https://huggingface.co/datasets/andrewliao11/Q-Spatial-Bench) includes hundreds of

VQA samples designed to evaluate quantitative spatial reasoning of VLMs with high-precision.

Using the Colab notebook we evaluate **SpaceThinker** on the **QSpatial++** split under two conditions:

- **Default System Prompt**:

- Prompts completed: **93 / 101**

- Correct answers: **30**

- **Accuracy**: **32.26%**

- **Prompting for step-by-step reasoning** using the [spatial prompt](https://github.com/andrewliao11/Q-Spatial-Bench-code/blob/main/prompt_templates/spatial_prompt_steps.txt) from **Q-Spatial-Bench**:

- Correct answers: **53**

- **Accuracy**: **52.48%**

Using the spatial prompt improves the number of correct answers and overall accuracy rate while improving the task completion rate.

Updating the comparison from **Q-Spatial-Bench** [project page](https://andrewliao11.github.io/spatial_prompt/), the **SpaceThinker-Qwen2.5-VL-3B** VLM using

the SpatialPrompt for step-by-step reasoning performs on par with larger, closed, frontier API providers.

## Limitations

- Performance may degrade in cluttered environments or camera perspective.

- This model was fine-tuned using synthetic reasoning over an internet image dataset.

- Multimodal biases inherent to the base model (Qwen2.5-VL) may persist.

- Not intended for use in safety-critical or legal decision-making.

> Users are encouraged to evaluate outputs critically and consider fine-tuning for domain-specific safety and performance. Distances estimated using autoregressive

> transformers may help in higher-order reasoning for planning and behavior but may not be suitable replacements for measurements taken with high-precision sensors,

> calibrated stereo vision systems, or specialist monocular depth estimation models capable of more accurate, pixel-wise predictions and real-time performance.

## Citation

```

@article{chen2024spatialvlm,

title = {SpatialVLM: Endowing Vision-Language Models with Spatial Reasoning Capabilities},

author = {Chen, Boyuan and Xu, Zhuo and Kirmani, Sean and Ichter, Brian and Driess, Danny and Florence, Pete and Sadigh, Dorsa and Guibas, Leonidas and Xia, Fei},

journal = {arXiv preprint arXiv:2401.12168},

year = {2024},

url = {https://arxiv.org/abs/2401.12168},

}

@misc{qwen2.5-VL,

title = {Qwen2.5-VL},

url = {https://qwenlm.github.io/blog/qwen2.5-vl/},

author = {Qwen Team},

month = {January},

year = {2025}

}

@misc{vl-thinking2025,

title={SFT or RL? An Early Investigation into Training R1-Like Reasoning Large Vision-Language Models },

author={Hardy Chen and Haoqin Tu and Fali Wang and Hui Liu and Xianfeng Tang and Xinya Du and Yuyin Zhou and Cihang Xie},

year = {2025},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/UCSC-VLAA/VLAA-Thinking}},

}

@inproceedings{

liaos2024reasoning,

title={Reasoning Paths with Reference Objects Elicit Quantitative Spatial Reasoning in Large Vision-Language Models},

author={Yuan-Hong Liao and Rafid Mahmood and Sanja Fidler and David Acuna},

booktitle={The 2024 Conference on Empirical Methods in Natural Language Processing},

year={2024},

url={https://arxiv.org/abs/2409.09788},

}

```

|

|----------|-------------|--------|

| **Prompt:** How far is the man in the red hat from the pallet of boxes in feet? | **Prompt:** How far is the Goal from the penalty taker in this image? | **Prompt:** Approximately how many meters apart are the chair and bookshelf? |

| **Reasoning:** To determine how far the man in the red hat is from the pallet of boxes, I need to consider the spatial arrangement in the warehouse. The man is walking on the floor, and there's a visible gap between him and the pallet. First, I'll estimate the size of the pallet. A standard pallet is typically around 4 feet wide and 8 feet long. Given that the man is standing near the edge of the pallet, he might be about 2–3 feet away from the front edge of the pallet. Next, I'll consider the distance from the pallet to the man. The man appears to be walking along the aisle, which seems to be about 6–7 feet wide. If he is standing at the edge of the pallet, he would likely be about 2–3 feet from the front edge of the pallet. Combining these observations, the man in the red hat is approximately 2–3 feet from the pallet of boxes. | **Reasoning:** First, I observe the scene where a player in a red uniform stands near the goal. The goal itself is a standard soccer goalpost, which typically extends 2.5 meters beyond the line of the goalposts. The player appears to be standing close to the goal, but not directly at the goal line. To estimate the distance, I consider that the player's position relative to the goal suggests he is likely standing closer than 2.5 meters away. Given the typical setup of a penalty kick scenario, the player would be positioned further back from the goal than the goal itself. By visual estimation, the player seems to be standing approximately 10–15 meters from the goal. This range aligns with the common distance for a penalty kick, considering the player's stance and the goal's structure. Thus, the distance from the goal to the penalty taker is likely around 10–15 meters. | **Reasoning:** To determine the distance between the chair and the bookshelf, I'll consider the typical dimensions of these objects. A standard office chair is around 60–70 cm tall, and a bookshelf can be anywhere from 1.2 to 1.8 meters tall. Given that the chair is likely placed on a desk or floor level, the height difference between the chair and the top of the bookshelf would be approximately 1 meter. Assuming the bookshelf is at least 1 meter high, and the chair is at ground level, the vertical distance between them is indeed 1 meter. The horizontal distance might vary depending on the layout, but given the room's dimensions and the placement of the chair relative to the bookshelf, the total distance between the chair and the top of the bookshelf is likely around 1 meter. Therefore, the chair and bookshelf are approximately 1 meter apart. |

| **Answer:** 2–3 feet | **Answer:** 10–15 meters | **Answer:** 1 meter |

> **SpaceThinker** demonstrates grounded, quantitative spatial reasoning—inferring accurate distances, interpreting 3D scene context, and formatting open-ended answers precisely

> by integrating visual cues, real-world object priors, and human-centric spatial logic.

Read more about using test-time compute [here](https://huggingface.co/spaces/open-r1/README/discussions/10) for enhanced multimodal quantitative spatial reasoning.

## Running SpaceThinker

Try the **SpaceThinker** Space

[](https://huggingface.co/spaces/remyxai/SpaceThinker-Qwen2.5VL-3B)

To run locally with **llama.cpp**, install and build this [branch](https://github.com/HimariO/llama.cpp.qwen2.5vl/tree/qwen25-vl) and download the [.gguf weights here](https://huggingface.co/remyxai/SpaceThinker-Qwen2.5VL-3B/tree/main/gguf)

```bash

./llama-qwen2vl-cli -m spacethinker-qwen2.5VL-3B-F16.gguf

--mmproj spacethinker-qwen2.5vl-3b-vision.gguf

--image images/example_1.jpg --threads 24 -ngl 9

-p "Does the man in blue shirt working have a greater \\

height compared to the wooden pallet with boxes on floor?"

```

Run using **llama.cpp in colab**

[](https://colab.research.google.com/drive/1_ShhJAqnac8L4N9o1YNdsxCksSLJCrU7?usp=sharing)

Or run locally using **Transformers**

```python

import torch

from PIL import Image

from transformers import Qwen2_5_VLForConditionalGeneration, AutoProcessor

import requests

from io import BytesIO

# Configuration

model_id = "remyxai/SpaceThinker-Qwen2.5VL-3B"

image_path = "images/example_1.jpg" # or local path

prompt = "What can you infer from this image about the environment?"

system_message = (

"You are VL-Thinking 🤔, a helpful assistant with excellent reasoning ability. "

"You should first think about the reasoning process and then provide the answer. "

"Use ... and ... tags."

)

# Load model and processor

model = Qwen2_5_VLForConditionalGeneration.from_pretrained(

model_id, device_map="auto", torch_dtype=torch.bfloat16

)

processor = AutoProcessor.from_pretrained(model_id)

# Load and preprocess image

if image_path.startswith("http"):

image = Image.open(BytesIO(requests.get(image_path).content)).convert("RGB")

else:

image = Image.open(image_path).convert("RGB")

if image.width > 512:

ratio = image.height / image.width

image = image.resize((512, int(512 * ratio)), Image.Resampling.LANCZOS)

# Format input

chat = [

{"role": "system", "content": [{"type": "text", "text": system_message}]},

{"role": "user", "content": [{"type": "image", "image": image},

{"type": "text", "text": prompt}]}

]

text_input = processor.apply_chat_template(chat, tokenize=False,

add_generation_prompt=True)

# Tokenize

inputs = processor(text=[text_input], images=[image],

return_tensors="pt").to("cuda")

# Generate response

generated_ids = model.generate(**inputs, max_new_tokens=1024)

output = processor.batch_decode(generated_ids, skip_special_tokens=True)[0]

print("Response:\n", output)

```

## SpaceThinker Dataset

The **SpaceThinker** dataset includes over 12K samples synthesized using VQASynth on a subset of images in the localized narratives split of [the cauldron](https://huggingface.co/datasets/HuggingFaceM4/the_cauldron).

**SpaceThinker** is formatted similar to the [Llama-Nemotron-Post-Training-Dataset-v1](https://huggingface.co/datasets/nvidia/Llama-Nemotron-Post-Training-Dataset) to toggle reasoning.

The model builds upon the ideas from [SpatialVLM (Chen et al., 2024)](https://spatial-vlm.github.io/), introducing synthetic reasoning traces grounded on a 3D scene reconstruction pipeline using Molmo, VGGT, SAM2.

**Dataset Summary**

- ~12K synthetic spatial reasoning traces

- Question types: spatial relations (distances (units), above, left-of, contains, closest to)

- Format: image (RGB) + question + answer with reasoning traces

- Dataset: [remyxai/SpaceThinker](https://huggingface.co/datasets/remyxai/SpaceThinker)

- Code: [Synthetize Spatial Reasoning Traces with VQASynth](https://github.com/remyxai/VQASynth)

## Training SpaceThinker

**PEFT Configuration**

- Architecture: Qwen2.5-VL-3B

- Base model: UCSC-VLAA/VLAA-Thinker-Qwen2.5VL-3B

- Method: LoRA finetuning (PEFT)

- LoRA Alpha: 256

- LoRA Rank: 128

- Target Modules: q_proj, v_proj

- Optimizer: AdamW (lr=2e-5), batch size = 1, epochs = 3

- Max input length: 1024 tokens

Scripts for LoRA SFT available at [trl](https://github.com/huggingface/trl/blob/main/examples/scripts/sft_vlm.py), wandb logs available [here](https://wandb.ai/smellslikeml/qwen2.5-3b-instruct-trl-sft-spacethinker).

## Model Evaluation

[](https://colab.research.google.com/drive/1NH2n-PRJJOiu_md8agyYCnxEZDGO5ICJ?usp=sharing)

The [Q-Spatial-Bench dataset](https://huggingface.co/datasets/andrewliao11/Q-Spatial-Bench) includes hundreds of

VQA samples designed to evaluate quantitative spatial reasoning of VLMs with high-precision.

Using the Colab notebook we evaluate **SpaceThinker** on the **QSpatial++** split under two conditions:

- **Default System Prompt**:

- Prompts completed: **93 / 101**

- Correct answers: **30**

- **Accuracy**: **32.26%**

- **Prompting for step-by-step reasoning** using the [spatial prompt](https://github.com/andrewliao11/Q-Spatial-Bench-code/blob/main/prompt_templates/spatial_prompt_steps.txt) from **Q-Spatial-Bench**:

- Correct answers: **53**

- **Accuracy**: **52.48%**

Using the spatial prompt improves the number of correct answers and overall accuracy rate while improving the task completion rate.

Updating the comparison from **Q-Spatial-Bench** [project page](https://andrewliao11.github.io/spatial_prompt/), the **SpaceThinker-Qwen2.5-VL-3B** VLM using

the SpatialPrompt for step-by-step reasoning performs on par with larger, closed, frontier API providers.

## Limitations

- Performance may degrade in cluttered environments or camera perspective.

- This model was fine-tuned using synthetic reasoning over an internet image dataset.

- Multimodal biases inherent to the base model (Qwen2.5-VL) may persist.

- Not intended for use in safety-critical or legal decision-making.

> Users are encouraged to evaluate outputs critically and consider fine-tuning for domain-specific safety and performance. Distances estimated using autoregressive

> transformers may help in higher-order reasoning for planning and behavior but may not be suitable replacements for measurements taken with high-precision sensors,

> calibrated stereo vision systems, or specialist monocular depth estimation models capable of more accurate, pixel-wise predictions and real-time performance.

## Citation

```

@article{chen2024spatialvlm,

title = {SpatialVLM: Endowing Vision-Language Models with Spatial Reasoning Capabilities},

author = {Chen, Boyuan and Xu, Zhuo and Kirmani, Sean and Ichter, Brian and Driess, Danny and Florence, Pete and Sadigh, Dorsa and Guibas, Leonidas and Xia, Fei},

journal = {arXiv preprint arXiv:2401.12168},

year = {2024},

url = {https://arxiv.org/abs/2401.12168},

}

@misc{qwen2.5-VL,

title = {Qwen2.5-VL},

url = {https://qwenlm.github.io/blog/qwen2.5-vl/},

author = {Qwen Team},

month = {January},

year = {2025}

}

@misc{vl-thinking2025,

title={SFT or RL? An Early Investigation into Training R1-Like Reasoning Large Vision-Language Models },

author={Hardy Chen and Haoqin Tu and Fali Wang and Hui Liu and Xianfeng Tang and Xinya Du and Yuyin Zhou and Cihang Xie},

year = {2025},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/UCSC-VLAA/VLAA-Thinking}},

}

@inproceedings{

liaos2024reasoning,

title={Reasoning Paths with Reference Objects Elicit Quantitative Spatial Reasoning in Large Vision-Language Models},

author={Yuan-Hong Liao and Rafid Mahmood and Sanja Fidler and David Acuna},

booktitle={The 2024 Conference on Empirical Methods in Natural Language Processing},

year={2024},

url={https://arxiv.org/abs/2409.09788},

}

``` |

|  |

|  |

|----------|-------------|--------|

| **Prompt:** How far is the man in the red hat from the pallet of boxes in feet? | **Prompt:** How far is the Goal from the penalty taker in this image? | **Prompt:** Approximately how many meters apart are the chair and bookshelf? |

| **Reasoning:** To determine how far the man in the red hat is from the pallet of boxes, I need to consider the spatial arrangement in the warehouse. The man is walking on the floor, and there's a visible gap between him and the pallet. First, I'll estimate the size of the pallet. A standard pallet is typically around 4 feet wide and 8 feet long. Given that the man is standing near the edge of the pallet, he might be about 2–3 feet away from the front edge of the pallet. Next, I'll consider the distance from the pallet to the man. The man appears to be walking along the aisle, which seems to be about 6–7 feet wide. If he is standing at the edge of the pallet, he would likely be about 2–3 feet from the front edge of the pallet. Combining these observations, the man in the red hat is approximately 2–3 feet from the pallet of boxes. | **Reasoning:** First, I observe the scene where a player in a red uniform stands near the goal. The goal itself is a standard soccer goalpost, which typically extends 2.5 meters beyond the line of the goalposts. The player appears to be standing close to the goal, but not directly at the goal line. To estimate the distance, I consider that the player's position relative to the goal suggests he is likely standing closer than 2.5 meters away. Given the typical setup of a penalty kick scenario, the player would be positioned further back from the goal than the goal itself. By visual estimation, the player seems to be standing approximately 10–15 meters from the goal. This range aligns with the common distance for a penalty kick, considering the player's stance and the goal's structure. Thus, the distance from the goal to the penalty taker is likely around 10–15 meters. | **Reasoning:** To determine the distance between the chair and the bookshelf, I'll consider the typical dimensions of these objects. A standard office chair is around 60–70 cm tall, and a bookshelf can be anywhere from 1.2 to 1.8 meters tall. Given that the chair is likely placed on a desk or floor level, the height difference between the chair and the top of the bookshelf would be approximately 1 meter. Assuming the bookshelf is at least 1 meter high, and the chair is at ground level, the vertical distance between them is indeed 1 meter. The horizontal distance might vary depending on the layout, but given the room's dimensions and the placement of the chair relative to the bookshelf, the total distance between the chair and the top of the bookshelf is likely around 1 meter. Therefore, the chair and bookshelf are approximately 1 meter apart. |

| **Answer:** 2–3 feet | **Answer:** 10–15 meters | **Answer:** 1 meter |

> **SpaceThinker** demonstrates grounded, quantitative spatial reasoning—inferring accurate distances, interpreting 3D scene context, and formatting open-ended answers precisely

> by integrating visual cues, real-world object priors, and human-centric spatial logic.

Read more about using test-time compute [here](https://huggingface.co/spaces/open-r1/README/discussions/10) for enhanced multimodal quantitative spatial reasoning.

## Running SpaceThinker

Try the **SpaceThinker** Space

[](https://huggingface.co/spaces/remyxai/SpaceThinker-Qwen2.5VL-3B)

To run locally with **llama.cpp**, install and build this [branch](https://github.com/HimariO/llama.cpp.qwen2.5vl/tree/qwen25-vl) and download the [.gguf weights here](https://huggingface.co/remyxai/SpaceThinker-Qwen2.5VL-3B/tree/main/gguf)

```bash

./llama-qwen2vl-cli -m spacethinker-qwen2.5VL-3B-F16.gguf

--mmproj spacethinker-qwen2.5vl-3b-vision.gguf

--image images/example_1.jpg --threads 24 -ngl 9

-p "Does the man in blue shirt working have a greater \\

height compared to the wooden pallet with boxes on floor?"

```

Run using **llama.cpp in colab**

[](https://colab.research.google.com/drive/1_ShhJAqnac8L4N9o1YNdsxCksSLJCrU7?usp=sharing)

Or run locally using **Transformers**

```python

import torch

from PIL import Image

from transformers import Qwen2_5_VLForConditionalGeneration, AutoProcessor

import requests

from io import BytesIO

# Configuration

model_id = "remyxai/SpaceThinker-Qwen2.5VL-3B"

image_path = "images/example_1.jpg" # or local path

prompt = "What can you infer from this image about the environment?"

system_message = (

"You are VL-Thinking 🤔, a helpful assistant with excellent reasoning ability. "

"You should first think about the reasoning process and then provide the answer. "

"Use

|

|----------|-------------|--------|

| **Prompt:** How far is the man in the red hat from the pallet of boxes in feet? | **Prompt:** How far is the Goal from the penalty taker in this image? | **Prompt:** Approximately how many meters apart are the chair and bookshelf? |

| **Reasoning:** To determine how far the man in the red hat is from the pallet of boxes, I need to consider the spatial arrangement in the warehouse. The man is walking on the floor, and there's a visible gap between him and the pallet. First, I'll estimate the size of the pallet. A standard pallet is typically around 4 feet wide and 8 feet long. Given that the man is standing near the edge of the pallet, he might be about 2–3 feet away from the front edge of the pallet. Next, I'll consider the distance from the pallet to the man. The man appears to be walking along the aisle, which seems to be about 6–7 feet wide. If he is standing at the edge of the pallet, he would likely be about 2–3 feet from the front edge of the pallet. Combining these observations, the man in the red hat is approximately 2–3 feet from the pallet of boxes. | **Reasoning:** First, I observe the scene where a player in a red uniform stands near the goal. The goal itself is a standard soccer goalpost, which typically extends 2.5 meters beyond the line of the goalposts. The player appears to be standing close to the goal, but not directly at the goal line. To estimate the distance, I consider that the player's position relative to the goal suggests he is likely standing closer than 2.5 meters away. Given the typical setup of a penalty kick scenario, the player would be positioned further back from the goal than the goal itself. By visual estimation, the player seems to be standing approximately 10–15 meters from the goal. This range aligns with the common distance for a penalty kick, considering the player's stance and the goal's structure. Thus, the distance from the goal to the penalty taker is likely around 10–15 meters. | **Reasoning:** To determine the distance between the chair and the bookshelf, I'll consider the typical dimensions of these objects. A standard office chair is around 60–70 cm tall, and a bookshelf can be anywhere from 1.2 to 1.8 meters tall. Given that the chair is likely placed on a desk or floor level, the height difference between the chair and the top of the bookshelf would be approximately 1 meter. Assuming the bookshelf is at least 1 meter high, and the chair is at ground level, the vertical distance between them is indeed 1 meter. The horizontal distance might vary depending on the layout, but given the room's dimensions and the placement of the chair relative to the bookshelf, the total distance between the chair and the top of the bookshelf is likely around 1 meter. Therefore, the chair and bookshelf are approximately 1 meter apart. |

| **Answer:** 2–3 feet | **Answer:** 10–15 meters | **Answer:** 1 meter |

> **SpaceThinker** demonstrates grounded, quantitative spatial reasoning—inferring accurate distances, interpreting 3D scene context, and formatting open-ended answers precisely

> by integrating visual cues, real-world object priors, and human-centric spatial logic.

Read more about using test-time compute [here](https://huggingface.co/spaces/open-r1/README/discussions/10) for enhanced multimodal quantitative spatial reasoning.

## Running SpaceThinker

Try the **SpaceThinker** Space

[](https://huggingface.co/spaces/remyxai/SpaceThinker-Qwen2.5VL-3B)

To run locally with **llama.cpp**, install and build this [branch](https://github.com/HimariO/llama.cpp.qwen2.5vl/tree/qwen25-vl) and download the [.gguf weights here](https://huggingface.co/remyxai/SpaceThinker-Qwen2.5VL-3B/tree/main/gguf)

```bash

./llama-qwen2vl-cli -m spacethinker-qwen2.5VL-3B-F16.gguf

--mmproj spacethinker-qwen2.5vl-3b-vision.gguf

--image images/example_1.jpg --threads 24 -ngl 9

-p "Does the man in blue shirt working have a greater \\

height compared to the wooden pallet with boxes on floor?"

```

Run using **llama.cpp in colab**

[](https://colab.research.google.com/drive/1_ShhJAqnac8L4N9o1YNdsxCksSLJCrU7?usp=sharing)

Or run locally using **Transformers**

```python

import torch

from PIL import Image

from transformers import Qwen2_5_VLForConditionalGeneration, AutoProcessor

import requests

from io import BytesIO

# Configuration

model_id = "remyxai/SpaceThinker-Qwen2.5VL-3B"

image_path = "images/example_1.jpg" # or local path

prompt = "What can you infer from this image about the environment?"

system_message = (

"You are VL-Thinking 🤔, a helpful assistant with excellent reasoning ability. "

"You should first think about the reasoning process and then provide the answer. "

"Use