Upload README.md with huggingface_hub


README.md

CHANGED


@@ -1,9 +1,596 @@

---
datasets:
- imagenet-1k
language: en
library_name: timm
license: apache-2.0
metrics:
- accuracy
model_name: recnext_t_share_channel
pipeline_tag: image-classification
tags:
- vision
- image-classification
- pytorch
- timm
- transformers
---

|

| 18 |

+

|

| 19 |

+

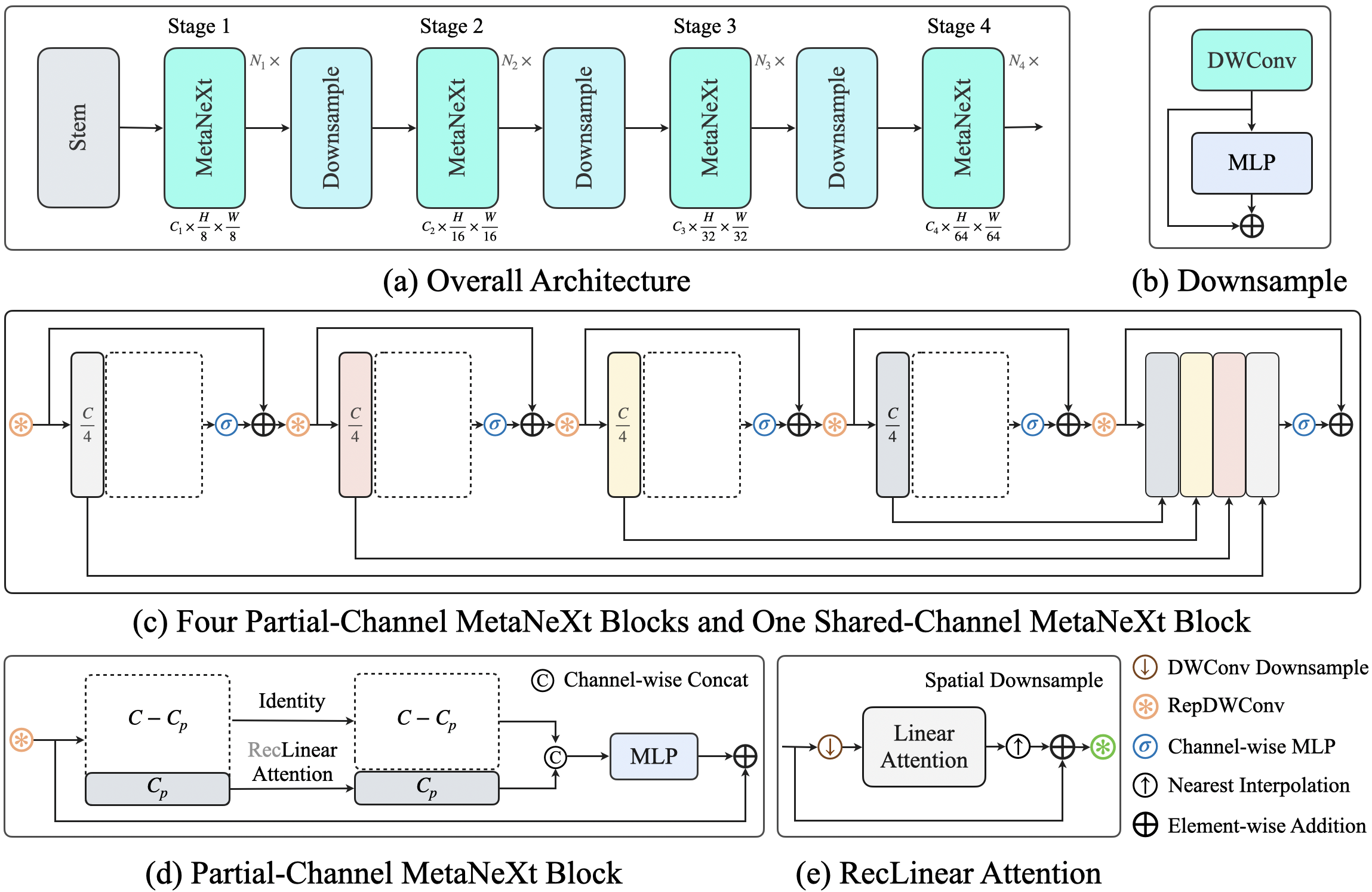

# Model Card for RecNeXt-CHANNEL (With Knowledge Distillation)

## Abstract

Recent advances in vision transformers (ViTs) have demonstrated the advantage of global modeling capabilities, prompting widespread integration of large-kernel convolutions for enlarging the effective receptive field (ERF). However, the quadratic scaling of parameter count and computational complexity (FLOPs) with respect to kernel size poses significant efficiency and optimization challenges. This paper introduces RecConv, a recursive decomposition strategy that efficiently constructs multi-frequency representations using small-kernel convolutions. RecConv establishes a linear relationship between parameter growth and decomposing levels which determines the effective receptive field $k\times 2^\ell$ for a base kernel $k$ and $\ell$ levels of decomposition, while maintaining constant FLOPs regardless of the ERF expansion. Specifically, RecConv achieves a parameter expansion of only $\ell+2$ times and a maximum FLOPs increase of $5/3$ times, compared to the exponential growth ($4^\ell$) of standard and depthwise convolutions. RecNeXt-M3 outperforms RepViT-M1.1 by 1.9 $AP^{box}$ on COCO with similar FLOPs. This innovation provides a promising avenue towards designing efficient and compact networks across various modalities. Codes and models can be found at https://github.com/suous/RecNeXt.
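
To make the scaling claim concrete, the following sketch tabulates the quantities named in the abstract for a few decomposition levels. It only evaluates the stated formulas ($k \times 2^\ell$, $\ell + 2$ and $4^\ell$); the base kernel size `k = 5` and the helper name `recconv_scaling` are illustrative assumptions, not values taken from the paper or the repository.

```python
def recconv_scaling(k: int, levels: int):
    """Evaluate the formulas quoted in the abstract for levels 0..levels."""
    rows = []
    for level in range(levels + 1):
        erf = k * 2 ** level         # effective receptive field: k * 2^l
        recconv_params = level + 2   # RecConv parameter expansion factor: l + 2
        dense_params = 4 ** level    # growth quoted for standard/depthwise convs: 4^l
        rows.append((level, erf, recconv_params, dense_params))
    return rows


if __name__ == "__main__":
    # k = 5 is an assumed base kernel size, chosen only for illustration.
    for level, erf, rec, dense in recconv_scaling(k=5, levels=4):
        print(f"l={level}  ERF={erf:3d}  RecConv x{rec}  large-kernel x{dense}")
```

The gap between the last two columns widens quickly with the level, which is the linear-versus-exponential contrast the abstract describes.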

[License](https://github.com/suous/RecNeXt/blob/main/LICENSE)
[arXiv](https://arxiv.org/abs/2412.19628)

<div style="display: flex; justify-content: space-between;">
    <img src="https://raw.githubusercontent.com/suous/RecNeXt/refs/heads/main/figures/RecConvA.png" alt="RecConvA" style="width: 52%;">
    <img src="https://raw.githubusercontent.com/suous/RecNeXt/refs/heads/main/figures/code.png" alt="code" style="width: 46%;">
</div>

## Model Details

- **Model Type**: Image Classification / Feature Extraction
- **Model Series**: M
- **Model Stats**:
  - **Parameters**: N/A
  - **MACs**: N/A
  - **Latency**: N/A (iPhone 13, iOS 18)
  - **Image Size**: 224x224

- **Architecture Configuration**:
  - **Embedding Dimensions**: N/A
  - **Depths**: N/A
  - **MLP Ratio**: 2

- **Paper**: [RecConv: Efficient Recursive Convolutions for Multi-Frequency Representations](https://arxiv.org/abs/2412.19628)

- **Code**: https://github.com/suous/RecNeXt

- **Dataset**: ImageNet-1K

## Recent Updates

**UPDATES** 🔥
- **2025/07/23**: Added a simple architecture whose overall design follows [LSNet](https://github.com/jameslahm/lsnet).
- **2025/07/04**: Uploaded classification models to [HuggingFace](https://huggingface.co/suous)🤗.
- **2025/07/01**: Added more comparisons with [LSNet](https://github.com/jameslahm/lsnet).
- **2025/06/27**: Added **A** series code and logs, replacing convolution with linear attention.
- **2025/03/19**: Added more ablation study results, including using attention with the RecConv design.
- **2025/01/02**: Uploaded checkpoints and training logs of RecNeXt-M0.
- **2024/12/29**: Uploaded checkpoints and training logs of RecNeXt-M1 - M5.

## Model Usage

### Image Classification

```python
from urllib.request import urlopen
from PIL import Image
import timm
import torch

img = Image.open(urlopen(
    'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'
))

model = timm.create_model('recnext_t_share_channel', pretrained=True, distillation=True)
model = model.eval()

# get model specific transforms (normalization, resize)
data_config = timm.data.resolve_model_data_config(model)
transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0))  # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)
```
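
The last line of the snippet turns logits into percentages and keeps the five largest. As a dependency-free illustration of that step, the sketch below does the same on a plain list. The logits and the helper names `softmax` and `top_k` are made up for the example and do not come from the model or from `timm`.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(values, k):
    """Return (value, index) pairs for the k largest entries, largest first."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    return [(values[i], i) for i in order[:k]]


if __name__ == "__main__":
    logits = [0.1, 2.0, -1.0, 3.5, 0.7, 1.2]  # hypothetical scores for 6 classes
    percentages = [p * 100 for p in softmax(logits)]
    for prob, index in top_k(percentages, k=5):
        print(f"class {index}: {prob:.1f}%")
```

With the real model, the indices returned by `torch.topk` are positions in the 1000-class ImageNet-1K label list.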

### Converting to Inference Mode

```python
import utils

# Convert training-time model to inference structure, fuse batchnorms
utils.replace_batchnorm(model)
```
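
Folding a batch-norm layer into the preceding convolution is standard arithmetic, which the sketch below checks on a single scalar channel. It illustrates the general technique only: `fuse_scale_bias` and `conv_then_bn` are hypothetical helpers, and the repository's `utils.replace_batchnorm` may be implemented differently.

```python
import math


def fuse_scale_bias(weight, bias, gamma, beta, mean, var, eps=1e-5):
    """Fold BatchNorm(gamma, beta, mean, var) into a preceding affine op y = weight * x + bias."""
    scale = gamma / math.sqrt(var + eps)
    return weight * scale, beta + (bias - mean) * scale


def conv_then_bn(x, weight, bias, gamma, beta, mean, var, eps=1e-5):
    """Reference path: affine op followed by inference-mode batch norm."""
    y = weight * x + bias
    return gamma * (y - mean) / math.sqrt(var + eps) + beta


if __name__ == "__main__":
    # Arbitrary illustrative values for one channel.
    params = dict(weight=0.8, bias=0.1, gamma=1.5, beta=-0.2, mean=0.3, var=0.04)
    fused_w, fused_b = fuse_scale_bias(**params)
    x = 2.0
    # Both paths should print the same value up to floating-point error.
    print(conv_then_bn(x, **params), fused_w * x + fused_b)
```

Because the fused weights reproduce the unfused output, the batch-norm layers can be dropped at inference time without changing predictions.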

|

| 98 |

+

## Model Comparison

|

| 99 |

+

|

| 100 |

+

### Classification

|

| 101 |

+

|

| 102 |

+

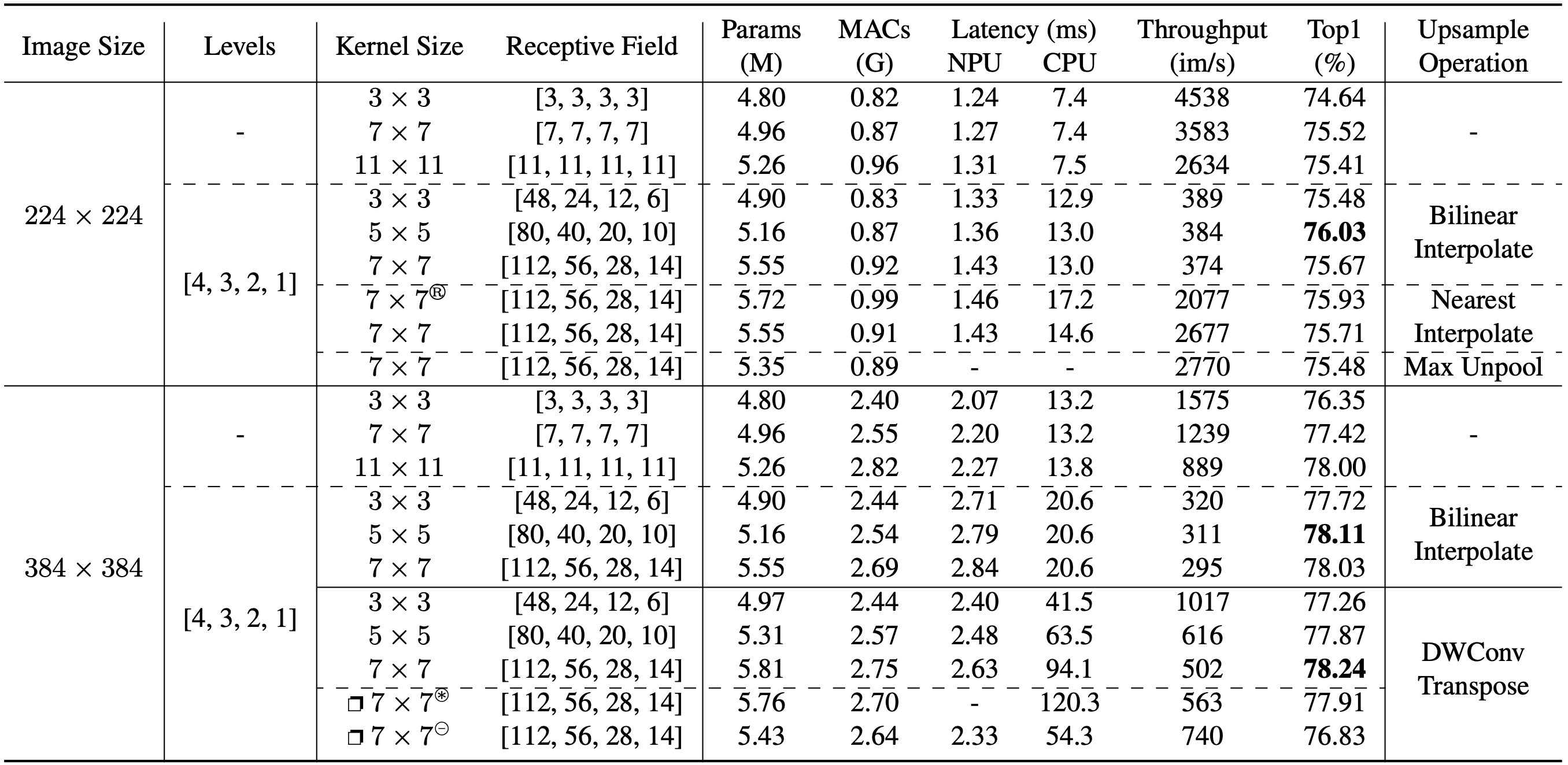

We introduce two series of models: the **A** series uses linear attention and nearest interpolation, while the **M** series employs convolution and bilinear interpolation for simplicity and broader hardware compatibility (e.g., to address suboptimal nearest interpolation support in some iOS versions).

|

| 103 |

+

|

| 104 |

+

> **dist**: distillation; **base**: without distillation (all models are trained over 300 epochs).

| model | top_1_accuracy | params | gmacs | npu_latency | cpu_latency | throughput | fused_weights | training_logs |
|-------|----------------|--------|-------|-------------|-------------|------------|---------------|---------------|
| M0 | 74.7* \| 73.2 | 2.5M | 0.4 | 1.0ms | 189ms | 750 | [dist](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m0_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m0_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_m0_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_m0_without_distill_300e.txt) |
| M1 | 79.2* \| 78.0 | 5.2M | 0.9 | 1.4ms | 361ms | 384 | [dist](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m1_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m1_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_m1_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_m1_without_distill_300e.txt) |
| M2 | 80.3* \| 79.2 | 6.8M | 1.2 | 1.5ms | 431ms | 325 | [dist](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m2_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m2_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_m2_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_m2_without_distill_300e.txt) |
| M3 | 80.9* \| 79.6 | 8.2M | 1.4 | 1.6ms | 482ms | 314 | [dist](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m3_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m3_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_m3_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_m3_without_distill_300e.txt) |
| M4 | 82.5* \| 81.4 | 14.1M | 2.4 | 2.4ms | 843ms | 169 | [dist](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m4_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m4_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_m4_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_m4_without_distill_300e.txt) |
| M5 | 83.3* \| 82.9 | 22.9M | 4.7 | 3.4ms | 1487ms | 104 | [dist](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m5_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m5_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_m5_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_m5_without_distill_300e.txt) |
| A0 | 75.0* \| 73.6 | 2.8M | 0.4 | 1.4ms | 177ms | 4891 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a0_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a0_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_a0_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_a0_without_distill_300e.txt) |
| A1 | 79.6* \| 78.3 | 5.9M | 0.9 | 1.9ms | 334ms | 2730 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a1_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a1_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_a1_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_a1_without_distill_300e.txt) |
| A2 | 80.8* \| 79.6 | 7.9M | 1.2 | 2.2ms | 413ms | 2331 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a2_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a2_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_a2_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_a2_without_distill_300e.txt) |
| A3 | 81.1* \| 80.1 | 9.0M | 1.4 | 2.4ms | 447ms | 2151 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a3_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a3_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_a3_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_a3_without_distill_300e.txt) |
| A4 | 82.5* \| 81.6 | 15.8M | 2.4 | 3.6ms | 764ms | 1265 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a4_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a4_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_a4_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_a4_without_distill_300e.txt) |
| A5 | 83.5* \| 83.1 | 25.7M | 4.7 | 5.6ms | 1376ms | 733 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a5_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a5_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/logs/distill/recnext_a5_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/logs/normal/recnext_a5_without_distill_300e.txt) |
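
For a quick read of the table, the gain from distillation is the difference between the two accuracy figures in each row. The sketch below computes it for the M series using the numbers from the table above. It assumes the first figure in each cell is the distilled result, matching the dist | base order of the link columns; the dictionary layout and the name `distillation_gain` are conveniences for the example.

```python
# Top-1 accuracy (%) copied from the table: (with distillation, without distillation).
# Assumption: the first value in each table cell is the distilled model.
M_SERIES = {
    "M0": (74.7, 73.2),
    "M1": (79.2, 78.0),
    "M2": (80.3, 79.2),
    "M3": (80.9, 79.6),
    "M4": (82.5, 81.4),
    "M5": (83.3, 82.9),
}


def distillation_gain(table):
    """Return the accuracy gain (percentage points) from distillation per model."""
    return {name: round(dist - base, 1) for name, (dist, base) in table.items()}


if __name__ == "__main__":
    for name, gain in distillation_gain(M_SERIES).items():
        print(f"{name}: +{gain} points")
```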

### Comparison with [LSNet](https://github.com/jameslahm/lsnet)

We present a simple architecture whose overall design follows [LSNet](https://github.com/jameslahm/lsnet). The framework centers on sharing channel features from the previous layers. Our motivation is to reduce the computational cost of token mixers and to minimize feature redundancy in the final stage.

#### With **Shared-Channel Blocks**

| model | top_1_accuracy | params | gmacs | npu_latency | cpu_latency | throughput | fused_weights | training_logs |
|-------|----------------|--------|-------|-------------|-------------|------------|---------------|---------------|
| T | 76.8 \| 75.2 | 12.1M | 0.3 | 1.8ms | 105ms | 13957 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_t_share_channel_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_t_share_channel_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/distill/recnext_t_share_channel_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/normal/recnext_t_share_channel_without_distill_300e.txt) |
| S | 79.5 \| 78.3 | 15.8M | 0.7 | 2.0ms | 182ms | 8034 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_s_share_channel_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_s_share_channel_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/distill/recnext_s_share_channel_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/normal/recnext_s_share_channel_without_distill_300e.txt) |
| B | 81.5 \| 80.3 | 19.2M | 1.1 | 2.5ms | 296ms | 4472 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_b_share_channel_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_b_share_channel_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/distill/recnext_b_share_channel_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/normal/recnext_b_share_channel_without_distill_300e.txt) |

#### Without **Shared-Channel Blocks**

| model | top_1_accuracy | params | gmacs | npu_latency | cpu_latency | throughput | fused_weights | training_logs |
|-------|----------------|--------|-------|-------------|-------------|------------|---------------|---------------|
| T | 76.6* \| 75.1 | 12.1M | 0.3 | 1.8ms | 109ms | 13878 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_t_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_t_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/distill/recnext_t_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/normal/recnext_t_without_distill_300e.txt) |
| S | 79.6* \| 78.3 | 15.8M | 0.7 | 2.0ms | 188ms | 7989 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_s_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_s_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/distill/recnext_s_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/normal/recnext_s_without_distill_300e.txt) |
| B | 81.4* \| 80.3 | 19.3M | 1.1 | 2.5ms | 290ms | 4450 | [dist](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_b_distill_300e_fused.pt) \| [base](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_b_without_distill_300e_fused.pt) | [dist](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/distill/recnext_b_distill_300e.txt) \| [base](https://github.com/suous/RecNeXt/blob/main/lsnet/logs/normal/recnext_b_without_distill_300e.txt) |

> The NPU latency is measured on an iPhone 13 with models compiled by Core ML Tools.
> The CPU latency is measured on a quad-core ARM Cortex-A57 processor in ONNX format.
> The throughput is tested on an Nvidia RTX3090 with the maximum power-of-two batch size that fits in memory.
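
The "maximum power-of-two batch size that fits in memory" can be found by doubling until a trial fails. The sketch below shows that search with a caller-supplied predicate. `largest_power_of_two_batch` and the toy memory model are assumptions for illustration; in practice `fits` would attempt a forward pass and catch an out-of-memory error, and the repository's `speed_gpu.py` may do this differently.

```python
def largest_power_of_two_batch(fits, limit=2 ** 16):
    """Return the largest power-of-two batch size for which fits(batch) is True, or 0 if none."""
    best = 0
    batch = 1
    while batch <= limit and fits(batch):
        best = batch
        batch *= 2
    return best


if __name__ == "__main__":
    # Hypothetical memory model: each sample needs 90 MB and 24,000 MB is available.
    def fits_in_memory(batch):
        return batch * 90 <= 24_000

    print(largest_power_of_two_batch(fits_in_memory))
```

Throughput is then the number of images processed per second at that batch size.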

## Latency Measurement

The latency reported in RecNeXt for iPhone 13 (iOS 18) is measured with the benchmark tool from [Xcode 14](https://developer.apple.com/videos/play/wwdc2022/10027/).

<details>
<summary>
RecNeXt-M0
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_m0_224x224.png" alt="recnext_m0">
</details>

<details>
<summary>
RecNeXt-M1
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_m1_224x224.png" alt="recnext_m1">
</details>

<details>
<summary>
RecNeXt-M2
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_m2_224x224.png" alt="recnext_m2">
</details>

<details>
<summary>
RecNeXt-M3
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_m3_224x224.png" alt="recnext_m3">
</details>

<details>
<summary>
RecNeXt-M4
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_m4_224x224.png" alt="recnext_m4">
</details>

<details>
<summary>
RecNeXt-M5
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_m5_224x224.png" alt="recnext_m5">
</details>

<details>
<summary>
RecNeXt-A0
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_a0_224x224.png" alt="recnext_a0">
</details>

<details>
<summary>
RecNeXt-A1
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_a1_224x224.png" alt="recnext_a1">
</details>

<details>
<summary>
RecNeXt-A2
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_a2_224x224.png" alt="recnext_a2">
</details>

<details>
<summary>
RecNeXt-A3
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_a3_224x224.png" alt="recnext_a3">
</details>

<details>
<summary>
RecNeXt-A4
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_a4_224x224.png" alt="recnext_a4">
</details>

<details>
<summary>
RecNeXt-A5
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/latency/recnext_a5_224x224.png" alt="recnext_a5">
</details>

<details>
<summary>
RecNeXt-T
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/lsnet/figures/latency/recnext_t_224x224.png" alt="recnext_t">
</details>

<details>
<summary>
RecNeXt-S
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/lsnet/figures/latency/recnext_s_224x224.png" alt="recnext_s">
</details>

<details>
<summary>
RecNeXt-B
</summary>
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/lsnet/figures/latency/recnext_b_224x224.png" alt="recnext_b">
</details>

Tips: export the model to Core ML format
```
python export_coreml.py --model recnext_m1 --ckpt pretrain/recnext_m1_distill_300e.pth
```
Tips: measure the throughput on GPU
```
python speed_gpu.py --model recnext_m1
```

## ImageNet (Training and Evaluation)

### Prerequisites
A `conda` virtual environment is recommended.
```
conda create -n recnext python=3.8
conda activate recnext
pip install -r requirements.txt
```

### Data preparation

Download and extract ImageNet train and val images from http://image-net.org/. The training and validation data are expected to be in the `train` folder and `val` folder respectively:

```bash
# script to extract ImageNet dataset: https://github.com/pytorch/examples/blob/main/imagenet/extract_ILSVRC.sh
# ILSVRC2012_img_train.tar (about 138 GB)
# ILSVRC2012_img_val.tar (about 6.3 GB)
```

```
# organize the ImageNet dataset as follows:
imagenet
├── train
│   ├── n01440764
│   │   ├── n01440764_10026.JPEG
│   │   ├── n01440764_10027.JPEG
│   │   ├── ......
│   ├── ......
├── val
│   ├── n01440764
│   │   ├── ILSVRC2012_val_00000293.JPEG
│   │   ├── ILSVRC2012_val_00002138.JPEG
│   │   ├── ......
│   ├── ......
```
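
Before launching a long training run it can help to confirm that the folders match this layout. The sketch below counts non-empty class folders per split using only the standard library. The function name `count_classes` and the `~/imagenet` location are assumptions for the example; they are not part of the repository.

```python
from pathlib import Path


def count_classes(root):
    """Map each split ('train', 'val') to the number of class sub-folders that contain entries."""
    counts = {}
    for split in ("train", "val"):
        split_dir = Path(root) / split
        if not split_dir.is_dir():
            counts[split] = 0  # missing split folder
            continue
        counts[split] = sum(
            1
            for class_dir in split_dir.iterdir()
            if class_dir.is_dir() and any(class_dir.iterdir())
        )
    return counts


if __name__ == "__main__":
    # ImageNet-1K has 1000 classes, so both splits should report 1000.
    print(count_classes(Path.home() / "imagenet"))
```

A count below 1000 for either split usually means the archive was only partly extracted or the validation images were not sorted into class folders.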

### Training
To train RecNeXt-M1 on an 8-GPU machine:

```
python -m torch.distributed.launch --nproc_per_node=8 --master_port 12346 --use_env main.py --model recnext_m1 --data-path ~/imagenet --dist-eval
```
Tips: replace the data path and model name with your own.

### Testing
For example, to test RecNeXt-M1:
```
python main.py --eval --model recnext_m1 --resume pretrain/recnext_m1_distill_300e.pth --data-path ~/imagenet
```

Use the pretrained model without knowledge distillation from [HuggingFace](https://huggingface.co/suous) 🤗.
```bash
python main.py --eval --model recnext_m1 --data-path ~/imagenet --pretrained --distillation-type none
```

Use the pretrained model with knowledge distillation from [HuggingFace](https://huggingface.co/suous) 🤗.
```bash
python main.py --eval --model recnext_m1 --data-path ~/imagenet --pretrained --distillation-type hard
```

### Fused model evaluation
For example, to evaluate RecNeXt-M1 with the fused model: [Open in Colab](https://colab.research.google.com/github/suous/RecNeXt/blob/main/demo/fused_model_evaluation.ipynb)
```
python fuse_eval.py --model recnext_m1 --resume pretrain/recnext_m1_distill_300e_fused.pt --data-path ~/imagenet
```

### Extract model for publishing

```
# without distillation
python publish.py --model_name recnext_m1 --checkpoint_path pretrain/checkpoint_best.pth --epochs 300

# with distillation
python publish.py --model_name recnext_m1 --checkpoint_path pretrain/checkpoint_best.pth --epochs 300 --distillation

# fused model
python publish.py --model_name recnext_m1 --checkpoint_path pretrain/checkpoint_best.pth --epochs 300 --fused
```

## Downstream Tasks
[Object Detection and Instance Segmentation](https://github.com/suous/RecNeXt/blob/main/detection/README.md)<br>

| Model | $AP^b$ | $AP_{50}^b$ | $AP_{75}^b$ | $AP^m$ | $AP_{50}^m$ | $AP_{75}^m$ | Latency | Ckpt | Log |
|:-----------|:------:|:-----------:|:-----------:|:------:|:-----------:|:-----------:|:-------:|:----:|:---:|
| RecNeXt-M3 | 41.7 | 63.4 | 45.4 | 38.6 | 60.5 | 41.4 | 5.2ms | [M3](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m3_coco.pth) | [M3](https://raw.githubusercontent.com/suous/RecNeXt/main/detection/logs/recnext_m3_coco.json) |
| RecNeXt-M4 | 43.5 | 64.9 | 47.7 | 39.7 | 62.1 | 42.4 | 7.6ms | [M4](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m4_coco.pth) | [M4](https://raw.githubusercontent.com/suous/RecNeXt/main/detection/logs/recnext_m4_coco.json) |
| RecNeXt-M5 | 44.6 | 66.3 | 49.0 | 40.6 | 63.5 | 43.5 | 12.4ms | [M5](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m5_coco.pth) | [M5](https://raw.githubusercontent.com/suous/RecNeXt/main/detection/logs/recnext_m5_coco.json) |
| RecNeXt-A3 | 42.1 | 64.1 | 46.2 | 38.8 | 61.1 | 41.6 | 8.3ms | [A3](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a3_coco.pth) | [A3](https://raw.githubusercontent.com/suous/RecNeXt/main/detection/logs/recnext_a3_coco.json) |
| RecNeXt-A4 | 43.5 | 65.4 | 47.6 | 39.8 | 62.4 | 42.9 | 14.0ms | [A4](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a4_coco.pth) | [A4](https://raw.githubusercontent.com/suous/RecNeXt/main/detection/logs/recnext_a4_coco.json) |
| RecNeXt-A5 | 44.4 | 66.3 | 48.9 | 40.3 | 63.3 | 43.4 | 25.3ms | [A5](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a5_coco.pth) | [A5](https://raw.githubusercontent.com/suous/RecNeXt/main/detection/logs/recnext_a5_coco.json) |

[Semantic Segmentation](https://github.com/suous/RecNeXt/blob/main/segmentation/README.md)

| Model | mIoU | Latency | Ckpt | Log |
|:-----------|:----:|:-------:|:----:|:---:|
| RecNeXt-M3 | 41.0 | 5.6ms | [M3](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m3_ade20k.pth) | [M3](https://raw.githubusercontent.com/suous/RecNeXt/main/segmentation/logs/recnext_m3_ade20k.json) |
| RecNeXt-M4 | 43.6 | 7.2ms | [M4](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m4_ade20k.pth) | [M4](https://raw.githubusercontent.com/suous/RecNeXt/main/segmentation/logs/recnext_m4_ade20k.json) |
| RecNeXt-M5 | 46.0 | 12.4ms | [M5](https://github.com/suous/RecNeXt/releases/download/v1.0/recnext_m5_ade20k.pth) | [M5](https://raw.githubusercontent.com/suous/RecNeXt/main/segmentation/logs/recnext_m5_ade20k.json) |
| RecNeXt-A3 | 41.9 | 8.4ms | [A3](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a3_ade20k.pth) | [A3](https://raw.githubusercontent.com/suous/RecNeXt/main/segmentation/logs/recnext_a3_ade20k.json) |
| RecNeXt-A4 | 43.0 | 14.0ms | [A4](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a4_ade20k.pth) | [A4](https://raw.githubusercontent.com/suous/RecNeXt/main/segmentation/logs/recnext_a4_ade20k.json) |
| RecNeXt-A5 | 46.5 | 25.3ms | [A5](https://github.com/suous/RecNeXt/releases/download/v2.0/recnext_a5_ade20k.pth) | [A5](https://raw.githubusercontent.com/suous/RecNeXt/main/segmentation/logs/recnext_a5_ade20k.json) |

## Ablation Study

### Overall Experiments

<details>
<summary>
<span style="font-size: larger; ">Ablation Logs</span>
</summary>

<pre>
logs/ablation
├── 224
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/224/recnext_m1_120e_224x224_3x3_7464.txt">recnext_m1_120e_224x224_3x3_7464.txt</a>
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/224/recnext_m1_120e_224x224_7x7_7552.txt">recnext_m1_120e_224x224_7x7_7552.txt</a>
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/224/recnext_m1_120e_224x224_bxb_7541.txt">recnext_m1_120e_224x224_bxb_7541.txt</a>
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/224/recnext_m1_120e_224x224_rec_3x3_7548.txt">recnext_m1_120e_224x224_rec_3x3_7548.txt</a>
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/224/recnext_m1_120e_224x224_rec_5x5_7603.txt">recnext_m1_120e_224x224_rec_5x5_7603.txt</a>
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/224/recnext_m1_120e_224x224_rec_7x7_7567.txt">recnext_m1_120e_224x224_rec_7x7_7567.txt</a>
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/224/recnext_m1_120e_224x224_rec_7x7_nearest_7571.txt">recnext_m1_120e_224x224_rec_7x7_nearest_7571.txt</a>
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/224/recnext_m1_120e_224x224_rec_7x7_nearest_ssm_7593.txt">recnext_m1_120e_224x224_rec_7x7_nearest_ssm_7593.txt</a>
│   └── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/224/recnext_m1_120e_224x224_rec_7x7_unpool_7548.txt">recnext_m1_120e_224x224_rec_7x7_unpool_7548.txt</a>
└── 384
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_3x3_7635.txt">recnext_m1_120e_384x384_3x3_7635.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_7x7_7742.txt">recnext_m1_120e_384x384_7x7_7742.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_bxb_7800.txt">recnext_m1_120e_384x384_bxb_7800.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_rec_3x3_7772.txt">recnext_m1_120e_384x384_rec_3x3_7772.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_rec_5x5_7811.txt">recnext_m1_120e_384x384_rec_5x5_7811.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_rec_7x7_7803.txt">recnext_m1_120e_384x384_rec_7x7_7803.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_rec_convtrans_3x3_basic_7726.txt">recnext_m1_120e_384x384_rec_convtrans_3x3_basic_7726.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_rec_convtrans_5x5_basic_7787.txt">recnext_m1_120e_384x384_rec_convtrans_5x5_basic_7787.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_rec_convtrans_7x7_basic_7824.txt">recnext_m1_120e_384x384_rec_convtrans_7x7_basic_7824.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_rec_convtrans_7x7_group_7791.txt">recnext_m1_120e_384x384_rec_convtrans_7x7_group_7791.txt</a>
    └── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/logs/ablation/384/recnext_m1_120e_384x384_rec_convtrans_7x7_split_7683.txt">recnext_m1_120e_384x384_rec_convtrans_7x7_split_7683.txt</a>
</pre>
</details>

<details>
<summary>
<span style="font-size: larger; ">RecConv Recurrent Aggregation</span>
</summary>

```python
import torch.nn as nn


class RecConv2d(nn.Module):
    def __init__(self, in_channels, kernel_size=5, bias=False, level=1, mode='nearest'):
        super().__init__()
        self.level = level
        self.mode = mode
        # Shared settings: every convolution is depthwise and keeps the channel count.
        kwargs = {
            'in_channels': in_channels,
            'out_channels': in_channels,
            'groups': in_channels,
            'kernel_size': kernel_size,
            'padding': kernel_size // 2,
            'bias': bias
        }
        self.n = nn.Conv2d(stride=2, **kwargs)  # stride-2 downsampling, reused at every level
        self.a = nn.Conv2d(**kwargs) if level > 1 else None
        self.b = nn.Conv2d(**kwargs)
        self.c = nn.Conv2d(**kwargs)
        self.d = nn.Conv2d(**kwargs)

    def forward(self, x):
        # 1. Generate Multi-scale Features.
        fs = [x]
        for _ in range(self.level):
            fs.append(self.n(fs[-1]))

        # 2. Multi-scale Recurrent Aggregation.
        h = None
        for i, o in reversed(list(zip(fs[1:], fs[:-1]))):
            h = self.a(h) + self.b(i) if h is not None else self.b(i)
            h = nn.functional.interpolate(h, size=o.shape[2:], mode=self.mode)
        return self.c(h) + self.d(x)
```
</details>
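
As a rough cost check on the `RecConv2d` module above, the sketch below counts parameters and multiply-accumulates (MACs) by walking the same steps as `forward`. It is an estimate under stated assumptions, not a figure from the paper: the kernel size is odd, each depthwise convolution costs `channels * kernel_size**2` MACs per output position, interpolation and additions are ignored, and `recconv_cost` is a hypothetical helper name.

```python
import math


def recconv_cost(channels, height, width, kernel_size=5, level=1):
    """Estimate (params, macs) for the RecConv2d module shown above.

    Hypothetical helper: counts only the depthwise convolutions n, a, b, c, d.
    """
    per_position = channels * kernel_size ** 2  # MACs per output pixel of one depthwise conv

    # Spatial sizes of fs[0..level]; a stride-2 conv with padding k // 2 (odd k) gives ceil(size / 2).
    sizes = [(height, width)]
    for _ in range(level):
        h, w = sizes[-1]
        sizes.append((math.ceil(h / 2), math.ceil(w / 2)))

    def area(i):
        return sizes[i][0] * sizes[i][1]

    # n and b each run once per level, at the resolution of fs[1..level].
    positions = sum(2 * area(i) for i in range(1, level + 1))
    # a runs on the upsampled state at the resolution of fs[1..level-1].
    positions += sum(area(i) for i in range(1, level))
    # c and d run once at full resolution.
    positions += 2 * area(0)

    num_convs = 5 if level > 1 else 4  # self.a is only created when level > 1
    params = num_convs * per_position  # weights are shared across levels, bias=False
    return params, positions * per_position


params, macs = recconv_cost(channels=64, height=56, width=56, kernel_size=5, level=2)
print(params, macs)
```

Under these assumptions, a 64-channel 56×56 feature map with `level=2` costs 8,000 parameters and about 14.4M MACs, a little under three times the cost of a single full-resolution 5×5 depthwise convolution, because each additional level works on a feature map with a quarter of the positions.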

### RecConv Variants

<div style="display: flex; justify-content: space-between;">
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/RecConvB.png" alt="RecConvB" style="width: 49%;">
<img src="https://raw.githubusercontent.com/suous/RecNeXt/main/figures/RecConvC.png" alt="RecConvC" style="width: 49%;">
</div>

<details>
<summary>
<span style="font-size: larger; ">RecConv Variant Details</span>
</summary>

- **RecConv using group convolutions**

```python
import torch.nn as nn


# RecConv Variant A
# recursive decomposition on both spatial and channel dimensions
# downsample and upsample through group convolutions
class RecConv2d(nn.Module):
    def __init__(self, in_channels, kernel_size=5, bias=False, level=2):
        super().__init__()
        self.level = level
        kwargs = {'kernel_size': kernel_size, 'padding': kernel_size // 2, 'bias': bias}
        downs = []
        for l in range(level):
            i_channels = in_channels // (2 ** l)
            o_channels = in_channels // (2 ** (l+1))
            downs.append(nn.Conv2d(in_channels=i_channels, out_channels=o_channels, groups=o_channels, stride=2, **kwargs))
        self.downs = nn.ModuleList(downs)

        convs = []
        for l in range(level+1):
            channels = in_channels // (2 ** l)
            convs.append(nn.Conv2d(in_channels=channels, out_channels=channels, groups=channels, **kwargs))
        self.convs = nn.ModuleList(reversed(convs))

        # this is the simplest modification; it only supports resolutions like 256, 384, etc.
        kwargs['kernel_size'] = kernel_size + 1
        ups = []
        for l in range(level):
            i_channels = in_channels // (2 ** (l+1))
            o_channels = in_channels // (2 ** l)
            ups.append(nn.ConvTranspose2d(in_channels=i_channels, out_channels=o_channels, groups=i_channels, stride=2, **kwargs))
        self.ups = nn.ModuleList(reversed(ups))

    def forward(self, x):
        i = x
        features = []
        for down in self.downs:
            x, s = down(x), x.shape[2:]
            features.append((x, s))

        x = 0
        for conv, up, (f, s) in zip(self.convs, self.ups, reversed(features)):
            x = up(conv(f + x))
        return self.convs[self.level](i + x)
```

- **RecConv using channel-wise concatenation**

```python
import torch
import torch.nn as nn


# recursive decomposition on both spatial and channel dimensions
# downsample using channel-wise split, followed by depthwise convolution with a stride of 2
# upsample through channel-wise concatenation
class RecConv2d(nn.Module):
    def __init__(self, in_channels, kernel_size=5, bias=False, level=2):
        super().__init__()
        self.level = level
        kwargs = {'kernel_size': kernel_size, 'padding': kernel_size // 2, 'bias': bias}
        downs = []
        for l in range(level):
            channels = in_channels // (2 ** (l+1))
            downs.append(nn.Conv2d(in_channels=channels, out_channels=channels, groups=channels, stride=2, **kwargs))
        self.downs = nn.ModuleList(downs)

        convs = []
        for l in range(level+1):
            channels = in_channels // (2 ** l)
            convs.append(nn.Conv2d(in_channels=channels, out_channels=channels, groups=channels, **kwargs))
        self.convs = nn.ModuleList(reversed(convs))

        # this is the simplest modification; it only supports resolutions like 256, 384, etc.
        kwargs['kernel_size'] = kernel_size + 1
        ups = []
        for l in range(level):
            channels = in_channels // (2 ** (l+1))
            ups.append(nn.ConvTranspose2d(in_channels=channels, out_channels=channels, groups=channels, stride=2, **kwargs))
        self.ups = nn.ModuleList(reversed(ups))

    def forward(self, x):
        features = []
        for down in self.downs:
            r, x = torch.chunk(x, 2, dim=1)
            x, s = down(x), x.shape[2:]
            features.append((r, s))

        for conv, up, (r, s) in zip(self.convs, self.ups, reversed(features)):
            x = torch.cat([r, up(conv(x))], dim=1)
        return self.convs[self.level](x)
```
</details>
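
The note in both variants that only resolutions like 256 or 384 are supported follows from the output-size formulas of `nn.Conv2d` and `nn.ConvTranspose2d`. The sketch below applies those formulas with the padding, stride, and `kernel_size + 1` transposed kernel used above. It assumes an odd `kernel_size`, and `conv_out`, `conv_transpose_out`, and `roundtrip_sizes` are hypothetical helper names introduced only for illustration.

```python
def conv_out(size, kernel_size=5, stride=2):
    """Output length of the stride-2 conv with padding = kernel_size // 2."""
    padding = kernel_size // 2
    return (size + 2 * padding - kernel_size) // stride + 1


def conv_transpose_out(size, kernel_size=5, stride=2):
    """Output length of the transposed conv, which uses kernel_size + 1 and the same padding."""
    padding = kernel_size // 2
    return (size - 1) * stride - 2 * padding + (kernel_size + 1)


def roundtrip_sizes(size, level=2, kernel_size=5):
    """Return (sizes while downsampling, sizes while upsampling back); hypothetical helper."""
    downs = [size]
    for _ in range(level):
        downs.append(conv_out(downs[-1], kernel_size))
    ups = [downs[-1]]
    for _ in range(level):
        ups.append(conv_transpose_out(ups[-1], kernel_size))
    return downs, ups


print(roundtrip_sizes(8, level=2))  # even at every level: sizes match on the way back up
print(roundtrip_sizes(7, level=1))  # odd size: the upsampled map no longer matches
```

With these settings the transposed convolution exactly doubles its input, while the strided convolution rounds up when halving. An 8×8 map therefore round-trips as 8 → 4 → 2 → 4 → 8, but a 7×7 map becomes 7 → 4 → 8, and the addition or concatenation in `forward` would fail on the shape mismatch. Which input resolutions keep every feature map even depends on the backbone's strides, which are not shown here.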

### RecConv Beyond

We apply RecConv to the small variants of [MLLA](https://github.com/LeapLabTHU/MLLA), replacing the linear attention and downsampling layers.
This results in higher throughput and lower training memory usage.

<pre>
mlla/logs
├── 1_mlla_nano
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/mlla/logs/1_mlla_nano/01_baseline.txt">01_baseline.txt</a>
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/mlla/logs/1_mlla_nano/02_recconv_5x5_conv_trans.txt">02_recconv_5x5_conv_trans.txt</a>
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/mlla/logs/1_mlla_nano/03_recconv_5x5_nearest_interp.txt">03_recconv_5x5_nearest_interp.txt</a>
│   ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/mlla/logs/1_mlla_nano/04_recattn_nearest_interp.txt">04_recattn_nearest_interp.txt</a>
│   └── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/mlla/logs/1_mlla_nano/05_recattn_nearest_interp_simplify.txt">05_recattn_nearest_interp_simplify.txt</a>
└── 2_mlla_mini
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/mlla/logs/2_mlla_mini/01_baseline.txt">01_baseline.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/mlla/logs/2_mlla_mini/02_recconv_5x5_conv_trans.txt">02_recconv_5x5_conv_trans.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/mlla/logs/2_mlla_mini/03_recconv_5x5_nearest_interp.txt">03_recconv_5x5_nearest_interp.txt</a>
    ├── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/mlla/logs/2_mlla_mini/04_recattn_nearest_interp.txt">04_recattn_nearest_interp.txt</a>
    └── <a style="text-decoration:none" href="https://raw.githubusercontent.com/suous/RecNeXt/main/mlla/logs/2_mlla_mini/05_recattn_nearest_interp_simplify.txt">05_recattn_nearest_interp_simplify.txt</a>
</pre>

## Limitations

1. RecNeXt exhibits the lowest **throughput** among models of comparable parameter size due to extensive use of bilinear interpolation, which can be mitigated by employing transposed convolution.
2. The recursive decomposition may introduce **numerical instability** during mixed precision training, which can be alleviated by using fixed-point or BFloat16 arithmetic.
3. **Compatibility issues** with bilinear interpolation and transposed convolution on certain iOS versions may also result in performance degradation.
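
One reason BFloat16 can help with the instability in item 2 is its wider exponent range: half precision (FP16) cannot represent values above 65504, while BFloat16 keeps the float32 exponent at reduced mantissa precision. The standard-library sketch below illustrates the difference. It emulates BFloat16 by truncating a float32 (real hardware typically rounds instead), the helper names are hypothetical, and whether overflow is the actual failure mode in RecNeXt training is an assumption rather than something the source states.

```python
import struct


def fits_float16(value):
    """Return True if value can be packed as IEEE half precision without overflow."""
    try:
        struct.pack("<e", value)
        return True
    except (OverflowError, struct.error):
        return False


def to_bfloat16(value):
    """Emulate bfloat16 by keeping only the top 16 bits of the float32 encoding."""
    bits = struct.unpack("<I", struct.pack("<f", value))[0]
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFF0000))[0]


activation = 70000.0  # hypothetical large intermediate value
print(fits_float16(activation))  # FP16 tops out at 65504
print(to_bfloat16(activation))   # representable in BFloat16, at coarser precision
```

The trade-off is precision: the emulated BFloat16 value keeps only 7 explicit mantissa bits, so 70000.0 is stored as 69632.0.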

## Acknowledgement

The classification (ImageNet) code base is partly built on [LeViT](https://github.com/facebookresearch/LeViT), [PoolFormer](https://github.com/sail-sg/poolformer), [EfficientFormer](https://github.com/snap-research/EfficientFormer), [RepViT](https://github.com/THU-MIG/RepViT), [LSNet](https://github.com/jameslahm/lsnet), and [MogaNet](https://github.com/Westlake-AI/MogaNet).

The detection and segmentation pipeline is from [MMCV](https://github.com/open-mmlab/mmcv) ([MMDetection](https://github.com/open-mmlab/mmdetection) and [MMSegmentation](https://github.com/open-mmlab/mmsegmentation)).

Thanks for the great implementations!

## Citation

```BibTeX
@misc{zhao2024recnext,
      title={RecConv: Efficient Recursive Convolutions for Multi-Frequency Representations},
      author={Mingshu Zhao and Yi Luo and Yong Ouyang},
      year={2024},
      eprint={2412.19628},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}
```