See our collection for all versions of Qwen3, including GGUF, 4-bit, and 16-bit formats.

Learn to run Qwen3-Coder correctly: read our Guide.

See Unsloth Dynamic 2.0 GGUFs for our quantization benchmarks.

✨ Read our Qwen3-Coder Guide here!

- Fine-tune Qwen3 (14B) for free using our Google Colab notebook!

- Read our Blog about Qwen3 support: unsloth.ai/blog/qwen3

- View the rest of our notebooks in our docs here.

- Run & export your fine-tuned model to Ollama, llama.cpp or HF.

| Unsloth supports | Free Notebooks | Performance | Memory use |
|---|---|---|---|
| Qwen3 (14B) | ▶️ Start on Colab | 3x faster | 70% less |
| GRPO with Qwen3 (8B) | ▶️ Start on Colab | 3x faster | 80% less |
| Llama-3.2 (3B) | ▶️ Start on Colab | 2.4x faster | 58% less |
| Llama-3.2 (11B vision) | ▶️ Start on Colab | 2x faster | 60% less |
| Qwen2.5 (7B) | ▶️ Start on Colab | 2x faster | 60% less |

Qwen3-Coder-480B-A35B-Instruct

Highlights

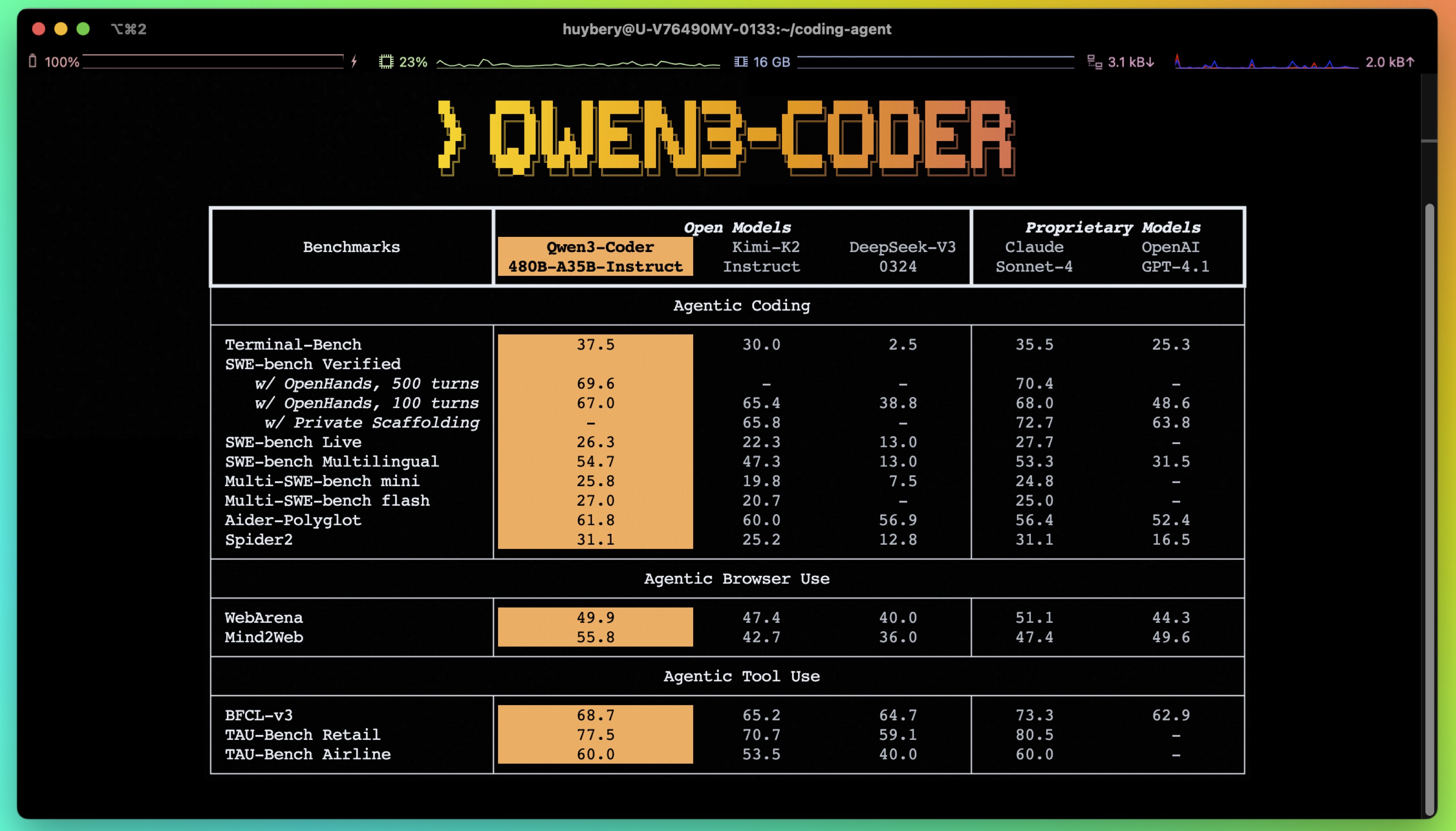

Today, we're announcing Qwen3-Coder, our most agentic code model to date. Qwen3-Coder is available in multiple sizes, but we're excited to introduce its most powerful variant first: Qwen3-Coder-480B-A35B-Instruct, featuring the following key enhancements:

- Significant Performance among open models on Agentic Coding, Agentic Browser-Use, and other foundational coding tasks, achieving results comparable to Claude Sonnet.

- Long-context Capabilities with native support for 256K tokens, extendable up to 1M tokens using YaRN, optimized for repository-scale understanding.

- Agentic Coding support for most platforms, such as Qwen Code and CLINE, featuring a specially designed function call format.
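Going from the native 256K context (262,144 tokens) to 1M tokens corresponds to a YaRN scaling factor of about 4. The sketch below only does that arithmetic; the `rope_scaling` dictionary is an assumption that follows the `transformers` convention used by other Qwen3 checkpoints, so check the official documentation before relying on it.

```python
NATIVE_CONTEXT = 262_144    # native context length from the Model Overview
TARGET_CONTEXT = 1_048_576  # "1M" tokens, assumed here to mean 2**20

# YaRN stretches the rotary position range by this factor.
yarn_factor = TARGET_CONTEXT / NATIVE_CONTEXT

# Hypothetical config.json override (assumed transformers rope_scaling format);
# verify the exact keys and values against the official Qwen3-Coder docs.
rope_scaling = {
    "rope_type": "yarn",
    "factor": yarn_factor,
    "original_max_position_embeddings": NATIVE_CONTEXT,
}

print(rope_scaling)
```

Static YaRN scaling of this kind applies regardless of input length, so it is usually only worth enabling when prompts actually exceed the native window.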

Model Overview

Qwen3-Coder-480B-A35B-Instruct has the following features:

- Type: Causal Language Models

- Training Stage: Pretraining & Post-training

- Number of Parameters: 480B in total and 35B activated

- Number of Layers: 62

- Number of Attention Heads (GQA): 96 for Q and 8 for KV

- Number of Experts: 160

- Number of Activated Experts: 8

- Context Length: 262,144 natively.
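A few derived ratios follow directly from the figures above and help when reasoning about compute and memory. The sketch below is plain arithmetic on the listed numbers. The active-parameter share (about 7.3%) is larger than the expert share (5%), presumably because non-expert weights such as attention and embeddings are used for every token.

```python
# Figures taken from the Model Overview list.
TOTAL_PARAMS_B = 480   # total parameters, in billions
ACTIVE_PARAMS_B = 35   # parameters activated per token, in billions
NUM_EXPERTS = 160
ACTIVE_EXPERTS = 8
Q_HEADS = 96
KV_HEADS = 8

# Share of all weights used for a single token (~0.073).
active_param_share = ACTIVE_PARAMS_B / TOTAL_PARAMS_B

# Share of experts the router selects per token (0.05).
active_expert_share = ACTIVE_EXPERTS / NUM_EXPERTS

# GQA: each key/value head is shared by this many query heads, so the KV cache
# is this many times smaller than with one KV head per query head
# (assuming the same head dimension in both cases).
queries_per_kv_head = Q_HEADS // KV_HEADS

print(f"active parameters per token: {active_param_share:.1%}")
print(f"active experts per token:    {active_expert_share:.1%}")
print(f"query heads per KV head:     {queries_per_kv_head}")
```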

NOTE: This model supports only non-thinking mode and does not generate <think></think> blocks in its output. Meanwhile, specifying enable_thinking=False is no longer required.

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our blog, GitHub, and Documentation.

Quickstart

We advise you to use the latest version of transformers.

With `transformers<4.51.0`, you will encounter the following error:

```text
KeyError: 'qwen3_moe'
```
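To fail early with a clearer message, you can check the installed version before loading the model. This is a minimal, dependency-free sketch that assumes only the 4.51.0 threshold stated above; the helper names are hypothetical, and because it ignores pre-release suffixes such as `.dev0`, the `packaging` library's `Version` class is a more robust choice in real code.

```python
import re

# First transformers release that recognizes the 'qwen3_moe' architecture (per the note above).
MIN_VERSION = (4, 51, 0)


def parse_version(version: str) -> tuple[int, int, int]:
    """Parse 'major.minor.patch[...]' into an int tuple, ignoring suffixes like 'dev0' or 'rc1'."""
    parts = []
    for piece in version.split(".")[:3]:
        match = re.match(r"\d+", piece)
        parts.append(int(match.group()) if match else 0)
    while len(parts) < 3:  # pad short versions such as "5.0"
        parts.append(0)
    return (parts[0], parts[1], parts[2])


def supports_qwen3_moe(version: str) -> bool:
    """Return True if this transformers version should load Qwen3 MoE checkpoints."""
    return parse_version(version) >= MIN_VERSION
```

In practice you would call `supports_qwen3_moe(transformers.__version__)` and raise an informative error when it returns `False`.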

The following code snippet illustrates how to use the model to generate content based on given inputs.

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "Qwen/Qwen3-Coder-480B-A35B-Instruct"

# load the tokenizer and the model
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)

# prepare the model input
prompt = "Write a quick sort algorithm."
messages = [
    {"role": "user", "content": prompt}
]
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True,
)
model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

# conduct text completion
generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=65536
)
# keep only the newly generated tokens (drop the prompt tokens)
output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

content = tokenizer.decode(output_ids, skip_special_tokens=True)

print("content:", content)
```

Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length to a shorter value, such as 32,768.

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers also support Qwen3.

Agentic Coding

Qwen3-Coder excels at tool calling.

You can define or use any tools, as in the following example.

```python
from openai import OpenAI

# Your tool implementation (the argument name matches the schema's "input_num")
def square_the_number(input_num: float) -> float:
    return input_num ** 2

# Define Tools
tools = [
    {
        "type": "function",
        "function": {
            "name": "square_the_number",
            "description": "output the square of the number.",
            "parameters": {
                "type": "object",
                "required": ["input_num"],
                "properties": {
                    "input_num": {
                        "type": "number",
                        "description": "input_num is a number that will be squared"
                    }
                },
            }
        }
    }
]

# Define LLM
client = OpenAI(
    # Use a custom endpoint compatible with OpenAI API
    base_url="http://localhost:8000/v1",  # api_base
    api_key="EMPTY"
)

messages = [{"role": "user", "content": "square the number 1024"}]

completion = client.chat.completions.create(
    messages=messages,
    model="Qwen3-Coder-480B-A35B-Instruct",  # must match the model name your server exposes
    max_tokens=65536,
    tools=tools,
)

print(completion.choices[0])
```
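When the model decides to call a tool, the client has to run the function itself and send the result back as a `tool` message before requesting the next completion. The sketch below assumes the OpenAI chat-completions shape (`message.tool_calls[i].id`, `.function.name`, and `.function.arguments` as a JSON string); the `ToolCall`/`FunctionCall` dataclasses, `TOOL_REGISTRY`, and `run_tool_call` are hypothetical stand-ins so the example runs offline.

```python
import json
from dataclasses import dataclass


# Hypothetical stand-ins mirroring an OpenAI-style tool call object.
@dataclass
class FunctionCall:
    name: str
    arguments: str  # JSON-encoded arguments, as in the chat-completions format


@dataclass
class ToolCall:
    id: str
    function: FunctionCall


def square_the_number(input_num: float) -> float:
    return input_num ** 2


# Map tool names declared in `tools` to the Python callables that implement them.
TOOL_REGISTRY = {"square_the_number": square_the_number}


def run_tool_call(call: ToolCall) -> dict:
    """Execute one tool call and build the 'tool' message to send back to the model."""
    func = TOOL_REGISTRY[call.function.name]
    kwargs = json.loads(call.function.arguments)
    result = func(**kwargs)
    return {
        "role": "tool",
        "tool_call_id": call.id,
        "content": json.dumps(result),
    }


# Simulated call, shaped like what the model would return for "square the number 1024".
call = ToolCall(
    id="call_0",
    function=FunctionCall(name="square_the_number", arguments='{"input_num": 1024}'),
)
tool_message = run_tool_call(call)
print(tool_message)
```

With a real response, you would append `completion.choices[0].message` and then each resulting tool message to `messages`, and call `client.chat.completions.create` again. The chat template wraps consecutive tool messages in a single user turn using `<tool_response>` tags.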

Best Practices

To achieve optimal performance, we recommend the following settings:

- Sampling Parameters: We suggest using `temperature=0.7`, `top_p=0.8`, `top_k=20`, and `repetition_penalty=1.05`.

- Adequate Output Length: We recommend using an output length of 65,536 tokens for most queries, which is adequate for instruct models.
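The standard OpenAI chat-completions API accepts `temperature`, `top_p`, and `max_tokens` directly, but not `top_k` or `repetition_penalty`. A minimal sketch, assuming an OpenAI-compatible server (such as vLLM) that reads those extra fields from the request body via the SDK's `extra_body` argument; the helper name and constants are hypothetical, and other servers may ignore or reject the extra fields.

```python
# Recommended settings from the Best Practices list above.
RECOMMENDED_SAMPLING = {
    "temperature": 0.7,
    "top_p": 0.8,
    "top_k": 20,
    "repetition_penalty": 1.05,
}
MAX_OUTPUT_TOKENS = 65_536

# Sampling parameters the standard OpenAI chat-completions API accepts directly.
OPENAI_STANDARD_KEYS = {"temperature", "top_p"}


def openai_request_kwargs(sampling: dict, max_tokens: int) -> dict:
    """Split sampling settings into standard kwargs plus an `extra_body` dict.

    Assumption: the serving backend reads non-standard fields such as
    top_k and repetition_penalty from the request body.
    """
    standard = {k: v for k, v in sampling.items() if k in OPENAI_STANDARD_KEYS}
    extra = {k: v for k, v in sampling.items() if k not in OPENAI_STANDARD_KEYS}
    return {**standard, "max_tokens": max_tokens, "extra_body": extra}


kwargs = openai_request_kwargs(RECOMMENDED_SAMPLING, MAX_OUTPUT_TOKENS)
print(kwargs)
```

The result can be passed as `client.chat.completions.create(model=..., messages=..., **kwargs)`. With `transformers`, the same values go straight into `model.generate(...)`; pass `do_sample=True` as well unless the model's generation config already enables sampling, otherwise decoding is greedy and the sampling settings have no effect.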

Citation

If you find our work helpful, feel free to cite us.

```bibtex
@misc{qwen3technicalreport,
  title={Qwen3 Technical Report},
  author={Qwen Team},
  year={2025},
  eprint={2505.09388},
  archivePrefix={arXiv},
  primaryClass={cs.CL},
  url={https://arxiv.org/abs/2505.09388},
}
```


Chat template for unsloth/Qwen3-Coder-480B-A35B-Instruct-GGUF:

```jinja
{#- Copyright 2025-present the Unsloth team. All rights reserved. #}
{#- Licensed under the Apache License, Version 2.0 (the "License") #}
{#- Edits made by Unsloth to fix the chat template #}
{% macro render_item_list(item_list, tag_name='required') %}
{%- if item_list is defined and item_list is iterable and item_list | length > 0 %}
{%- if tag_name %}{{- '\n<' ~ tag_name ~ '>' -}}{% endif %}
{{- '[' }}
{%- for item in item_list -%}
{%- if loop.index > 1 %}{{- ", "}}{% endif -%}
{%- if item is string -%}
{{ "`" ~ item ~ "`" }}
{%- else -%}
{{ item }}
{%- endif -%}
{%- endfor -%}
{{- ']' }}
{%- if tag_name %}{{- '</' ~ tag_name ~ '>' -}}{% endif %}
{%- endif %}
{% endmacro %}
{%- if messages[0]["role"] == "system" %}
{%- set system_message = messages[0]["content"] %}
{%- set loop_messages = messages[1:] %}
{%- else %}
{%- set loop_messages = messages %}
{%- endif %}
{%- if not tools is defined %}
{%- set tools = [] %}
{%- endif %}
{%- if system_message is defined %}
{{- "<|im_start|>system\n" + system_message }}
{%- else %}
{%- if tools is iterable and tools | length > 0 %}
{{- "<|im_start|>system\nYou are Qwen, a helpful AI assistant that can interact with a computer to solve tasks." }}
{%- endif %}
{%- endif %}
{%- if tools is iterable and tools | length > 0 %}
{{- "\n\nYou have access to the following functions:\n\n" }}
{{- "<tools>" }}
{%- for tool in tools %}
{%- if tool.function is defined %}
{%- set tool = tool.function %}
{%- endif %}
{{- "\n<function>\n<name>" ~ tool.name ~ "</name>" }}
{{- '\n<description>' ~ (tool.description | trim) ~ '</description>' }}
{{- '\n<parameters>' }}
{%- for param_name, param_fields in tool.parameters.properties|items %}
{{- '\n<parameter>' }}
{{- '\n<name>' ~ param_name ~ '</name>' }}
{%- if param_fields.type is defined %}
{{- '\n<type>' ~ (param_fields.type | string) ~ '</type>' }}
{%- endif %}
{%- if param_fields.description is defined %}
{{- '\n<description>' ~ (param_fields.description | trim) ~ '</description>' }}
{%- endif %}
{{- render_item_list(param_fields.enum, 'enum') }}
{%- set handled_keys = ['type', 'description', 'enum', 'required'] %}
{%- for json_key in param_fields %}
{%- if json_key not in handled_keys %}
{%- set normed_json_key = json_key | replace("-", "_") | replace(" ", "_") | replace("$", "") %}
{%- if param_fields[json_key] is mapping %}
{{- '\n<' ~ normed_json_key ~ '>' ~ (param_fields[json_key] | tojson | safe) ~ '</' ~ normed_json_key ~ '>' }}
{%- else %}
{{- '\n<' ~ normed_json_key ~ '>' ~ (param_fields[json_key] | string) ~ '</' ~ normed_json_key ~ '>' }}
{%- endif %}
{%- endif %}
{%- endfor %}
{{- render_item_list(param_fields.required, 'required') }}
{{- '\n</parameter>' }}
{%- endfor %}
{{- render_item_list(tool.parameters.required, 'required') }}
{{- '\n</parameters>' }}
{%- if tool.return is defined %}
{%- if tool.return is mapping %}
{{- '\n<return>' ~ (tool.return | tojson | safe) ~ '</return>' }}
{%- else %}
{{- '\n<return>' ~ (tool.return | string) ~ '</return>' }}
{%- endif %}
{%- endif %}
{{- '\n</function>' }}
{%- endfor %}
{{- "\n</tools>" }}
{{- '\n\nIf you choose to call a function ONLY reply in the following format with NO suffix:\n\n<tool_call>\n<function=example_function_name>\n<parameter=example_parameter_1>\nvalue_1\n</parameter>\n<parameter=example_parameter_2>\nThis is the value for the second parameter\nthat can span\nmultiple lines\n</parameter>\n</function>\n</tool_call>\n\n<IMPORTANT>\nReminder:\n- Function calls MUST follow the specified format: an inner <function=...></function> block must be nested within <tool_call></tool_call> XML tags\n- Required parameters MUST be specified\n- You may provide optional reasoning for your function call in natural language BEFORE the function call, but NOT after\n- If there is no function call available, answer the question like normal with your current knowledge and do not tell the user about function calls\n</IMPORTANT>' }}
{%- endif %}
{%- if system_message is defined %}
{{- '<|im_end|>\n' }}
{%- else %}
{%- if tools is iterable and tools | length > 0 %}
{{- '<|im_end|>\n' }}
{%- endif %}
{%- endif %}
{%- for message in loop_messages %}
{%- if message.role == "assistant" and message.tool_calls is defined and message.tool_calls is iterable and message.tool_calls | length > 0 %}
{{- '<|im_start|>' + message.role }}
{%- if message.content is defined and message.content is string and message.content | trim | length > 0 %}
{{- '\n' + message.content | trim + '\n' }}
{%- endif %}
{%- for tool_call in message.tool_calls %}
{%- if tool_call.function is defined %}
{%- set tool_call = tool_call.function %}
{%- endif %}
{{- '\n<tool_call>\n<function=' + tool_call.name + '>\n' }}
{%- if tool_call.arguments is defined %}
{%- for args_name, args_value in tool_call.arguments|items %}
{{- '<parameter=' + args_name + '>\n' }}
{%- set args_value = args_value if args_value is string else args_value | string %}
{{- args_value }}
{{- '\n</parameter>\n' }}
{%- endfor %}
{%- endif %}
{{- '</function>\n</tool_call>' }}
{%- endfor %}
{{- '<|im_end|>\n' }}
{%- elif message.role == "user" or message.role == "system" or message.role == "assistant" %}
{{- '<|im_start|>' + message.role + '\n' + message.content + '<|im_end|>' + '\n' }}
{%- elif message.role == "tool" %}
{%- if loop.previtem and loop.previtem.role != "tool" %}
{{- '<|im_start|>user\n' }}
{%- endif %}
{{- '<tool_response>\n' }}
{{- message.content }}
{{- '\n</tool_response>\n' }}
{%- if not loop.last and loop.nextitem.role != "tool" %}
{{- '<|im_end|>\n' }}
{%- elif loop.last %}
{{- '<|im_end|>\n' }}
{%- endif %}
{%- else %}
{{- '<|im_start|>' + message.role + '\n' + message.content + '<|im_end|>\n' }}
{%- endif %}
{%- endfor %}
{%- if add_generation_prompt %}
{{- '<|im_start|>assistant\n' }}
{%- endif %}
{#- Copyright 2025-present the Unsloth team. All rights reserved. #}
{#- Licensed under the Apache License, Version 2.0 (the "License") #}
```
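Unlike JSON-based tool calling, this template renders each call in an XML-like form: `<tool_call>`, then `<function=NAME>`, then one `<parameter=NAME>` block per argument. The sketch below mirrors the template's assistant tool-call branch for a single call and adds a regex parser for extracting calls from raw model output. Both helpers are hypothetical illustrations, not the parser used by Qwen Code or any serving framework: they do not handle malformed output, and parsed values come back as strings, so converting them to the declared parameter types is left to the caller.

```python
import re

TOOL_CALL_RE = re.compile(
    r"<tool_call>\s*<function=([^>\n]+)>(.*?)</function>\s*</tool_call>", re.DOTALL
)
PARAM_RE = re.compile(r"<parameter=([^>\n]+)>\n?(.*?)\n?</parameter>", re.DOTALL)


def render_tool_call(name: str, arguments: dict) -> str:
    """Render one call the way the template's assistant branch does.

    Like the template's `|items` loop, this expects arguments as a mapping.
    """
    out = "\n<tool_call>\n<function=" + name + ">\n"
    for arg_name, arg_value in arguments.items():
        # Non-string values are stringified, as with the template's `| string` filter.
        value = arg_value if isinstance(arg_value, str) else str(arg_value)
        out += "<parameter=" + arg_name + ">\n" + value + "\n</parameter>\n"
    return out + "</function>\n</tool_call>"


def parse_tool_calls(text: str) -> list[tuple[str, dict[str, str]]]:
    """Extract (function name, {parameter: raw string value}) pairs from model output."""
    calls = []
    for func_name, body in TOOL_CALL_RE.findall(text):
        params = {name: value for name, value in PARAM_RE.findall(body)}
        calls.append((func_name.strip(), params))
    return calls


rendered = render_tool_call("square_the_number", {"input_num": 1024})
print(parse_tool_calls(rendered))
```

Keeping values as raw strings matches the format's design: multi-line arguments such as file contents can be emitted verbatim, without JSON escaping.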

Model tree for unsloth/Qwen3-Coder-480B-A35B-Instruct-GGUF, base model: Qwen/Qwen3-Coder-480B-A35B-Instruct