Uploaded model

- Developed by: Agnuxo

- License: apache-2.0

- Finetuned from model: Agnuxo/Phi-3.5

This model was fine-tuned using Unsloth and Hugging Face's TRL library.

Benchmark Results

This model has been fine-tuned for various tasks and evaluated on the following benchmarks:

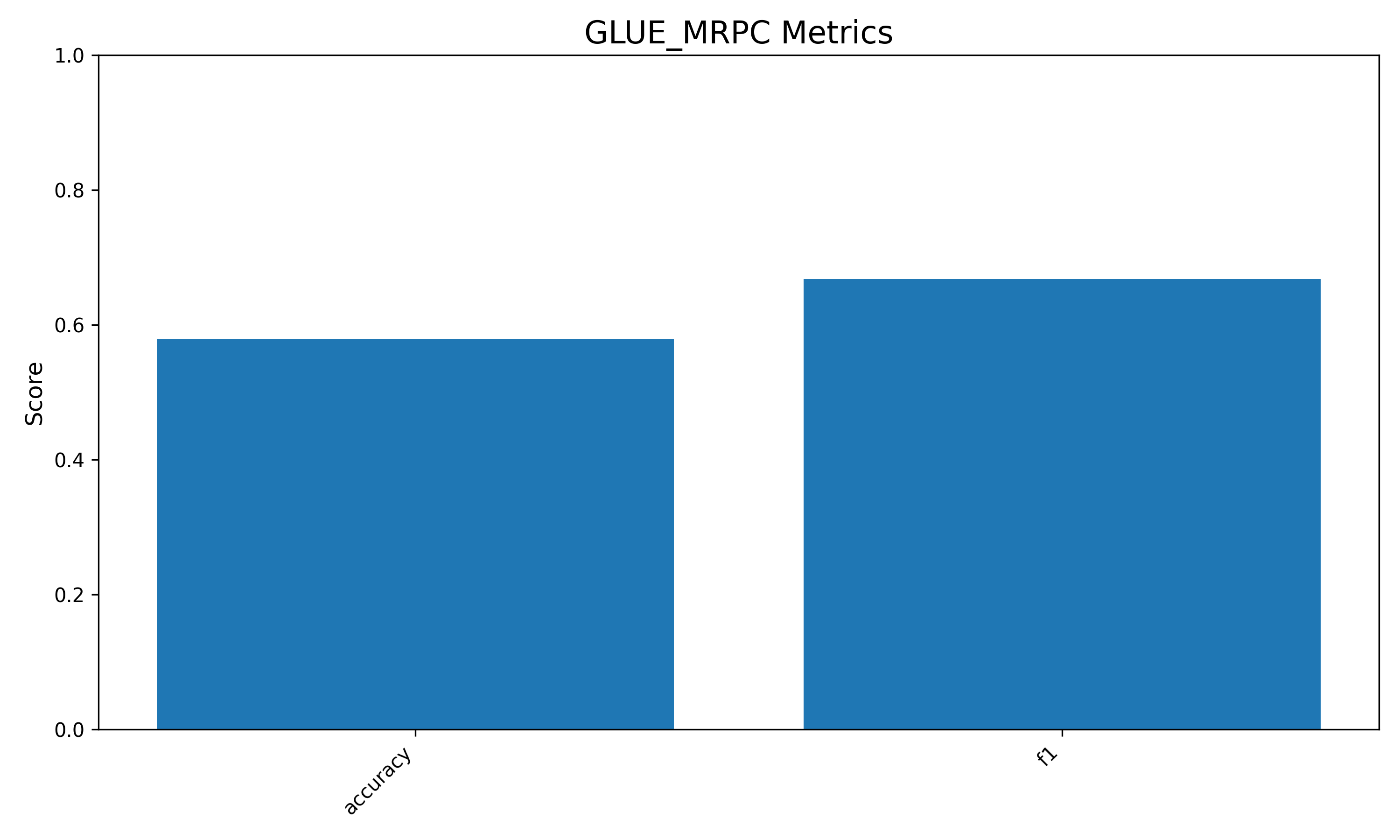

GLUE_MRPC

- Accuracy: 0.5784

- F1: 0.6680
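For readers unfamiliar with the two MRPC metrics, the sketch below shows how accuracy and F1 are conventionally computed for a binary paraphrase task. It is only an illustration: the function name `accuracy_and_f1` and the toy labels are hypothetical, and this card does not say which evaluation script produced the scores above.

```python
def accuracy_and_f1(y_true, y_pred, positive=1):
    """Return (accuracy, F1) for binary labels.

    Hypothetical helper for illustration; not the evaluation code
    used to produce the scores reported in this card.
    """
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label lists must be non-empty and of equal length")

    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)

    accuracy = correct / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return accuracy, f1


# Toy data (made up): 1 = paraphrase, 0 = not a paraphrase.
toy_true = [1, 1, 0, 0, 1]
toy_pred = [1, 0, 0, 1, 1]
toy_accuracy, toy_f1 = accuracy_and_f1(toy_true, toy_pred)
```

Because F1 ignores true negatives, it can sit above accuracy when a model predicts the positive class often, which is consistent with the pattern in the scores above.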

- Model Size: 3,722,585,088 parameters

- Required Memory: 13.87 GB
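As a rough sanity check on the memory figure, the sketch below estimates the weight footprint from the parameter count. The helper `weight_memory_gib` and the bytes-per-parameter table are assumptions for illustration. Arithmetically, 13.87 matches 4 bytes per parameter (32-bit floats) expressed in GiB, though the card does not state how the figure was derived. The estimate covers weights only, not activations or the KV cache.

```python
PARAM_COUNT = 3_722_585_088  # parameter count reported in this card

# Bytes per parameter for common dtypes (illustrative table).
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "bfloat16": 2}


def weight_memory_gib(param_count: int, dtype: str) -> float:
    """Estimate weight memory in GiB (1 GiB = 1024**3 bytes)."""
    return param_count * BYTES_PER_PARAM[dtype] / 1024**3


fp32_gib = weight_memory_gib(PARAM_COUNT, "float32")
fp16_gib = weight_memory_gib(PARAM_COUNT, "float16")
print(f"float32: {fp32_gib:.2f} GiB, float16: {fp16_gib:.2f} GiB")
```

Under this estimate, loading the weights in 16-bit precision would need roughly half the memory of the 32-bit figure.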

For more details, visit my GitHub.

Thanks for your interest in this model!

Model tree for Agnuxo/Phi-3.5-ORPO_tron-Instruct_CODE_Python_English_Asistant-16bit-v2

- Base model: microsoft/Phi-3.5-mini-instruct

- Finetuned: unsloth/Phi-3.5-mini-instruct
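A minimal loading sketch with the `transformers` library follows. It assumes the repository named above ships standard weights plus a tokenizer with a chat template, and that `torch` and `transformers` are installed. None of this is confirmed by the card, and the `generate` helper is a hypothetical name.

```python
MODEL_ID = "Agnuxo/Phi-3.5-ORPO_tron-Instruct_CODE_Python_English_Asistant-16bit-v2"


def generate(prompt: str, max_new_tokens: int = 128) -> str:
    """Load the model and answer one prompt (hypothetical helper)."""
    # Imported here so that defining the function needs no heavy dependencies.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    device = "cuda" if torch.cuda.is_available() else "cpu"
    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    # Assumption: the checkpoint loads in 16-bit precision, as the name suggests.
    model = AutoModelForCausalLM.from_pretrained(
        MODEL_ID, torch_dtype=torch.float16
    ).to(device)

    messages = [{"role": "user", "content": prompt}]
    inputs = tokenizer.apply_chat_template(
        messages,
        add_generation_prompt=True,
        return_tensors="pt",
        return_dict=True,
    ).to(device)

    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Drop the prompt tokens and decode only the newly generated ones.
    new_tokens = output_ids[0][inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate("Write a Python function that reverses a string.")` downloads several gigabytes of weights on first use. 16-bit inference on CPU may be slow, so a GPU is the more practical target.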