metadata

license: other

license_name: flux-1-dev-non-commercial-license

license_link: >-

https://huggingface.co/black-forest-labs/FLUX.1-Kontext-dev/blob/main/LICENSE.md

language:

- en

base_model:

- black-forest-labs/FLUX.1-Kontext-dev

pipeline_tag: image-to-image

tags:

- gguf-node

- gguf-connector

widget:

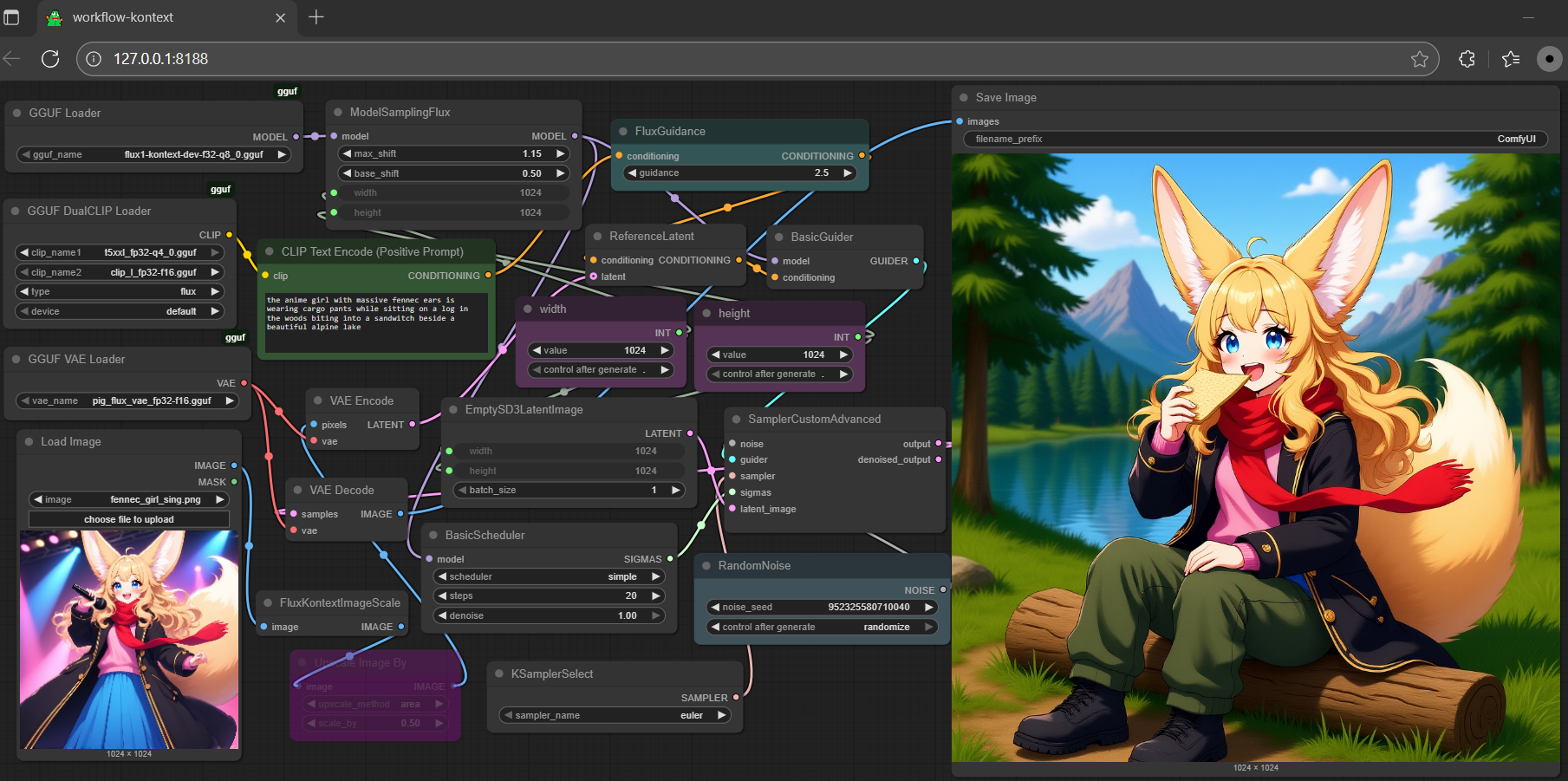

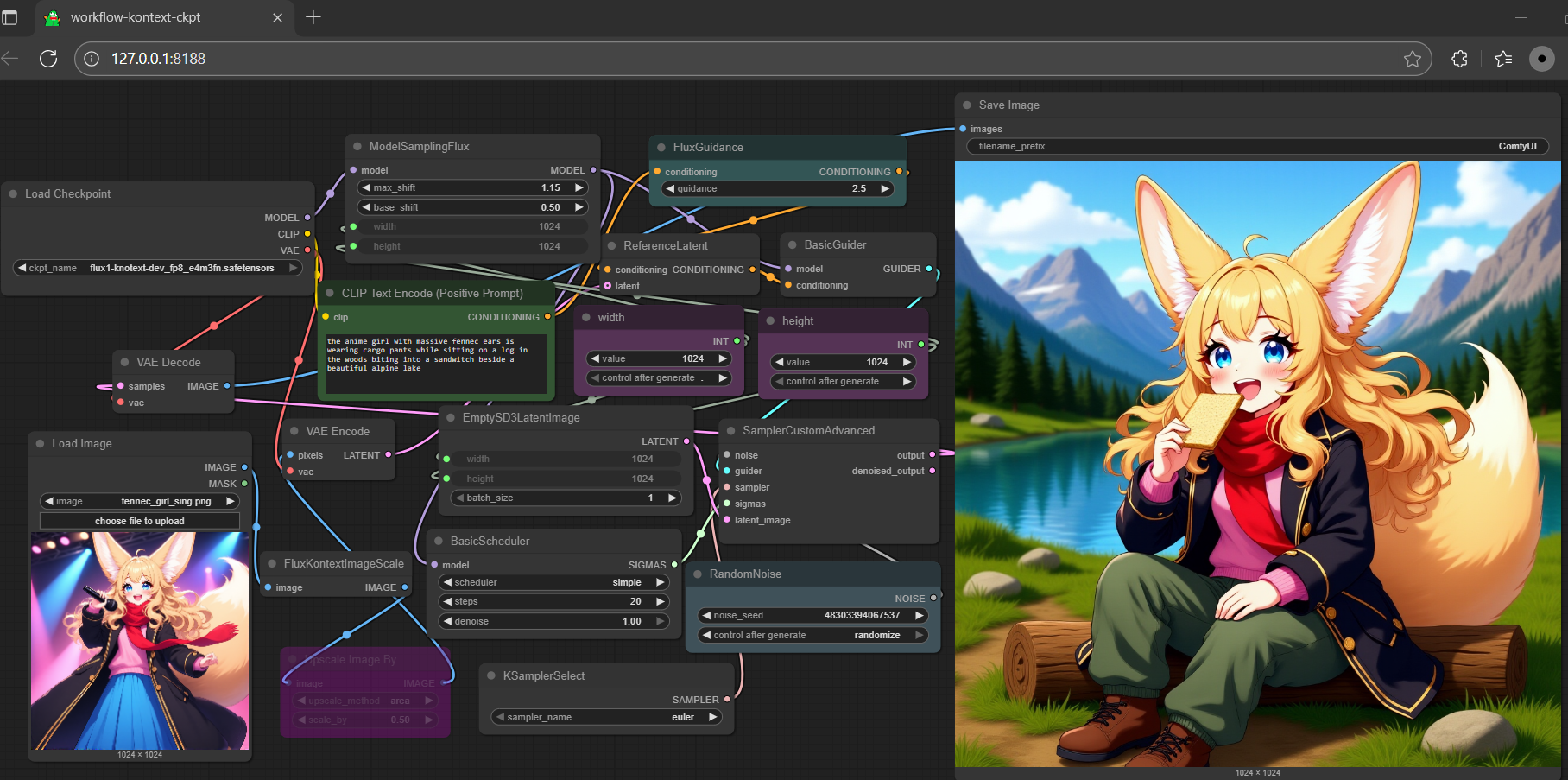

- text: >-

the anime girl with massive fennec ears is wearing cargo pants while

sitting on a log in the woods biting into a sandwitch beside a beautiful

alpine lake

output:

url: samples\ComfyUI_00001_.png

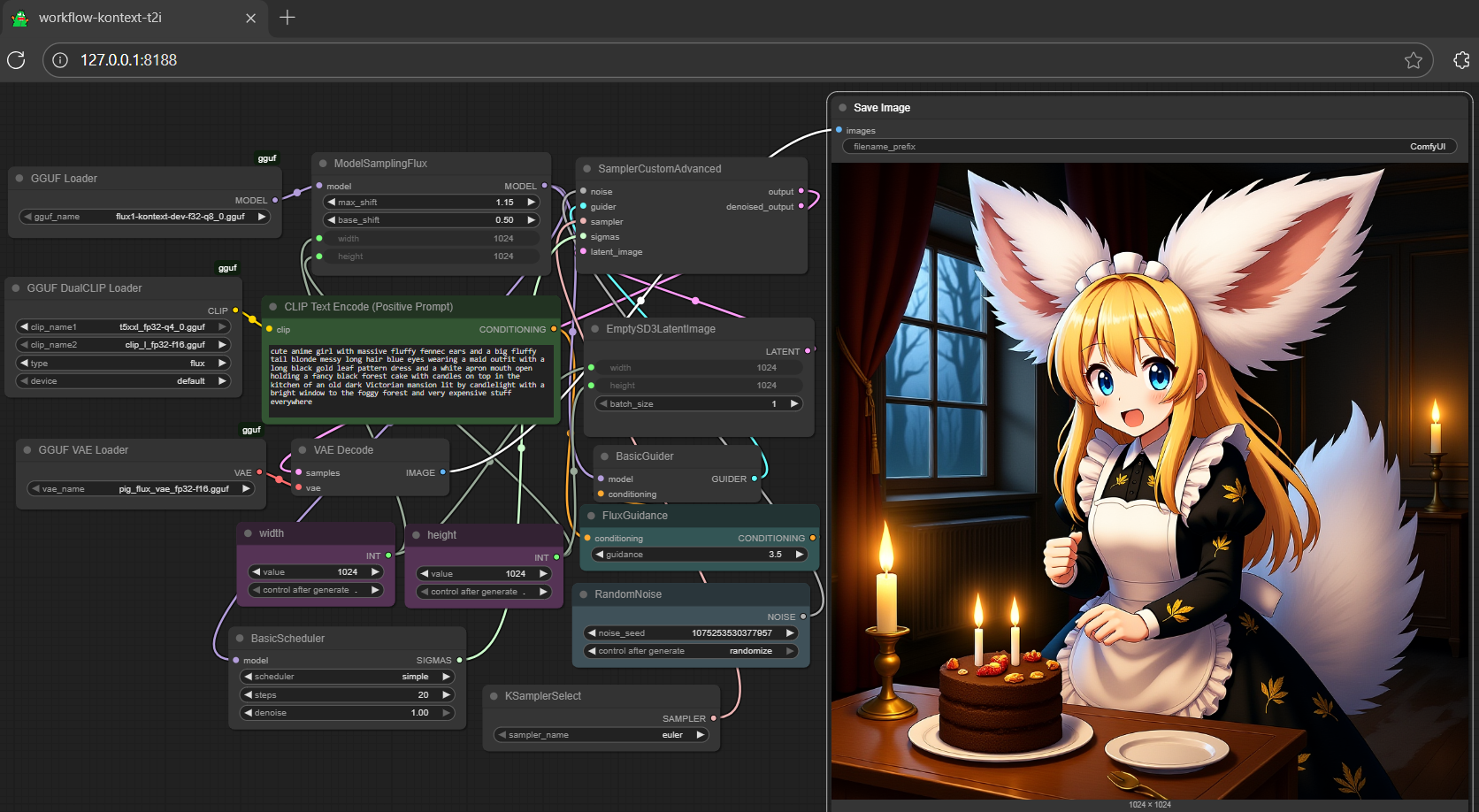

- text: >-

the anime girl with massive fennec ears is wearing cargo pants while

sitting on a log in the woods biting into a sandwitch beside a beautiful

alpine lake

output:

url: samples\ComfyUI_00002_.png

- text: >-

the anime girl with massive fennec ears is wearing cargo pants while

sitting on a log in the woods biting into a sandwitch beside a beautiful

alpine lake

output:

url: samples\ComfyUI_00003_.png

gguf quantized version of kontext

- drag kontext to > ./ComfyUI/models/diffusion_models
- drag clip-l, t5xxl to > ./ComfyUI/models/text_encoders
- drag pig to > ./ComfyUI/models/vae
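The placement above can also be scripted. The sketch below copies downloaded .gguf files into those three folders by matching a keyword in each file name. The keyword table, the place_gguf_files helper and the download folder are assumptions for illustration, not part of gguf-node or ComfyUI, so adjust them to the files you actually downloaded.

```python
import shutil
from pathlib import Path

# Hypothetical keyword -> ComfyUI subfolder table mirroring the list above.
# The keywords are assumptions about the downloaded file names; edit to match yours.
TARGETS = {
    "kontext": "diffusion_models",
    "clip_l": "text_encoders",
    "t5xxl": "text_encoders",
    "pig": "vae",
}


def place_gguf_files(download_dir: str, comfyui_root: str = "./ComfyUI") -> dict:
    """Copy each *.gguf in download_dir into ComfyUI/models/<subfolder>.

    Returns {file name: destination path} for every file that matched a keyword;
    files that match no keyword are left untouched.
    """
    models_dir = Path(comfyui_root) / "models"
    placed = {}
    for src in sorted(Path(download_dir).glob("*.gguf")):
        lowered = src.name.lower()
        for keyword, subfolder in TARGETS.items():
            if keyword in lowered:
                dest_dir = models_dir / subfolder
                dest_dir.mkdir(parents=True, exist_ok=True)
                dest = dest_dir / src.name
                shutil.copy2(src, dest)  # copy keeps the original download intact
                placed[src.name] = str(dest)
                break  # first matching keyword wins
    return placed
```

For example, place_gguf_files("./downloads") would copy a file named like t5xxl_fp16-q4_0.gguf into ./ComfyUI/models/text_encoders. Copying keeps the originals; swapping shutil.copy2 for shutil.move avoids doubling disk usage for multi-gigabyte files.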

- Prompt
  - the anime girl with massive fennec ears is wearing cargo pants while sitting on a log in the woods biting into a sandwich beside a beautiful alpine lake

- Prompt
  - the anime girl with massive fennec ears is wearing a maid outfit with a long black gold leaf pattern dress and a white apron mouth open holding a fancy black forest cake with candles on top in the kitchen of an old dark Victorian mansion lit by candlelight with a bright window to the foggy forest and very expensive stuff everywhere

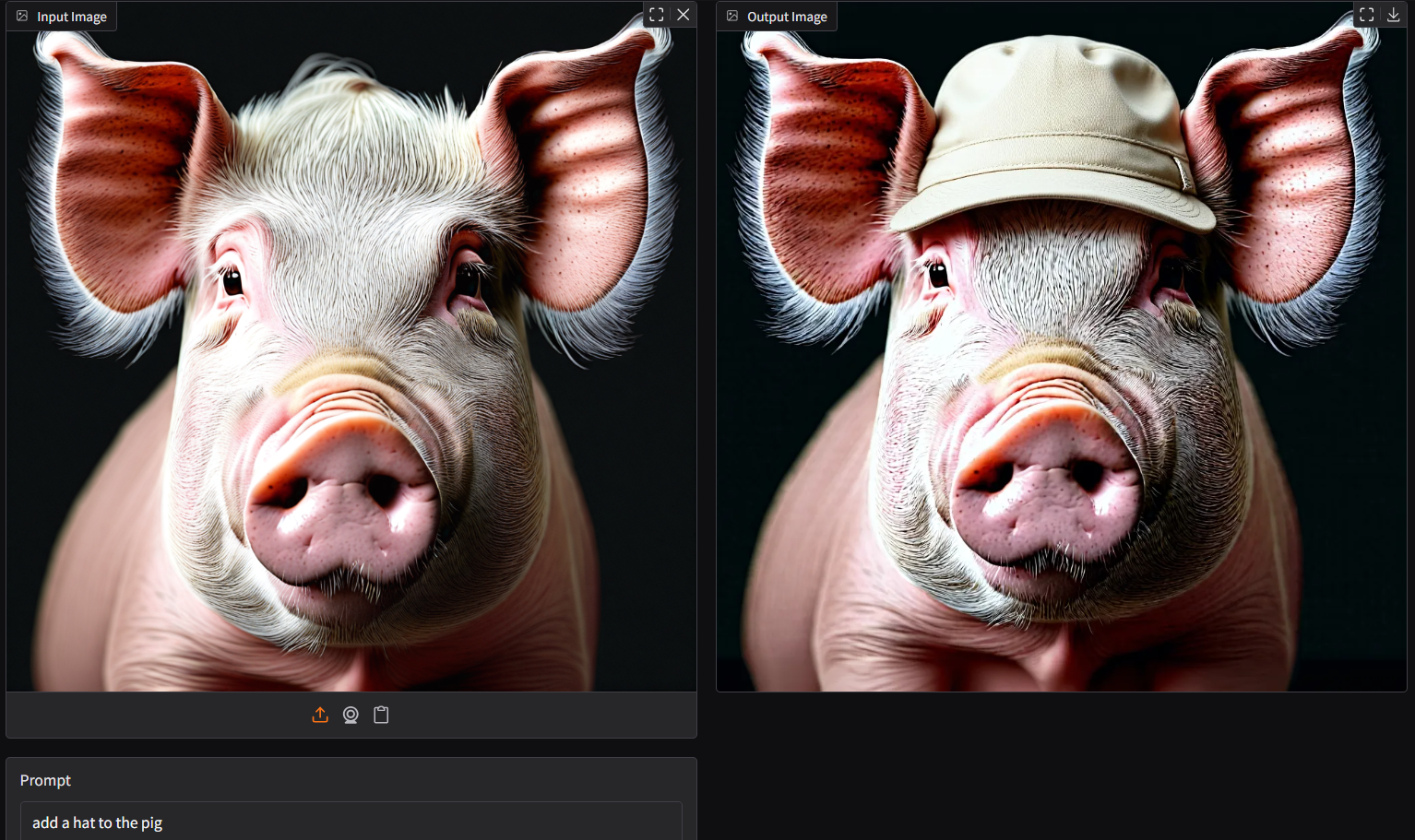

- Prompt
  - add a hat to the pig

- no safetensors needed anymore; everything is gguf (model + encoder + vae)

- the full gguf set works with gguf-node (see the last item in the reference list at the very end)

- get more t5xxl gguf encoders either here or here

extra: scaled safetensors (alternative 1)

- get the all-in-one checkpoint here (model, clips and vae embedded)

- another option: get the multi matrix scaled fp8 from comfyui here, or the e4m3fn fp8 here, with separate scaled versions of clip-l, t5xxl and vae

run it with diffusers🧨 (alternative 2)

- you might need the latest diffusers (git version) for FluxKontextPipeline to work; upgrade your diffusers with:

```
pip install git+https://github.com/huggingface/diffusers.git
```

- since FluxKontextTransformer3DModel.from_single_file is not ready yet, we opt to run the scaled nf4 version with the gguf t5xxl encoder for now; see the example below:

import torch

from transformers import T5EncoderModel

from diffusers import FluxKontextPipeline

from diffusers.utils import load_image

text_encoder = T5EncoderModel.from_pretrained(

"calcuis/kontext-gguf",

gguf_file="t5xxl_fp16-q4_0.gguf",

torch_dtype=torch.bfloat16,

)

pipe = FluxKontextPipeline.from_pretrained(

"calcuis/kontext-gguf",

text_encoder_2=text_encoder,

torch_dtype=torch.bfloat16

).to("cuda")

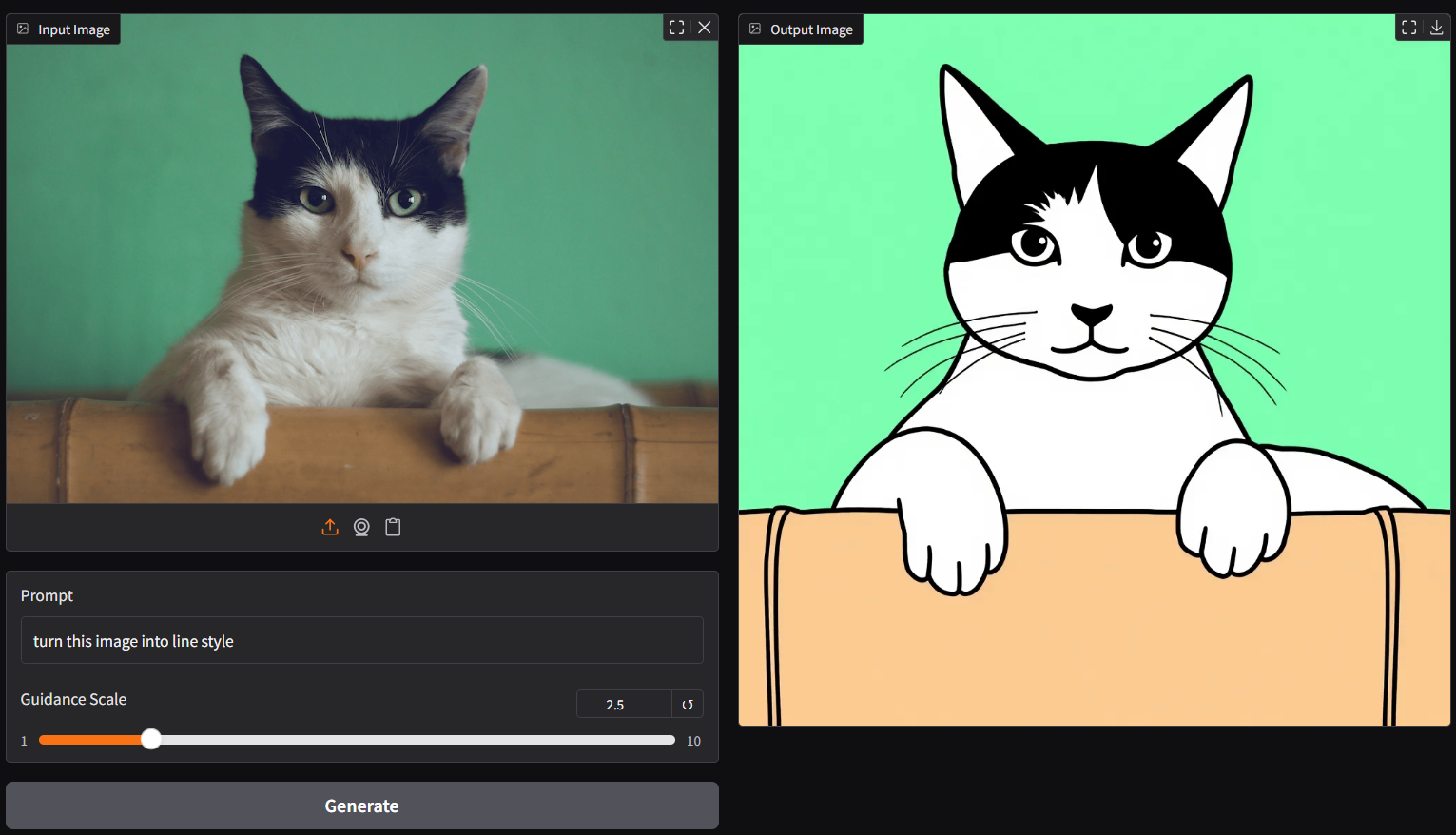

input_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/cat.png")

image = pipe(

image=input_image,

prompt="Add a hat to the cat",

guidance_scale=2.5

).images[0]

image.save("output.png")

- tip: if your machine doesn't have enough vram, we suggest running it with gguf-node via comfyui (plan a); otherwise you might need to wait a very long time; this is always a winner-takes-all game
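Before running the diffusers example, a quick way to see whether the installed diffusers build already exposes FluxKontextPipeline (and so whether the git upgrade above is needed) is sketched below. It assumes only that a build which ships the pipeline exports it at the package top level, as the import in the example does; the helper names are hypothetical.

```python
import importlib
from importlib import metadata


def has_flux_kontext() -> bool:
    """Return True if the installed diffusers exposes FluxKontextPipeline (hypothetical helper)."""
    try:
        diffusers = importlib.import_module("diffusers")
        # diffusers resolves pipeline classes lazily, so this may import submodules
        return hasattr(diffusers, "FluxKontextPipeline")
    except Exception:
        # diffusers is missing or failed to import
        return False


def diffusers_version() -> str:
    """Installed diffusers version string, or 'not installed'."""
    try:
        return metadata.version("diffusers")
    except metadata.PackageNotFoundError:
        return "not installed"


if __name__ == "__main__":
    print("diffusers", diffusers_version(), "| FluxKontextPipeline:", has_flux_kontext())
```

If this prints False, upgrading with the pip command above is the first thing to try.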

run it with gguf-connector (alternative 3)

- simply execute the command below in console/terminal

```
ggc k2
```

- note: on first launch, it automatically pulls the required model file(s) from this repo into the local cache; after that, you can run it entirely offline, i.e., from the local URL http://127.0.0.1:7860 with the lazy webui
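Once ggc k2 has finished its first-run download, a plain HTTP probe is a quick way to confirm that the lazy webui is serving on the local URL. This is a minimal sketch using only the Python standard library; the webui_is_up helper and its timeout are hypothetical, and it only checks that something answers HTTP on that port, not that the model has finished loading.

```python
import http.client
import urllib.error
import urllib.request


def webui_is_up(url: str = "http://127.0.0.1:7860", timeout: float = 2.0) -> bool:
    """Return True if something answers HTTP at url with a non-error status (hypothetical helper)."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 400
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        # connection refused, timed out, error status, or not speaking HTTP
        return False
```

A False result right after launch may simply mean the webui is still starting, so polling it a few times before giving up is reasonable.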

update

- clip_l_v2: text_projection.weight added
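To check whether a given clip-l gguf file already includes text_projection.weight, the sketch below reads only the tensor names from the file header. It assumes the GGUF v2/v3 little-endian layout (magic, version, tensor and metadata counts, typed key/value pairs, then tensor infos) and never touches the tensor data; the gguf Python package offers a fuller reader, and the helper names here are hypothetical.

```python
import struct
from typing import BinaryIO, List

# GGUF metadata value type id -> struct format for fixed-size scalars (little-endian)
_SCALAR_FMT = {
    0: "<B", 1: "<b", 2: "<H", 3: "<h", 4: "<I", 5: "<i",
    6: "<f", 7: "<?", 10: "<Q", 11: "<q", 12: "<d",
}
_STRING, _ARRAY = 8, 9


def _read(f: BinaryIO, fmt: str):
    size = struct.calcsize(fmt)
    data = f.read(size)
    if len(data) != size:
        raise ValueError("truncated GGUF header")
    return struct.unpack(fmt, data)[0]


def _read_string(f: BinaryIO) -> str:
    # GGUF strings: uint64 byte length followed by utf-8 bytes
    length = _read(f, "<Q")
    return f.read(length).decode("utf-8")


def _skip_value(f: BinaryIO, vtype: int) -> None:
    # consume one typed metadata value without keeping it
    if vtype in _SCALAR_FMT:
        _read(f, _SCALAR_FMT[vtype])
    elif vtype == _STRING:
        _read_string(f)
    elif vtype == _ARRAY:
        elem_type = _read(f, "<I")
        count = _read(f, "<Q")
        for _ in range(count):
            _skip_value(f, elem_type)
    else:
        raise ValueError(f"unknown GGUF value type {vtype}")


def gguf_tensor_names(path: str) -> List[str]:
    """List tensor names stored in a GGUF v2/v3 file (hypothetical helper)."""
    with open(path, "rb") as f:
        if f.read(4) != b"GGUF":
            raise ValueError("not a GGUF file")
        if _read(f, "<I") < 2:
            raise ValueError("GGUF v1 uses 32-bit counts; not handled here")
        tensor_count = _read(f, "<Q")
        kv_count = _read(f, "<Q")
        for _ in range(kv_count):
            _read_string(f)                 # metadata key
            _skip_value(f, _read(f, "<I"))  # typed metadata value
        names = []
        for _ in range(tensor_count):
            names.append(_read_string(f))
            n_dims = _read(f, "<I")
            for _ in range(n_dims):
                _read(f, "<Q")              # dimension sizes
            _read(f, "<I")                  # ggml tensor type
            _read(f, "<Q")                  # offset into the data section
        return names
```

For example, "text_projection.weight" in gguf_tensor_names("clip_l_v2.gguf") should evaluate to True for the updated encoder, assuming that is the local file name.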

reference

- base model from black-forest-labs

- comfyui from comfyanonymous

- gguf-connector (pypi)

- gguf-node (pypi|repo|pack)