IberianLLM-7B-Instruct Model Card

The IberianLLM-7B model is released under a permissive Apache 2.0 license, allowing both research and commercial use. Along with the open weights, all training scripts and configuration files are made publicly available in this GitHub repository.

DISCLAIMER: This model is a first proof-of-concept designed to demonstrate the instruction-following capabilities of recently released base models. It has been optimized to engage in conversation but has NOT been aligned through RLHF to filter or avoid sensitive topics. As a result, it may generate harmful or inappropriate content. The team is actively working to enhance its performance through further instruction and alignment with RL techniques.

Model Details

Description

Transformer-based decoder-only language model that has been pre-trained from scratch on 1.59 trillion tokens of highly curated data. The pre-training corpus contains text in 8 Iberian languages, English and code.

Architecture

| Total Parameters | 7,768,117,248 |

| Embedding Parameters | 1,048,576,000 |

| Layers | 32 |

| Hidden size | 4,096 |

| Attention heads | 32 |

| Context length | 8,192 |

| Vocabulary size | 256,000 |

| Precision | bfloat16 |

| Embedding type | RoPE |

| Activation Function | SwiGLU |

| Layer normalization | RMS Norm |

| Flash attention | ✅ |

| Grouped Query Attention | ✅ |

| Num. query groups | 8 |
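Some of the figures in the table can be cross-checked with simple arithmetic. The sketch below reproduces the embedding parameter count and estimates the key/value cache footprint under grouped query attention. It assumes that the head dimension equals the hidden size divided by the number of attention heads and that the cache is stored in bfloat16; neither is stated in this card.

```python
# Values taken from the architecture table above.
vocab_size = 256_000
hidden_size = 4_096
num_layers = 32
num_heads = 32
num_kv_groups = 8          # "Num. query groups" in the table
context_length = 8_192
bytes_per_value = 2        # bfloat16

# Embedding matrix: one hidden-size vector per vocabulary entry.
embedding_params = vocab_size * hidden_size

# ASSUMPTION: head dimension = hidden size / attention heads (not stated in the card).
head_dim = hidden_size // num_heads

# KV cache per token: keys and values (x2) for every layer and every KV group.
kv_bytes_per_token = 2 * num_layers * num_kv_groups * head_dim * bytes_per_value
kv_bytes_full_context = kv_bytes_per_token * context_length

# For comparison: the same cache if every attention head kept its own keys and values.
mha_bytes_full_context = kv_bytes_full_context * (num_heads // num_kv_groups)

print(f"embedding parameters: {embedding_params:,}")
print(f"KV cache per token:   {kv_bytes_per_token / 1024:.0f} KiB")
print(f"KV cache at 8,192:    {kv_bytes_full_context / 2**30:.0f} GiB (GQA)")
print(f"                      {mha_bytes_full_context / 2**30:.0f} GiB (without GQA)")
```

Under these assumptions, sharing keys and values across 8 groups rather than 32 heads reduces the cache by a factor of four for a single full-length sequence.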

Intended Use

Direct Use

This model is intended for both research and commercial use in any of the languages included in the training data. The base models are intended either for language generation or to be further fine-tuned for specific use-cases. This instruction-tuned version can be used as a general-purpose assistant, as long as the user is fully aware of the model’s limitations.

Out-of-scope Use

The model is not intended for malicious activities, such as harming others or violating human rights. Any downstream application must comply with current laws and regulations. Irresponsible usage in production environments without proper risk assessment and mitigation is also discouraged.

Hardware and Software

Training Framework

Pre-training was conducted using NVIDIA’s NeMo Framework, which leverages PyTorch Lightning for efficient model training in highly distributed settings.

The instruction-tuned versions were produced with FastChat.

Compute Infrastructure

All models were trained on MareNostrum 5, a pre-exascale EuroHPC supercomputer hosted and operated by Barcelona Supercomputing Center.

The accelerated partition is composed of 1,120 nodes with the following specifications:

- 4x Nvidia Hopper GPUs with 64 GB of HBM2 memory

- 2x Intel Sapphire Rapids 8460Y+ at 2.3 GHz and 32c each (64 cores)

- 4x NDR200 (BW per node 800 Gb/s)

- 512 GB of Main memory (DDR5)

- 460 GB of NVMe storage

| Model | Nodes | GPUs |

|---|---|---|

| 7B | 64 | 256 |

How to use

The instruction-following models use the commonly adopted ChatML template:

{%- if messages[0]['role'] == 'system' %}{%- set system_message = messages[0]['content'] %}{%- set loop_messages = messages[1:] %}{%- else %}{%- set system_message = 'SYSTEM MESSAGE' %}{%- set loop_messages = messages %}{%- endif %}{%- if not date_string is defined %}{%- set date_string = '2024-09-30' %}{%- endif %}{{ '<|im_start|>system\n' + system_message + '<|im_end|>\n' }}{% for message in loop_messages %}{%- if (message['role'] != 'user') and (message['role'] != 'assistant')%}{{ raise_exception('Only user and assitant roles are suported after the initial optional system message.') }}{% endif %}{% if (message['role'] == 'user') != (loop.index0 % 2 == 0) %}{{ raise_exception('After the optional system message, conversation roles must alternate user/assistant/user/assistant/...') }}{% endif %}{{'<|im_start|>' + message['role'] + '\n' + message['content'] + '<|im_end|>' + '\n'}}{% endfor %}{% if add_generation_prompt %}{{ '<|im_start|>assistant\n' }}{% endif %}

Here, system_message guides the model during generation, and date_string can be set so that the model is able to respond with the current date.

The exact same chat template should be used for an enhanced conversational experience. The easiest way to apply it is by using the tokenizer's built-in functions, as shown in the following snippet.

from datetime import datetime

import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

model_id = "BSC-LT/IberianLLM-7B-Instruct"

text = "At what temperature does water boil?"

# Load the tokenizer and the model weights in bfloat16.
tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    device_map="auto",
    torch_dtype=torch.bfloat16
)

message = [ { "role": "user", "content": text } ]
date_string = datetime.today().strftime('%Y-%m-%d')

# Render the conversation with the chat template, ending with the assistant header.
prompt = tokenizer.apply_chat_template(
    message,
    tokenize=False,
    add_generation_prompt=True,
    date_string=date_string
)

# The template already inserts the special tokens, so none are added here.
inputs = tokenizer.encode(prompt, add_special_tokens=False, return_tensors="pt")
outputs = model.generate(input_ids=inputs.to(model.device), max_new_tokens=200)

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

Using this template, each turn is preceded by a <|im_start|> delimiter and the role of the entity (either user, for content supplied by the user, or assistant for LLM responses), and finished with the <|im_end|> token.
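To make the resulting layout concrete, the following minimal sketch rebuilds the prompt string in plain Python, mirroring the logic of the Jinja template above. The function name `build_chatml_prompt` is hypothetical and not part of any library; in practice the tokenizer's `apply_chat_template` remains the recommended route.

```python
def build_chatml_prompt(messages, system_message="SYSTEM MESSAGE",
                        add_generation_prompt=True):
    """Render a conversation following the ChatML template shown above."""
    # An optional leading system message replaces the default one.
    if messages and messages[0]["role"] == "system":
        system_message = messages[0]["content"]
        messages = messages[1:]

    parts = [f"<|im_start|>system\n{system_message}<|im_end|>\n"]
    for index, message in enumerate(messages):
        role = message["role"]
        if role not in ("user", "assistant"):
            raise ValueError("Only user and assistant roles are supported "
                             "after the optional system message.")
        # Roles must alternate, starting with the user.
        if (role == "user") != (index % 2 == 0):
            raise ValueError("Conversation roles must alternate "
                             "user/assistant/user/assistant/...")
        parts.append(f"<|im_start|>{role}\n{message['content']}<|im_end|>\n")

    # Open the assistant turn so that generation continues from there.
    if add_generation_prompt:
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


prompt = build_chatml_prompt(
    [{"role": "user", "content": "At what temperature does water boil?"}]
)
print(prompt)
```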

Data

Pretraining Data

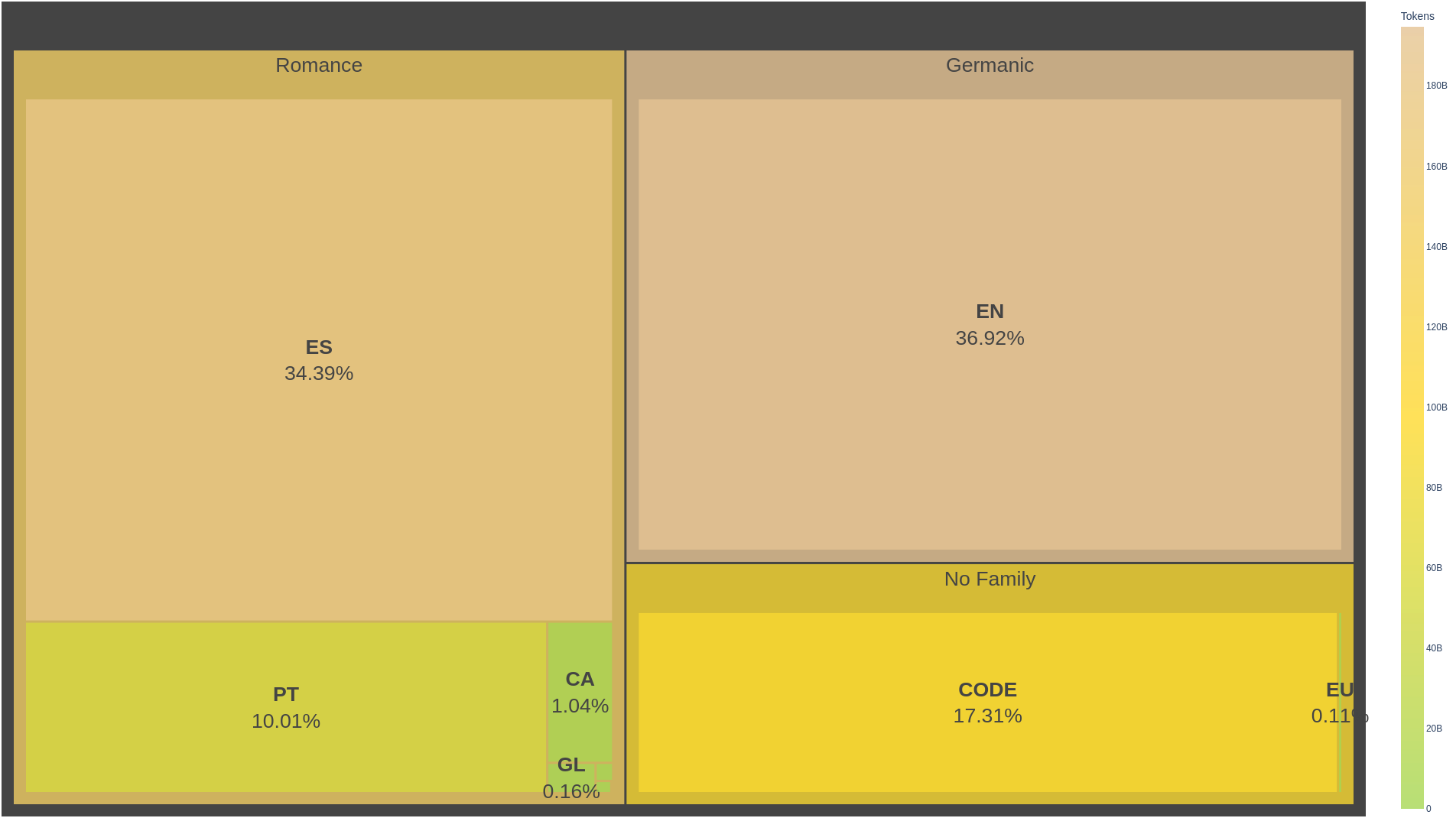

The training corpus consists of 0.53 trillion tokens, including 8 Iberian languages, English and 92 programming languages. It amounts to a total of 6,7TB of pre-processed text. The following image showcases the employed data distribution:

This multilingual corpus is drawn mainly from Colossal OSCAR and FineWeb-Edu, each of which accounts for 31% of the total data distribution. Colossal OSCAR covers all languages except English, while FineWeb-Edu consists solely of English data. Starcoder follows with 17.3% and supplies all of the code data. The next largest source is BNE, with 11.6%, which contains mostly Spanish data but also Basque, Catalan and Galician. The remaining share, less than 10%, comes from smaller sources in various languages.

The full list of sources is given below.

Data Sources

| Dataset | Language | Source |

|---|---|---|

| Colossal OSCAR 1.0 | ca, es, eu, gl, pt | (Brack et al., 2024) |

| Wikimedia dumps | ca, en, es, eu, gl, pt | Link |

| OpenSubtitlesv2016 | ca, en, es, eu, gl, pt | (Lison & Tiedemann, 2016) |

| EurLEX-Resources | ca, en, es, eu, gl, pt | Link |

| MC4-Legal | ca, en, es, eu, gl, pt | Link |

| FineWeb-Edu | en | (Penedo et al., 2024) |

| CATalog | ca | (Palomar-Giner et al., 2024) |

| Spanish Crawling | ca, es, eu, gl | Relevant Spanish websites crawling |

| Starcoder | code | (Li et al., 2023) |

| BIGPATENT | en | (Sharma et al., 2019) |

| PG-19 | en | (Rae et al., 2019) |

| proof-pile | en | Link |

| Spanish Legal Domain Corpora | es | (Gutiérrez-Fandiño et al., 2021) |

| HPLTDatasets v1 - Spanish | es | (de Gibert et al., 2024) |

| Legal | es | Internally generated legal dataset: BOE, BORME, Senado, Congreso, Spanish court orders, DOGC |

| Biomedical | es | Internally generated biomedical dataset: Wikipedia LS, Pubmed, MeSpEn, patents, clinical cases, medical crawler |

| Scientific | es | Internally generated scientific dataset: Dialnet, Scielo, CSIC, TDX, BSC, UCM |

| EusCrawl (filtered: no Wikipedia, no NC-licenses) | eu | (Artetxe et al., 2022) |

| Latxa Corpus v1.1 | eu | (Etxaniz et al., 2024) Link |

| CorpusNÓS | gl | (de-Dios-Flores et al., 2024) |

| ParlamentoPT | pt | (Rodrigues et al., 2023) |

References

- Abadji, J., Suárez, P. J. O., Romary, L., & Sagot, B. (2021). Ungoliant: An optimized pipeline for the generation of a very large-scale multilingual web corpus (H. Lüngen, M. Kupietz, P. Bański, A. Barbaresi, S. Clematide, & I. Pisetta, Eds.; pp. 1–9). Leibniz-Institut für Deutsche Sprache. Link

- Artetxe, M., Aldabe, I., Agerri, R., Perez-de-Viñaspre, O., & Soroa, A. (2022). Does Corpus Quality Really Matter for Low-Resource Languages?

- Bañón, M., Esplà-Gomis, M., Forcada, M. L., García-Romero, C., Kuzman, T., Ljubešić, N., van Noord, R., Sempere, L. P., Ramírez-Sánchez, G., Rupnik, P., Suchomel, V., Toral, A., van der Werff, T., & Zaragoza, J. (2022). MaCoCu: Massive collection and curation of monolingual and bilingual data: Focus on under-resourced languages. Proceedings of the 23rd Annual Conference of the European Association for Machine Translation, 303–304. Link

- Brack, M., Ostendorff, M., Suarez, P. O., Saiz, J. J., Castilla, I. L., Palomar-Giner, J., Shvets, A., Schramowski, P., Rehm, G., Villegas, M., & Kersting, K. (2024). Community OSCAR: A Community Effort for Multilingual Web Data. Link

- Penedo, G., Kydlíček, H., Ben Allal, L., Lozhkov, A., Mitchell, M., Raffel, C., Von Werra, L., & Wolf, T. (2024). The FineWeb Datasets: Decanting the Web for the Finest Text Data at Scale. In A. Globerson, L. Mackey, D. Belgrave, A. Fan, U. Paquet, J. Tomczak, & C. Zhang (Eds.), Advances in Neural Information Processing Systems (Vol. 37, pp. 30811–30849). Curran Associates, Inc. Link

- de Gibert, O., Nail, G., Arefyev, N., Bañón, M., van der Linde, J., Ji, S., Zaragoza-Bernabeu, J., Aulamo, M., Ramírez-Sánchez, G., Kutuzov, A., Pyysalo, S., Oepen, S., & Tiedemann, J. (2024). A New Massive Multilingual Dataset for High-Performance Language Technologies (arXiv:2403.14009). arXiv. Link

- Dodge, J., Sap, M., Marasović, A., Agnew, W., Ilharco, G., Groeneveld, D., Mitchell, M., & Gardner, M. (2021). Documenting Large Webtext Corpora: A Case Study on the Colossal Clean Crawled Corpus. In M.-F. Moens, X. Huang, L. Specia, & S. W. Yih (Eds.), Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing (pp. 1286–1305). Association for Computational Linguistics. Link

- Etxaniz, J., Sainz, O., Perez, N., Aldabe, I., Rigau, G., Agirre, E., Ormazabal, A., Artetxe, M., & Soroa, A. (2024). Latxa: An Open Language Model and Evaluation Suite for Basque. [Link](https://arxiv.org/abs/2403.20266)

- Gutiérrez-Fandiño, A., Armengol-Estapé, J., Gonzalez-Agirre, A., & Villegas, M. (2021). Spanish Legalese Language Model and Corpora.

- Hendrycks, D., Burns, C., Kadavath, S., Arora, A., Basart, S., Tang, E., Song, D., & Steinhardt, J. (2021). Measuring Mathematical Problem Solving With the MATH Dataset. NeurIPS.

- Jansen, T., Tong, Y., Zevallos, V., & Suarez, P. O. (2022). Perplexed by Quality: A Perplexity-based Method for Adult and Harmful Content Detection in Multilingual Heterogeneous Web Data.

- Ostendorff, M., Blume, T., & Ostendorff, S. (2020). Towards an Open Platform for Legal Information. Proceedings of the ACM/IEEE Joint Conference on Digital Libraries in 2020, 385–388. Link

- Ostendorff, M., Suarez, P. O., Lage, L. F., & Rehm, G. (2024). LLM-Datasets: An Open Framework for Pretraining Datasets of Large Language Models. First Conference on Language Modeling. Link

- Palomar-Giner, J., Saiz, J. J., Espuña, F., Mina, M., Da Dalt, S., Llop, J., Ostendorff, M., Ortiz Suarez, P., Rehm, G., Gonzalez-Agirre, A., & Villegas, M. (2024). A CURATEd CATalog: Rethinking the Extraction of Pretraining Corpora for Mid-Resourced Languages. In N. Calzolari, M.-Y. Kan, V. Hoste, A. Lenci, S. Sakti, & N. Xue (Eds.), Proceedings of the 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING 2024) (pp. 335–349). ELRA and ICCL. Link

- Rae, J. W., Potapenko, A., Jayakumar, S. M., Hillier, C., & Lillicrap, T. P. (2019). Compressive Transformers for Long-Range Sequence Modelling. arXiv Preprint. Link

- Rodrigues, J., Gomes, L., Silva, J., Branco, A., Santos, R., Cardoso, H. L., & Osório, T. (2023). Advancing Neural Encoding of Portuguese with Transformer Albertina PT-*.

- Sharma, E., Li, C., & Wang, L. (2019). BIGPATENT: A Large-Scale Dataset for Abstractive and Coherent Summarization. CoRR, abs/1906.03741. Link

- Subramani, N., Luccioni, S., Dodge, J., & Mitchell, M. (2023). Detecting Personal Information in Training Corpora: An Analysis. 208–220. Link

The model was trained for 3 epochs. Afterwards, a small continual pre-training experiment was performed using the language distribution weights obtained in CITE XDOGE. This experiment ran for 34k steps, which corresponds to an additional 143B tokens. In total, the model saw 1.73T tokens during the pre-training phase.
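The token counts quoted in this card are mutually consistent, as the short calculation below illustrates. The last two lines are a rough inference only: the batch size of the continual pre-training run is not stated here, so the per-step figures assume that every sequence is packed to the full 8,192-token context.

```python
# Figures quoted in this card.
corpus_tokens = 530_000_000_000        # 0.53 trillion tokens in the corpus
epochs = 3
continual_tokens = 143_000_000_000     # additional tokens from continual pre-training
continual_steps = 34_000
context_length = 8_192

main_tokens = corpus_tokens * epochs                 # tokens seen over 3 epochs
total_tokens = main_tokens + continual_tokens        # all pre-training tokens

# ASSUMPTION: sequences are packed to the full context length.
tokens_per_step = continual_tokens / continual_steps
sequences_per_step = tokens_per_step / context_length

print(f"3 epochs:        {main_tokens / 1e12:.2f}T tokens")
print(f"total:           {total_tokens / 1e12:.2f}T tokens")
print(f"tokens per step: {tokens_per_step / 1e6:.1f}M")
print(f"sequences/step:  {sequences_per_step:.0f} (approximate)")
```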

For more information about the data, we refer the reader to the Salamandra family of models (Agirre et al., 2025).

Datasheet

Motivation

For what purpose was the dataset created? Was there a specific task in mind? Was there a specific gap that needed to be filled? Please provide a description.

The purpose of creating this dataset is to pre-train the Salamandra family of multilingual models with high performance in a large number of European languages (35) and code (including 92 different programming languages). In addition, we aim to represent especially the co-official languages of Spain: Spanish, Catalan, Galician, and Basque. This is the reason why we carry out an oversampling of these languages.

Following the Salamandra family of models, the dataset has been employed to pre-train the IberianLLM model, focused on Iberian Languages, English and code.

We detected that there is a great lack of massive multilingual data, especially in minority languages (Ostendorff & Rehm, 2023), so part of our efforts in the creation of this pre-training dataset have resulted in the contribution to large projects such as the Community OSCAR (Brack et al., 2024), which includes 151 languages and 40T words, or CATalog (Palomar-Giner et al., 2024), the largest open dataset in Catalan in the world.

Who created the dataset (e.g., which team, research group) and on behalf of which entity (e.g., company, institution, organization)?

The dataset has been created by the Language Technologies unit (LangTech) of the Barcelona Supercomputing Center - Centro Nacional de Supercomputación (BSC-CNS), which aims to advance the field of natural language processing through cutting-edge research and development and the use of HPC. In particular, it was created by the unit's data team, the main contributors being Javier Saiz, Ferran Espuña, and Jorge Palomar.

However, the creation of the dataset would not have been possible without the collaboration of a large number of collaborators, partners, and public institutions, which can be found in detail in the acknowledgements.

Who funded the creation of the dataset? If there is an associated grant, please provide the name of the grantor and the grant name and number.

This work/research has been promoted and financed by the Government of Catalonia through the Aina project.

Composition

What do the instances that comprise the dataset represent (e.g., documents, photos, people, countries)? Are there multiple types of instances (e.g., movies, users, and ratings; people and interactions between them; nodes and edges)? Please provide a description.

The dataset consists entirely of text documents in various languages. Specifically, data was mainly sourced from the following databases and repositories:

- Common Crawl: Repository that holds website data and is run by the Common Crawl non-profit organization. It is updated monthly and is distributed under the CC0 1.0 public domain license.

- GitHub: Community platform that allows developers to create, store, manage, and share their code. Repositories are crawled and then distributed with their original licenses, which may vary from permissive to non-commercial licenses.

- Wikimedia: Database that holds the collection databases managed by the Wikimedia Foundation, including Wikipedia, Wikibooks, Wikinews, Wikiquote, Wikisource, and Wikivoyage. It is updated monthly and is distributed under Creative Commons Attribution-ShareAlike License 4.0.

- EurLex: Repository that holds the collection of legal documents from the European Union, available in all of the EU’s 24 official languages and run by the Publications Office of the European Union. It is updated daily and is distributed under the Creative Commons Attribution 4.0 International license.

- Other repositories: Specific repositories were crawled under permission for domain-specific corpora, which include academic, legal, and newspaper repositories.

We provide a complete list of dataset sources at the end of this section.

Does the dataset contain all possible instances or is it a sample (not necessarily random) of instances from a larger set? If the dataset is a sample, then what is the larger set? Is the sample representative of the larger set (e.g., geographic coverage)? If so, please describe how this representativeness was validated/verified. If it is not representative of the larger set, please describe why not (e.g., to cover a more diverse range of instances, because instances were withheld or unavailable).

The dataset is a sample from multiple sources. It contains data in 8 Iberian languages: Spanish, Catalan, Basque, Portuguese, and Galician are the main focus, but Occitan, Aranese and Asturian are also included. As usual, English also carries substantial weight. Finally, 92 different programming languages are included, accounting for almost 20% of the pre-training data. Sources were sampled in proportion to their occurrence.

What data does each instance consist of? “Raw” data (e.g., unprocessed text or images) or features? In either case, please provide a description.

Each instance consists of a text document processed for deduplication, language identification, and source-specific filtering. Some documents required optical character recognition (OCR) to extract text from non-text formats such as PDFs.

Is there a label or target associated with each instance? If so, please provide a description.

Each instance is labeled with a unique identifier, the primary language of the content, and the URL for web-sourced instances. Additional labels were automatically assigned to detect specific types of content —harmful or toxic content— and to assign preliminary indicators of undesired qualities —very short documents, high density of symbols, etc.— which were used for filtering instances.

Is any information missing from individual instances? If so, please provide a description, explaining why this information is missing (e.g., because it was unavailable). This does not include intentionally removed information, but might include, e.g., redacted text.

No significant information is missing from the instances.

Are relationships between individual instances made explicit (e.g., users’ movie ratings, social network links)? If so, please describe how these relationships are made explicit.

Instances are related through shared metadata, such as source and language identifiers.

Are there recommended data splits (e.g., training, development/validation, testing)? If so, please provide a description of these splits, explaining the rationale behind them.

The dataset is split randomly into training, validation, and test sets.

Are there any errors, sources of noise, or redundancies in the dataset? If so, please provide a description.

Despite removing duplicated instances within each source, redundancy remains at the paragraph and sentence levels, particularly in web-sourced instances where SEO techniques and templates contribute to repeated textual patterns. Some instances may also be duplicated across sources due to format variations.

Is the dataset self-contained, or does it link to or otherwise rely on external resources (e.g., websites, tweets, other datasets)? If it links to or relies on external resources, a) are there guarantees that they will exist, and remain constant, over time; b) are there official archival versions of the complete dataset (i.e., including the external resources as they existed at the time the dataset was created); c) are there any restrictions (e.g., licenses, fees) associated with any of the external resources that might apply to a dataset consumer? Please provide descriptions of all external resources and any restrictions associated with them, as well as links or other access points, as appropriate.

The dataset is self-contained and does not rely on external resources.

Does the dataset contain data that might be considered confidential (e.g., data that is protected by legal privilege or by doctor–patient confidentiality, data that includes the content of individuals’ non-public communications)? If so, please provide a description.

The dataset does not contain confidential data.

Does the dataset contain data that, if viewed directly, might be offensive, insulting, threatening, or might otherwise cause anxiety? If so, please describe why. If the dataset does not relate to people, you may skip the remaining questions in this section.

The dataset includes web-crawled content, which may overrepresent pornographic material across languages (Kreutzer et al., 2022). Although pre-processing techniques were applied to mitigate offensive content, the heterogeneity and scale of web-sourced data make exhaustive filtering challenging. It is next to impossible to identify all adult content without filtering excessively, which may negatively affect certain demographic groups (Dodge et al., 2021).

Does the dataset identify any subpopulations (e.g., by age, gender)? If so, please describe how these subpopulations are identified and provide a description of their respective distributions within the dataset.

The dataset does not explicitly identify any subpopulations.

Is it possible to identify individuals (i.e., one or more natural persons), either directly or indirectly (i.e., in combination with other data) from the dataset? If so, please describe how.

Web-sourced instances in the dataset may contain personally identifiable information (PII) that is publicly available on the Web, such as names, IP addresses, email addresses, and phone numbers. While it would be possible to indirectly identify individuals through the combination of multiple data points, the nature and scale of web data makes it difficult to parse such information. In any case, efforts are made to filter or anonymize sensitive data during pre-processing, but some identifiable information may remain in the dataset.

Does the dataset contain data that might be considered sensitive in any way? If so, please provide a description.

Given that the dataset includes web-sourced content and other publicly available documents, instances may inadvertently reveal financial information, health-related details, or forms of government identification, such as social security numbers (Subramani et al., 2023), especially if the content originates from less-regulated sources or user-generated platforms.

Collection Process

How was the data collected?

This dataset is constituted by combining several sources, whose acquisition methods can be classified into three groups:

- Web-sourced datasets with some preprocessing available under permissive license (e.g. Common Crawl).

- Domain-specific or language-specific raw crawls (e.g. Spanish Crawling).

- Manually curated data obtained through collaborators, data providers (by means of legal assignment agreements) or open source projects (e.g. CATalog).

What mechanisms or procedures were used to collect the data? How were these mechanisms or procedures validated?

According to the three groups previously defined, these are the mechanisms used in each of them:

- Open direct download. Validation: data integrity tests.

- Ad-hoc scrapers or crawlers. Validation: software unit and data integrity tests.

- Direct download via FTP, SFTP, API or S3. Validation: data integrity tests.

If the dataset is a sample from a larger set, what was the sampling strategy?

The sampling strategy was to use the whole dataset resulting from the filtering explained in the ‘preprocessing/cleaning/labelling’ section, with the particularity that an upsampling of 2 (i.e. twice the probability of sampling a document) was performed for the co-official languages of Spain (Spanish, Catalan, Galician, Basque), and a downsampling of 1/2 was applied for code (half the probability of sampling a code document, evenly distributed among all programming languages).
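The effect of these multipliers can be sketched as follows. This is an illustration only, not the pipeline used by the team: the function name `sampling_weights` and the document counts are hypothetical, and only the multipliers (2 for the co-official languages of Spain, 1/2 for code) come from the text above.

```python
# Multipliers described in the text; every other category keeps a weight of 1.
MULTIPLIERS = {"es": 2.0, "ca": 2.0, "gl": 2.0, "eu": 2.0, "code": 0.5}


def sampling_weights(doc_counts, multipliers, default=1.0):
    """Return the normalised probability of sampling from each category."""
    raw = {name: count * multipliers.get(name, default)
           for name, count in doc_counts.items()}
    total = sum(raw.values())
    return {name: value / total for name, value in raw.items()}


# HYPOTHETICAL document counts, chosen equal so the multipliers are easy to see.
doc_counts = {"es": 100, "en": 100, "pt": 100, "code": 100}
weights = sampling_weights(doc_counts, MULTIPLIERS)

for name, weight in weights.items():
    print(f"{name}: {weight:.3f}")
```

With equal counts, a Spanish document is twice as likely to be drawn as an English one, and a code document half as likely.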

Who was involved in the data collection process and how were they compensated?

This data is generally extracted, filtered and sampled by automated processes. The code required to run these processes has been developed entirely by members of the LangTech data team, or otherwise obtained from open-source software. Furthermore, there has been no monetary consideration for acquiring data from suppliers.

Over what timeframe was the data collected? Does this timeframe match the creation timeframe of the data associated with the instances? If not, please describe the timeframe in which the data associated with the instances was created.

Data were acquired and processed from April 2023 to April 2024. However, as mentioned, much data has been obtained from open projects such as Common Crawl, which contains data from 2014, so it is the end date (04/2024) rather than the start date that is important.

Were any ethical review processes conducted? If so, please provide a description of these review processes, including the outcomes, as well as a link or other access point to any supporting documentation.

No particular ethical review process has been carried out as the data is mostly open and not particularly sensitive. However, we have an internal evaluation team and a bias team to monitor ethical issues. In addition, we work closely with ‘Observatori d'Ètica en Intel·ligència Artificial’ (OEIAC) and ‘Agencia Española de Supervisión de la Inteligencia Artificial’ (AESIA) to audit the processes we carry out from an ethical and legal point of view, respectively.

Preprocessing

Was any preprocessing/cleaning/labeling of the data done? If so, please provide a description. If not, you may skip the remaining questions in this section.

Instances of text documents were not altered, but web-sourced documents were filtered based on specific criteria along two dimensions:

- Quality: documents were scored with CURATE (Palomar-Giner et al., 2024) according to undesired qualities, such as a low number of lines, very short sentences, long footers and headers, and a high percentage of punctuation. Documents with a score lower than 0.8 were filtered out.

- Harmful or adult content: documents originating from Colossal OSCAR were filtered using LLM-Datasets (Ostendorff et al., 2024) based on the perplexity from a language model (‘harmful_pp’ field) provided by the Ungoliant pipeline (Abadji et al., 2021).
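The two filters above might be combined along the following lines. This is a hedged sketch rather than the CURATE or LLM-Datasets implementation: the `quality_score` key, the `filter_documents` function and the perplexity threshold are hypothetical, and the direction of the perplexity test (low perplexity treated as likely harmful) is an assumption that should be checked against the LLM-Datasets configuration. Only the 0.8 quality threshold and the `harmful_pp` field name come from the text.

```python
QUALITY_THRESHOLD = 0.8  # from the text: scores lower than 0.8 are filtered out


def filter_documents(docs, min_harmful_pp=None):
    """Yield the documents that pass the quality and harmful-content filters.

    docs: iterable of dicts with a 'quality_score' key (hypothetical name)
          and, for Colossal OSCAR documents, a 'harmful_pp' key.
    min_harmful_pp: HYPOTHETICAL threshold; documents whose perplexity falls
          below it are dropped (ASSUMPTION: low perplexity = likely harmful).
    """
    for doc in docs:
        if doc["quality_score"] < QUALITY_THRESHOLD:
            continue
        perplexity = doc.get("harmful_pp")
        if (min_harmful_pp is not None and perplexity is not None
                and perplexity < min_harmful_pp):
            continue
        yield doc


# Toy documents with made-up scores, for illustration only.
docs = [
    {"id": "a", "quality_score": 0.95, "harmful_pp": 900.0},
    {"id": "b", "quality_score": 0.60, "harmful_pp": 900.0},  # low quality
    {"id": "c", "quality_score": 0.90, "harmful_pp": 12.0},   # flagged by perplexity
    {"id": "d", "quality_score": 0.80},                       # no harmful_pp field
]
kept = [doc["id"] for doc in filter_documents(docs, min_harmful_pp=50.0)]
print(kept)
```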

Was the “raw” data saved in addition to the preprocessed/cleaned/labeled data? If so, please provide a link or other access point to the “raw” data.

The original raw data was not kept.

Is the software that was used to preprocess/clean/label the data available? If so, please provide a link or other access point.

Yes, the preprocessing and filtering software is open-sourced. The CURATE pipeline was used for Spanish Crawling and CATalog, and the Ungoliant pipeline was used for the OSCAR project.

Uses

Has the dataset been used for any tasks already? If so, please provide a description.

The dataset has been used to pre-train the Salamandra model family and the IberianLLM model.

What (other) tasks could the dataset be used for?

The data can be used primarily to pre-train other language models, which can then be used for a wide range of use cases. The dataset could also be used for other tasks such as fine-tuning language models, cross-lingual NLP tasks, machine translation, domain-specific text generation, and language-specific data analysis.

Is there anything about the composition of the dataset or the way it was collected and preprocessed/cleaned/labeled that might impact future uses? Is there anything a dataset consumer could do to mitigate these risks or harms?

Standard language varieties are over-represented in web-crawled content, which affects language model performance for minority languages. Language diversity in data is crucial to avoid bias, especially in encoding non-standard dialects, preventing the exclusion of demographic groups. Moreover, despite legal uncertainties in web-scraped data, we prioritize permissive licenses and privacy protection measures, acknowledging the challenges posed by personally identifiable information (PII) within large-scale datasets. Our ongoing efforts aim to address privacy concerns and contribute to a more inclusive linguistic dataset.

Are there tasks for which the dataset should not be used?

Distribution

Will the dataset be distributed to third parties outside of the entity on behalf of which the dataset was created? If so, please provide a description.

The dataset will not be released or distributed to third parties. The remaining questions on distribution are therefore omitted from this section.

Maintenance

Who will be supporting/hosting/maintaining the dataset?

The dataset will be hosted by the Language Technologies unit (LangTech) of the Barcelona Supercomputing Center (BSC). The team will ensure regular updates and monitor the dataset for any issues related to content integrity, legal compliance, and bias for the sources they are responsible for.

How can the owner/curator/manager of the dataset be contacted?

The data owner may be contacted with the email address [email protected].

Will the dataset be updated?

The dataset will not be updated.

If the dataset relates to people, are there applicable limits on the retention of the data associated with the instances? If so, please describe these limits and explain how they will be enforced.

The dataset does not keep sensitive data that could allow direct identification of individuals, apart from the data that is publicly available in web-sourced content. Due to the sheer volume and diversity of web data, it is not feasible to notify individuals or manage data retention on an individual basis. However, efforts are made to mitigate the risks associated with sensitive information through pre-processing and filtering to remove identifiable or harmful content. Despite these measures, vigilance is maintained to address potential privacy and ethical issues.

Will older versions of the dataset continue to be supported/hosted/maintained? If so, please describe how. If not, please describe how its obsolescence will be communicated to dataset consumers.

Since the dataset will not be updated, only the final version will be kept.

If others want to extend/augment/build on/contribute to the dataset, is there a mechanism for them to do so?

The dataset does not allow for external contributions.

Finetuning Data

This instruction-tuned variant has been trained with a mixture of 272k English, Spanish, Catalan, Basque, Galician and Portuguese multi-turn instructions gathered from open datasets:

| Dataset | ca | en | es | eu | gl | pt |

|---|---|---|---|---|---|---|

| alpaca-cleaned | – | 49,950 | – | – | – | – |

| aya-dataset | – | 3,941 | 3,851 | 939 | – | 8,995 |

| coqcat_train | 4,797 | – | – | – | – | – |

| databricks-dolly-15k | – | 15,011 | – | – | – | – |

| dolly-ca | 3,232 | – | – | – | – | – |

| flores-dev | 986 | 1,037 | 1,964 | 493 | 505 | – |

| mentor-ca | 7,119 | – | – | – | – | – |

| mentor-es | – | – | 7,122 | – | – | – |

| no-robots-system-prompt | – | 9,485 | – | – | – | – |

| oasst-ca | 2,517 | – | – | – | – | – |

| oasst2 | 750 | 31,086 | 15,438 | 190 | 197 | 1,203 |

| open-orca-gpt4-system-prompt | – | 49,996 | – | – | – | – |

| rag-multilingual | 16,043 | 14,997 | 11,263 | – | – | – |

| tower-blocks | – | 7,762 | 1,000 | – | – | 1,000 |

| Total | 35,444 | 183,265 | 40,638 | 1,622 | 702 | 11,198 |
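The totals row can be reproduced from the per-dataset counts. The short check below copies the figures from the table (a dash is treated as zero) and sums them per language and overall.

```python
LANGS = ["ca", "en", "es", "eu", "gl", "pt"]

# Instruction counts per dataset, in the column order of LANGS.
ROWS = {
    "alpaca-cleaned":               [0, 49_950, 0, 0, 0, 0],
    "aya-dataset":                  [0, 3_941, 3_851, 939, 0, 8_995],
    "coqcat_train":                 [4_797, 0, 0, 0, 0, 0],
    "databricks-dolly-15k":         [0, 15_011, 0, 0, 0, 0],
    "dolly-ca":                     [3_232, 0, 0, 0, 0, 0],
    "flores-dev":                   [986, 1_037, 1_964, 493, 505, 0],
    "mentor-ca":                    [7_119, 0, 0, 0, 0, 0],
    "mentor-es":                    [0, 0, 7_122, 0, 0, 0],
    "no-robots-system-prompt":      [0, 9_485, 0, 0, 0, 0],
    "oasst-ca":                     [2_517, 0, 0, 0, 0, 0],
    "oasst2":                       [750, 31_086, 15_438, 190, 197, 1_203],
    "open-orca-gpt4-system-prompt": [0, 49_996, 0, 0, 0, 0],
    "rag-multilingual":             [16_043, 14_997, 11_263, 0, 0, 0],
    "tower-blocks":                 [0, 7_762, 1_000, 0, 0, 1_000],
}

# Sum each language column, then sum the columns for the overall count.
totals = dict(zip(LANGS, (sum(column) for column in zip(*ROWS.values()))))
grand_total = sum(totals.values())

for lang, count in totals.items():
    print(f"{lang}: {count:,} ({count / grand_total:.1%})")
print(f"all languages: {grand_total:,}")
```

The per-language sums match the totals row, and the overall count of 272,869 instructions corresponds to the figure of roughly 272k quoted above.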

Evaluation

Gold-standard benchmarks

Evaluation is done using the Language Model Evaluation Harness (Gao et al., 2024). We evaluate on a set of tasks taken from SpanishBench, CatalanBench, BasqueBench and GalicianBench. These benchmarks include both new and existing tasks and datasets. In the tables below, we report results on a selection of evaluation datasets that represent the model's performance across a variety of tasks within these benchmarks.

We only use tasks that are human generated, human translated, or produced with a strong human-in-the-loop (i.e., machine translation followed by professional revision, or machine generation followed by human revision and annotation). This is why the number of tasks reported varies across languages. As more tasks that fulfill these requirements are published, we will update the presented results. We also intend to expand the evaluation to other languages, as long as the datasets meet our quality standards.

While implementing the evaluation, we observed several issues worth considering when replicating and interpreting the results presented. These include performance variations of about 1.5% on some tasks depending on the version of the transformers library used, and on whether tensor parallelism is used when loading the model. When implementing existing tasks, we carry out a comprehensive quality evaluation of the dataset, the Harness task itself, and the input that models see during evaluation. Our implementation (see links above) addresses multiple existing problems, such as errors in datasets and prompts and a lack of pre-processing. As a consequence, results will vary when using other Harness implementations, and may vary slightly depending on the replication setup.

It should be noted that these results are subject to all the drawbacks of every current gold-standard evaluation, and that the figures do not fully represent the model's capabilities and potential. We thus advise caution when reading and interpreting the results.

All results reported below are in a 5-shot setting.
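For readers replicating the 5-shot setup, a call along the following lines may serve as a starting point. It is only a sketch under stated assumptions: `MODEL_ID` is a hypothetical placeholder for wherever the weights are hosted, the task names are copied from the tables in this section and are assumed to be registered in the installed harness version, and loading in bfloat16 is an assumption based on the precision listed in the architecture table. Because the reported figures come from the authors' own task implementations, numbers obtained with the upstream harness may differ.

```python
# Hypothetical placeholder: replace with the actual repository id or local path.
MODEL_ID = "your-org/IberianLLM-7B-Instruct"


def build_eval_kwargs(model_id: str, tasks: list[str], num_fewshot: int = 5) -> dict:
    """Collect keyword arguments for lm_eval.simple_evaluate."""
    return {
        "model": "hf",  # Hugging Face transformers backend
        "model_args": f"pretrained={model_id},dtype=bfloat16",
        "tasks": list(tasks),
        "num_fewshot": num_fewshot,  # 5-shot, as stated above
    }


def run_eval(model_id: str, tasks: list[str]) -> dict:
    """Run the evaluation; needs the lm-eval package and access to the weights."""
    import lm_eval  # imported lazily so the helper above works without it

    return lm_eval.simple_evaluate(**build_eval_kwargs(model_id, tasks))


# Inspect the arguments without downloading anything.
print(build_eval_kwargs(MODEL_ID, ["xstorycloze_es", "wnli_es"]))
```

Calling `run_eval(MODEL_ID, ["xstorycloze_es", "wnli_es"])` would then launch the actual evaluation. Pinning the transformers version is advisable, given the version-dependent variation described above.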

* Since this model has not been trained with German, French or Italian data, the Flores scoring does not take into account subtasks that involve these languages.
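The exclusion described in the note above can be sketched as a simple filter over translation directions. The `src-tgt` naming of subtasks, the unweighted mean used for aggregation, and the scores in `example` are all assumptions made for illustration; they are not taken from the evaluation code or from the results in this card.

```python
# Languages absent from the training data, per the note above.
EXCLUDED = {"de", "fr", "it"}


def keep_subtask(pair: str) -> bool:
    """Return True if neither side of a 'src-tgt' pair is an excluded language."""
    src, tgt = pair.split("-")
    return src not in EXCLUDED and tgt not in EXCLUDED


def flores_score(bleu_by_pair: dict[str, float]) -> float:
    """Unweighted mean BLEU over the subtasks that survive the filter."""
    kept = [score for pair, score in bleu_by_pair.items() if keep_subtask(pair)]
    if not kept:
        raise ValueError("no subtasks left after filtering")
    return sum(kept) / len(kept)


# Placeholder scores invented for illustration only (not results from this card).
example = {"es-en": 30.0, "en-es": 20.0, "es-de": 10.0, "fr-es": 12.0}
print(flores_score(example))  # 25.0 (mean of es-en and en-es only)
```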

Spanish

| Category | Task | Metric | Result |

|---|---|---|---|

| Commonsense Reasoning | xstorycloze_es | acc | 70.09 |

| NLI | wnli_es | acc | 54.93 |

| QA | xquad_es | acc | 65.61 |

| Translation | flores_es | bleu | 24.42 |

Catalan

| Category | Task | Metric | Result |

|---|---|---|---|

| Commonsense Reasoning | copa_ca | acc | 79.4 |

| | xstorycloze_ca | acc | 70.48 |

| NLI | wnli_ca | acc | 52.11 |

| | xnli_ca | acc | 52.34 |

| Paraphrasing | paws_ca | acc | 62.05 |

| QA | arc_ca_easy | acc | 66.41 |

| | arc_ca_challenge | acc | 40.02 |

| | openbookqa_ca | acc | 36.8 |

| | piqa_ca | acc | 71.44 |

| | siqa_ca | acc | 50.82 |

| Translation | flores_ca | bleu | 30.65 |

Basque

| Category | Task | Metric | Result |

|---|---|---|---|

| Commonsense Reasoning | xcopa_eu | acc | 65.8 |

| | xstorycloze_eu | acc | 59.96 |

| NLI | wnli_eu | acc | 46.48 |

| QA | eus_exams | acc | 32.23 |

| | eus_proficiency | acc | 29.50 |

| | eus_trivia | acc | 38.83 |

| Reading Comprehension | eus_reading | acc | 32.95 |

| Translation | flores_eu | bleu | 16.97 |

Galician

| Category | Task | Metric | Result |

|---|---|---|---|

| Paraphrasing | paws_gl | acc | 58.60 |

| QA | openbookqa_gl | acc | 33.8 |

| Translation | flores_gl | bleu | 27.73 |

English

| Category | Task | Metric | Result |

|---|---|---|---|

| Commonsense Reasoning | copa | acc | 89 |

| | xstorycloze_en | acc | 72.47 |

| NLI | wnli | acc | 46.48 |

| | xnli_en | acc | 49.48 |

| QA | arc_easy | acc | 78.16 |

| | arc_challenge | acc | 47.78 |

| | openbookqa | acc | 33.2 |

| | piqa | acc | 76.66 |

| | social_iqa | acc | 50.82 |

| xquad_en | acc | 73.45 |

* The current LM Evaluation Harness implementation lacks correct pre-processing. These results were obtained with adequate pre-processing.

Additional information

Author

The Language Technologies Unit from Barcelona Supercomputing Center.

Contact

For further information, please send an email to [email protected].

Copyright

Copyright (c) 2024 by Language Technologies Unit, Barcelona Supercomputing Center.

Funding

This work has been promoted and financed by the Government of Catalonia through the Aina Project.

This work is funded by the Ministerio para la Transformación Digital y de la Función Pública - Funded by EU – NextGenerationEU within the framework of ILENIA Project with reference 2022/TL22/00215337, 2022/TL22/00215336, 2022/TL22/00215335, 2022/TL22/00215334.

Acknowledgements

This project has benefited from the contributions of numerous teams and institutions, mainly through data contributions, knowledge transfer or technical support.

In Catalonia, many institutions have been involved in the project. Our thanks to Òmnium Cultural, Parlament de Catalunya, Institut d'Estudis Aranesos, Racó Català, Vilaweb, ACN, Nació Digital, El món and Aquí Berguedà.

At the national level, we are especially grateful to our ILENIA project partners: CENID, HiTZ and CiTIUS for their participation. We also extend our genuine gratitude to the Spanish Senate and Congress, Fundación Dialnet, Fundación Elcano and the ‘Instituto Universitario de Sistemas Inteligentes y Aplicaciones Numéricas en Ingeniería (SIANI)’ of the University of Las Palmas de Gran Canaria.

At the international level, we thank the Welsh government, DFKI, the Occiglot project, especially Malte Ostendorff, and The Common Crawl Foundation, especially Pedro Ortiz, for their collaboration. We would also like to give special thanks to the NVIDIA team, with whom we have met regularly, especially to Ignacio Sarasua, Adam Henryk Grzywaczewski, Oleg Sudakov, Sergio Perez, Miguel Martinez, Felipes Soares and Meriem Bendris. Their constant support has been especially appreciated throughout the entire process.

Their valuable efforts have been instrumental in the development of this work.

Disclaimer

Be aware that the model may contain biases or other unintended distortions. When third parties deploy systems or provide services based on this model, or use the model themselves, they bear the responsibility for mitigating any associated risks and ensuring compliance with applicable regulations, including those governing the use of Artificial Intelligence.

The Barcelona Supercomputing Center, as the owner and creator of the model, shall not be held liable for any outcomes resulting from third-party use.

Citation

Work in progress, paper coming soon.


@article{salamandra,

title={Salamandra Technical Report},

author={LangTech@BSC},

year={2024},

url = {https://arxiv.org/abs/2502.08489}

}

License
