Negative-aware Fine-Tuning: Bridging Supervised Learning and Reinforcement Learning in Math Reasoning

🚨 NFT-32B is specifically designed for mathematical reasoning tasks. We do not recommend using this model for general conversation or non-mathematical tasks.

Tsinghua University, NVIDIA, Stanford University

Huayu Chen, Kaiwen Zheng, Qinsheng Zhang, Ganqu Cui, Yin Cui, Haotian Ye, Tsung-Yi Lin, Ming-Yu Liu, Jun Zhu, Haoxiang Wang

[Paper] | [Blog] | [Code] | [Dataset] | [Models] | [Citation]

Model Overview

Description

NFT-32B is a math reasoning model fine-tuned from Qwen2.5-32B using the Negative-aware Fine-Tuning (NFT) algorithm. NFT is a supervised learning approach that enables LLMs to reflect on their failures and improve autonomously with no external teachers. Unlike traditional supervised methods that discard incorrect answers, NFT constructs an implicit negative policy to model and learn from these failures, achieving performance comparable to leading RL algorithms like GRPO and DAPO.

As the larger counterpart to NFT-7B, this 32B model shows that NFT scales well: it raises the average score across six math benchmarks from 29.6% (Qwen2.5-32B) to 59.2% while keeping the efficiency of a supervised learning method.

This model is for research and development only.

Model Developer

NVIDIA, Tsinghua University, Stanford University

License

This model is released under the NVIDIA Non-Commercial License. The model is for research and development only.

Deployment Geography

Global

Release Date

Hugging Face: 06/27/2025

- NFT-7B: https://huggingface.co/nvidia/NFT-7B/

- NFT-32B: https://huggingface.co/nvidia/NFT-32B/

Use Case

Mathematical reasoning and problem-solving, including:

- Competition-level mathematics (AIME, AMC, Olympiad)

- General mathematical reasoning (MATH500, Minerva Math)

- Step-by-step mathematical solution generation

Model Architecture

Architecture Type: Transformer decoder-only language model

Network Architecture: Qwen2.5 with RoPE, SwiGLU, RMSNorm, and Attention QKV bias

NFT-32B is post-trained based on Qwen2.5-32B and follows the same model architecture:

- Number of Parameters: 32.5B

- Number of Parameters (Non-Embedding): 31.0B

- Number of Layers: 64

- Number of Attention Heads (GQA): 40 for Q and 8 for KV
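To make the GQA figures concrete, the sketch below estimates the key/value cache the model needs per token. It assumes a head dimension of 128 (hidden size 5,120 divided by 40 query heads, taken from the public Qwen2.5-32B config rather than from this card) and bf16 storage at 2 bytes per element; inference engines add their own overhead, so treat the result as a lower bound.

```python
# Rough KV-cache estimate for NFT-32B (same architecture as Qwen2.5-32B).
# ASSUMPTION: head_dim = 5120 / 40 = 128, from the public Qwen2.5-32B config.
# ASSUMPTION: keys and values are stored in bf16 (2 bytes per element).

NUM_LAYERS = 64
NUM_Q_HEADS = 40
NUM_KV_HEADS = 8
HEAD_DIM = 128
BYTES_PER_ELEM = 2


def queries_per_kv_head() -> int:
    """Number of query heads that share one key/value head under GQA."""
    return NUM_Q_HEADS // NUM_KV_HEADS


def kv_cache_bytes(num_tokens: int) -> int:
    """Bytes needed to cache keys and values for `num_tokens` tokens."""
    # Factor 2 = one key tensor plus one value tensor per layer.
    per_token = 2 * NUM_LAYERS * NUM_KV_HEADS * HEAD_DIM * BYTES_PER_ELEM
    return per_token * num_tokens


if __name__ == "__main__":
    print(queries_per_kv_head())               # 5
    print(kv_cache_bytes(1) / 1024)            # 256.0 (KiB per token)
    print(kv_cache_bytes(131_072) / 1024**3)   # 32.0 (GiB for a full 131,072-token context)
```

With 40 key/value heads (plain multi-head attention) the same cache would be five times larger; sharing each KV head across 5 query heads is where GQA saves memory.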

Input

Input Type(s): Text

Input Format: String

Input Parameters: One-dimensional (1D)

Other Properties Related to Input:

- Context length up to 131,072 tokens

- Mathematical problems should be clearly stated

- Supports LaTeX notation for mathematical expressions

Output

Output Type(s): Text

Output Format: String

Output Parameters: One-dimensional (1D)

Other Properties Related to Output:

- Step-by-step mathematical reasoning

- Final answers should be enclosed in \boxed{}

- Supports LaTeX notation for mathematical expressions

- Can generate up to 8,192 tokens
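Because the final answer is wrapped in \boxed{}, it can be pulled out of a response with a small brace-matching parser. The helper below is hypothetical (extract_boxed_answer is not part of any released NFT code): it takes the last \boxed{...} in the text and counts brace depth so nested LaTeX such as \boxed{\frac{1}{2}} is returned whole, which a simple regex would truncate.

```python
from typing import Optional


def extract_boxed_answer(text: str) -> Optional[str]:
    """Return the contents of the last \\boxed{...} in `text`, or None.

    Hypothetical helper: braces are matched by depth counting so nested
    LaTeX like \\boxed{\\frac{1}{2}} is returned whole.
    """
    marker = "\\boxed{"
    start = text.rfind(marker)
    if start == -1:
        return None
    begin = start + len(marker)
    depth = 1
    for j in range(begin, len(text)):
        if text[j] == "{":
            depth += 1
        elif text[j] == "}":
            depth -= 1
            if depth == 0:
                return text[begin:j].strip()
    return None  # unbalanced braces, e.g. a generation cut off mid-answer


if __name__ == "__main__":
    print(extract_boxed_answer(r"So $x = 9$, giving \boxed{9}."))       # 9
    print(extract_boxed_answer(r"The ratio is \boxed{\frac{1}{2}}."))  # \frac{1}{2}
    print(extract_boxed_answer("No final answer here."))               # None
```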

Software Integration

Our AI models are designed and/or optimized to run on NVIDIA GPU-accelerated systems. By leveraging NVIDIA’s hardware (e.g. GPU cores) and software frameworks (e.g., CUDA libraries), the model achieves faster training and inference times compared to CPU-only solutions.

Runtime Engine(s):

- Transformers (4.37.0+)

- vLLM

- TensorRT-LLM

Supported Hardware Microarchitecture Compatibility:

- NVIDIA Ampere

- NVIDIA Hopper

- NVIDIA Blackwell

Operating System(s):

- Linux

Model version: v1.0

Training Method

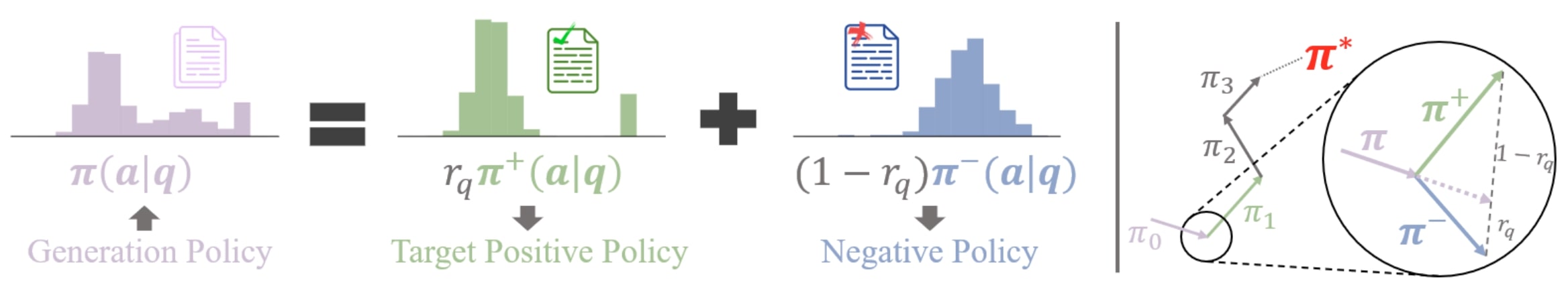

The NFT training pipeline consists of three main components:

Data Collection: The model generates answers to math questions, which are split into positive (correct) and negative (incorrect) datasets based on answer correctness.
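The split can be sketched as follows. This is a minimal illustration, not the released NFT pipeline: it assumes each sampled response has already been reduced to a final-answer string (for example, the contents of its \boxed{}), uses exact string match against the reference answer as a stand-in for the automated verifier, and the names Sample and split_by_correctness are hypothetical. It also returns the per-question correctness rate, which is the quantity r_q in the NFT objective.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Sample:
    question: str
    answer: str   # final answer extracted from the model's response
    reward: int   # 1 if correct, 0 otherwise


def split_by_correctness(
    question: str, predicted: List[str], reference: str
) -> Tuple[List[Sample], List[Sample], float]:
    """Label sampled answers, split them, and return the correctness rate r_q.

    ASSUMPTION: exact string match after stripping whitespace stands in for
    a real math-equivalence checker.
    """
    samples = [
        Sample(question, p, int(p.strip() == reference.strip())) for p in predicted
    ]
    positives = [s for s in samples if s.reward == 1]
    negatives = [s for s in samples if s.reward == 0]
    r_q = len(positives) / len(samples) if samples else 0.0
    return positives, negatives, r_q


if __name__ == "__main__":
    pos, neg, r_q = split_by_correctness("q1", ["9", "2", "9", "9"], "9")
    print(len(pos), len(neg), r_q)  # 3 1 0.75
```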

Implicit Negative Policy: NFT models the negative answers with an implicit negative policy that is parameterized by the same positive policy being optimized. This allows direct policy optimization on all generations, both correct and incorrect.
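One way to see how the negative policy can share parameters with the positive one: if π is the policy that generated the answers and r_q is the fraction of its answers to q that are correct, then π is a mixture of its correct part π⁺ and its incorrect part π⁻. Replacing π⁺ with the learnable π_θ⁺ and solving for π⁻ gives the sketch below (a reading consistent with the NFT objective, not the paper's full derivation):

```latex
\[
\begin{aligned}
\pi(a \mid q) &= r_q\,\pi^+(a \mid q) + (1 - r_q)\,\pi^-(a \mid q) \\
\pi_\theta^-(a \mid q) &= \frac{\pi(a \mid q) - r_q\,\pi_\theta^+(a \mid q)}{1 - r_q} \\
\frac{\pi_\theta^-(a \mid q)}{\pi(a \mid q)} &= \frac{1 - r_q\,\pi_\theta^+(a \mid q) / \pi(a \mid q)}{1 - r_q}
\end{aligned}
\]
```

The last ratio is the argument of the second logarithm in L_NFT, so the loss on negative answers updates π_θ⁺ without a separate negative model.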

Policy Optimization: Both positive and negative answers are used to optimize the LLM policy via supervised learning with the NFT objective:

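L_NFT(θ) = r[-log(π_θ⁺(a|q) / π(a|q))] + (1-r)[-log((1 - r_q * (π_θ⁺(a|q) / π(a|q))) / (1-r_q))]

where a is an answer sampled for question q, r ∈ {0, 1} indicates whether a is correct, r_q is the fraction of sampled answers to q that are correct, π is the policy that generated the answers, and π_θ⁺ is the positive policy being optimized.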
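The objective can be written per answer as a small function. This is a minimal sketch in plain Python, not the authors' implementation: it works on sequence-level log-probabilities (logp_theta under π_θ⁺ and logp_old under the data-generating policy π), and it clamps the negative-branch argument at a small eps. The clamp is an assumption added here, because 1 - r_q·π_θ⁺/π can reach zero or go negative, where the logarithm is undefined. A training implementation would apply the same formula to token-level tensors in a framework such as PyTorch.

```python
import math


def nft_loss(logp_theta: float, logp_old: float, r: int, r_q: float,
             eps: float = 1e-6) -> float:
    """NFT loss for one answer a to question q.

    logp_theta: log pi_theta^+(a|q), the positive policy being optimized
    logp_old:   log pi(a|q), the policy that generated the answer
    r:          1 if the answer is correct, 0 otherwise
    r_q:        fraction of sampled answers to q that are correct
    eps:        ASSUMPTION: floor that keeps the negative-branch log finite
    """
    if r == 1:
        # Positive answers: -log(pi_theta^+ / pi).
        return -(logp_theta - logp_old)
    # Negative answers: -log of the implicit negative-policy ratio
    # (1 - r_q * pi_theta^+/pi) / (1 - r_q). A negative answer exists only
    # when r_q < 1, so the denominator is nonzero here.
    ratio = math.exp(logp_theta - logp_old)  # pi_theta^+(a|q) / pi(a|q)
    neg_ratio = max((1.0 - r_q * ratio) / (1.0 - r_q), eps)
    return -math.log(neg_ratio)


if __name__ == "__main__":
    print(nft_loss(-5.0, -5.0, r=0, r_q=0.5))         # 0.0 loss when pi_theta^+ equals pi
    print(nft_loss(-4.9, -5.0, r=0, r_q=0.5) > 0)     # True: raising a wrong answer costs
    print(nft_loss(-4.9, -5.0, r=1, r_q=0.5) < 0)     # True: raising a right answer helps
```

Both branches are zero when π_θ⁺ equals π. Raising the probability of an incorrect answer makes its loss positive, which pushes π_θ⁺ away from it, while raising the probability of a correct answer lowers the loss.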

Training Datasets

Dataset: DAPO-Math-17k

Dataset Size: 17k (math problems)

Data Collection Method: Automated

Labeling Method by dataset: Automated

The entire dataset is used for training. The model is then evaluated directly on separate math benchmarks, listed below.

Evaluation Datasets

NFT-32B is evaluated on 6 mathematical reasoning benchmarks:

- AIME 2024 (30 problems) & 2025 (30 problems): American Invitational Mathematics Examination

- AMC 2023 (40 problems): American Mathematics Competitions

- MATH500 (500 problems): A subset of the MATH dataset

- OlympiadBench (675 problems): Olympiad-level competition mathematics problems

- Minerva Math (272 problems): Google's mathematical reasoning benchmark

Data Collection Method: Human

Labeling Method by dataset: Human

Performance

NFT-32B achieves state-of-the-art performance among supervised learning methods for mathematical reasoning:

| Benchmark | NFT-32B | Qwen2.5-32B | Improvement (percentage points) |
|---|---|---|---|
| AIME24 (avg@32) | 37.8% | 4.1% | +33.7 |
| AIME25 (avg@32) | 31.5% | 1.0% | +30.5 |
| MATH500 | 88.4% | 68.6% | +19.8 |
| AMC23 (avg@32) | 93.8% | 45.0% | +48.8 |
| OlympiadBench | 55.0% | 31.1% | +23.9 |
| Minerva Math | 48.9% | 27.9% | +21.0 |
| Average | 59.2% | 29.6% | +29.6 |
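The table's aggregates can be reproduced with a few lines. Two points are inferred rather than stated on this card: the Average row is an unweighted mean over the six benchmarks (the reported values match this), and avg@32 is assumed to be the standard avg@k metric, i.e. accuracy over 32 sampled responses per problem, averaged across problems. avg_at_k is a hypothetical helper.

```python
from statistics import mean
from typing import Dict, List

# Scores copied from the table above (percent).
NFT_32B: Dict[str, float] = {
    "AIME24": 37.8, "AIME25": 31.5, "MATH500": 88.4,
    "AMC23": 93.8, "OlympiadBench": 55.0, "Minerva Math": 48.9,
}
QWEN25_32B: Dict[str, float] = {
    "AIME24": 4.1, "AIME25": 1.0, "MATH500": 68.6,
    "AMC23": 45.0, "OlympiadBench": 31.1, "Minerva Math": 27.9,
}


def avg_at_k(correct: List[List[bool]]) -> float:
    """avg@k in percent: per-problem accuracy over k samples, averaged over problems.

    ASSUMPTION: standard avg@k definition; correct[i][j] says whether
    sample j for problem i was judged correct.
    """
    return 100.0 * mean(sum(row) / len(row) for row in correct)


if __name__ == "__main__":
    # Unweighted mean across the six benchmarks reproduces the Average row.
    print(round(mean(NFT_32B.values()), 1))     # 59.2
    print(round(mean(QWEN25_32B.values()), 1))  # 29.6
    # Gains are absolute percentage points, e.g. AMC23: 93.8 - 45.0.
    print(round(NFT_32B["AMC23"] - QWEN25_32B["AMC23"], 1))  # 48.8
    # Toy avg@4 over two problems with 3/4 and 1/4 correct.
    print(avg_at_k([[True, True, True, False], [False, True, False, False]]))  # 50.0
```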

Notably, NFT-32B performs similarly to DAPO (59.2% vs 59.9%) while using a simpler supervised learning approach.

Usage

NFT-32B is optimized for mathematical reasoning tasks. For best results, use clear mathematical prompts and request step-by-step reasoning.

The model can be used with the Hugging Face Transformers library:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "nvidia/NFT-32B"

model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",  # load in the checkpoint's native precision
    device_map="auto",   # place (and if needed shard) the model across available GPUs
)
tokenizer = AutoTokenizer.from_pretrained(model_name)

# Example math problem
problem = "Find the value of $x$ that satisfies the equation $\\sqrt{x+7} = x-5$."

# Format the prompt to encourage step-by-step reasoning
prompt = f"{problem}\nPlease reason step by step, and put your final answer within \\boxed{{}}."

messages = [
    {"role": "user", "content": prompt}
]
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True,
)
# With device_map="auto", send inputs to the device that holds the model's first layers
model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

# Generate response with greedy decoding for deterministic output.
# In Transformers, greedy decoding is selected with do_sample=False;
# temperature=0 is rejected when sampling is enabled.
generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=4096,  # step-by-step solutions often exceed a few hundred tokens
    do_sample=False,
)

# Drop the prompt tokens so only the newly generated text is decoded
generated_ids = [
    output_ids[len(input_ids):] for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)
]

response = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
print(response)
```

Usage Recommendations

- Temperature: Use greedy decoding for deterministic outputs (do_sample=False in Transformers, temperature=0 in vLLM), or a temperature of 0.1-0.3 for slight variation

- Sampling: For best results on competition problems, consider using multiple samples with majority voting

- Format: Include instructions for step-by-step reasoning directly in the user prompt

- Final Answer: Instruct the model to put the final answer in \boxed{}

- Long Context: This model supports a context of up to 131K tokens, making it suitable for complex multi-step problems

- Language: This model is primarily trained on English mathematical problems
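Majority voting can be sketched as follows, assuming several responses have already been sampled for the same problem (for example at temperature 0.1-0.3). majority_vote and last_boxed are hypothetical helpers: the vote extracts each response's last \boxed{} answer, ignores responses without one, and returns the most frequent answer. Answers are compared as exact strings, so equivalent forms such as 0.5 and \frac{1}{2} are counted separately; a real pipeline would normalize them first.

```python
from collections import Counter
from typing import List, Optional


def last_boxed(text: str) -> Optional[str]:
    """Contents of the last \\boxed{...} in `text`, matching nested braces."""
    start = text.rfind("\\boxed{")
    if start == -1:
        return None
    begin = start + len("\\boxed{")
    depth = 1
    for j in range(begin, len(text)):
        if text[j] == "{":
            depth += 1
        elif text[j] == "}":
            depth -= 1
            if depth == 0:
                return text[begin:j].strip()
    return None  # unbalanced braces, e.g. a truncated generation


def majority_vote(responses: List[str]) -> Optional[str]:
    """Most common boxed answer across sampled responses, or None if none has one.

    Ties go to the answer seen first, since Counter.most_common orders
    equal counts by first occurrence.
    """
    answers = [a for a in (last_boxed(r) for r in responses) if a is not None]
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]


if __name__ == "__main__":
    samples = [
        r"... so $x = 9$. \boxed{9}",
        r"... both roots work: \boxed{2}",
        r"... checking $x = 2$ fails, so \boxed{9}",
        "... (generation truncated before a final answer)",
    ]
    print(majority_vote(samples))  # 9
```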

Requirements

Qwen2.5 support is built into Hugging Face Transformers. We recommend using the latest version, and at minimum:

transformers>=4.37.0

Citation

If you find our project helpful, please consider citing:

```bibtex
@article{chen2025bridging,
  title   = {Bridging Supervised Learning and Reinforcement Learning in Math Reasoning},
  author  = {Huayu Chen and Kaiwen Zheng and Qinsheng Zhang and Ganqu Cui and Yin Cui and Haotian Ye and Tsung-Yi Lin and Ming-Yu Liu and Jun Zhu and Haoxiang Wang},
  journal = {arXiv preprint arXiv:2505.18116},
  year    = {2025}
}
```

Known Limitations

- Domain Specificity: This model is specifically trained for mathematical reasoning and may not perform well on general conversation or non-mathematical tasks

- Calculation Errors: While the model shows strong reasoning abilities, it may still make arithmetic errors in complex calculations

- Context Understanding: The model may struggle with problems requiring real-world context or domain knowledge outside mathematics

- Resource Requirements: The 32B model requires significant GPU memory for inference
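For a sense of scale, the memory taken by the weights alone can be estimated from the 32.5B parameter count. This back-of-the-envelope sketch assumes 2 bytes per parameter for bf16 and 4 for fp32, and ignores the KV cache, activations, and framework overhead, so actual usage will be higher.

```python
PARAMS = 32.5e9  # total parameters, from the Model Architecture section


def weight_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory for the model weights alone, in GiB."""
    return num_params * bytes_per_param / 1024**3


if __name__ == "__main__":
    print(f"bf16: {weight_gib(PARAMS, 2):.1f} GiB")  # bf16: 60.5 GiB
    print(f"fp32: {weight_gib(PARAMS, 4):.1f} GiB")  # fp32: 121.1 GiB
```

In bf16 the weights alone fill most of a single 80 GB H100, so longer generations typically need the model sharded across several GPUs.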

Bias Considerations

The model is trained on mathematical problems which are inherently objective. However, the training data may reflect biases in problem selection, difficulty distribution, and mathematical notation preferences from the source datasets.

Inference:

- Acceleration Engine: TensorRT-LLM, vLLM, SGLang

- Test Hardware: NVIDIA H100

Ethical Considerations

NVIDIA believes Trustworthy AI is a shared responsibility and we have established policies and practices to enable development for a wide array of AI applications. When downloaded or used in accordance with our terms of service, developers should work with their internal model team to ensure this model meets requirements for the relevant industry and use case and addresses unforeseen product misuse.

Please report security vulnerabilities or NVIDIA AI Concerns here.
